Today, May 15, 2026, is the launch of the Spring 2026 Refresh/Update of my book, Time Molecules, is available. This is not a new edition of the book, but a significant expansion of the material I’ve placed in its supplemental GitHub repository and this blog site. The refresh expands on the core ideas, strengthening the implementation, and making the system far more friendly to people and AI agents. This refresh work fills out what would have expanded Time Molecules from about 300 pages, to closer to 1000 pages and another year of work (an eon in “AI years”).

This refresh includes new and expanded material on AI-agent skills, event and case properties, Bayesian probabilities, OpenTelemetry-style event context, Markov model comparison, graph outputs, metadata enrichment, semantic-layer integration, and MPP-oriented implementation patterns.

Think of this refresh/update as Time Molecules Volume 2, but in blog and repo form. And, whereas Time Molecules is holistic, pulling together many disciplines, this Refresh is topic-oriented.

Time Molecules is about:

- Recognizing that stories are the transactional unit of human intelligence.

- Stories are a sequence of related events over time. Stories usually reflect some pattern in the world, so stories do at least rhyme (as it’s been popular to say lately).

- Markov models are the abstractions of rhyming stories.

- Applying a “time-centric” approach to the current “tuple-centric” (think of tuples as a checklist of characteristics that define something) mainstream business intelligence (BI) principles we’ve honed over decades towards extracting value from them.

To help you decide whether reading on it worth your time, the following three articles represent HYPOTHETICAL situations that served as the direction for Time Molecules in the AI era. All three require a foundation of systems thinking:

- Multi-Domain Retail Enterprise: Hypothetical case study of a large multi-hospital health system where Time Molecules serves as the precursor pattern layer that compares normal-time vs. pre-rare-event sliced Markov models to raise modest Bayesian odds on compound rare events (ICU surges, code-blue clusters) 24–48 hours before they hit, all while staying inside the hospital’s own data.

- Managing a Complex System: Hypothetical case study of a BLM-scale federal agency managing 245 million acres where Time Molecules acts as the time-side semantic layer that connects ecological, political, and monetary process neighborhoods to reveal how one AI-driven policy change ripples across domains.

- Forecasting Rare Events: Hypothetical case study of a city-wide multi-agency resilience hub where Time Molecules acts as a privacy-first ‘collective gut-feeling’ layer that detects precursor sequences across weather, dispatch, infrastructure, and aggregated hospital signals to give modest Bayesian odds lifts on rare regional hospital surges (storms, earthquakes, mass events) without ever touching individual data.

Analyzing from a time-centric foundation is the counterpart to analyzing from “thing-centric” (tuples)—zero-dimensional points of knowledge, aka facts, symbols, labels. In current BI, the primary mode is that we take a set of these symbols and apply them to a big game of “connect the dots” with models in our brains that we’ve learned.

Instead, the sequence-centric foundation of Time Molecules starts with one-dimensional sequences as the primary unit. As we know, the jump from 0D to 1D is much more profound than an increase of 1 might intuitively indicate. Think of how we went from OLTP to OLAP (Data Warehouses, cubes) to make facts (transactions) analyzable. We now need to go from event logs to Process Warehouse to make sequences analyzable. And Time Molecules is the modeling layer for that process warehouse.

Traditional BI organized facts so we could analyze what is. Time Molecules organizes event sequences so we can analyze how things unfold—and compare those patterns across the enterprise.

Although invoking terms like BI, cubes, OLAP will trigger, “been there, done that” (aka “That old thing again!”), this is really like a full-on Version 2.0 of the foundational goal of BI—the need to integrate data across all domains for a big-picture, high perspective view. Think of our traditional tuple-based BI at this moment as Version 1.99—that began with the 1.0 of Bill Inmon and Ralph Kimball back in the 1990s, and the 0.99 representing all that evolved in traditional BI since then.

This 2.0 extends us from symbol-oriented into systems-oriented—a full dimensional improvement. It makes intuitive sense to me because I still recall spending most of grade school memorizing facts I would be tested on. Not much that focused on the skill of dealing cause and effect, complexity, probability. Naturally thinking in terms of processes is systems thinking.

This is not just analysis, but about creating reusable memory of how processes unfold.

Lastly, I need to mention that the sample solution (named TimeSolution) is a “SQL Server” solution. It was originally a companion to the Time Molecules book. My goal at the time of publication (May 2025) was to provide a play-along that is relatively easy and inexpensive to install (free “Developer Edition”) and centers around the relatively universal language of SQL that should be held by my expected reader. But this refresh takes it a significant way towards an “AI Agent-Friendly”, highly scalable solution, serving a dual purpose.

Intuition for Time Molecules

Based on feedback over the year, it’s important to cover intuition for Time Molecules. It’s not alien UAP technology, but in the BI world, it’s not exactly mainstream. On one hand, it looks like it’s just process mining, or sequence analysis, or systems thinking. On the other hand, especially for BI people, it might seem like a big leap from dimensional models. So it’s a good idea to share conceptually why I authored Time Molecules.

One way to build intuition of Time Molecules is to think of events the way we think of letters or chemical elements. A single event, like a single letter or atom, has limited meaning on its own. But when events are sequenced, they form patterns—like letters form words and sentences, or atoms form peptides and proteins. Markov models then act as abstractions of many similar sequences, much like recurring molecular structures in chemistry. And just as peptides can link into larger proteins, these sequence patterns can connect into broader process structures. The important point is not the individual event, but the structures that emerge when events combine over time. I describe this analogy more fully in the abstract of intuition I provide in the Time Molecules book: Intuition for Time Molecules from the Book

If you’d like to dive deeper into the intuition, read on—or if that’s enough analogy, skip to What’s New in the Spring 2026 Refresh.

Life, business, society, indeed the universe itself, are made of interacting processes. I take the wisdom of Heraclitus to heart—”We never walk in the same river twice.” Even something that looks perfectly still (like a seemingly inert rock) is engaged in cycles, but at a smaller level (like the cycles within a plant) or that take too long for us to notice (like the movement of tectonic plates). In our human timeframe, it can be a sales cycle, a hiring cycle, a patient-care cycle, a supply chain cycle, even a morning routine—these are all processes. They do not exist in isolation. They touch, interfere, support, constrain, and sometimes damage one another. Life is a massively parallel weave of interacting processes.

For all the systems on Earth, under normal circumstances, we don’t notice the very small, under-the-hood systems, nor do we see the “bigger than life” systems that operate well outside our human framework. The systems we do normally see are very much aligned with the tip of the iceberg analogy.

If there were nothing encouraging these cycles to hold together—no structure, no adaptation, no feedback, no design, no intent where intent exists—everything would dissolve into chaos. Instead, what we see is partial, flexible order. Processes repeat, but never perfectly. They drift. They leak. The wear down and eventually breaks. They require maintenance. Most of that happens because they create friction with other processes. Out of that friction comes much of the world we actually experience. A perfect process might as well be a still photo or encoded in Python. Where’s the fun in that?

Time Molecules is about discovering and studying webs of friction in every process, in transitions and where processes bump into each other.

Time Molecules is about seeing how those processes interact more clearly. Not just as isolated sequences or even isolated processes (an abstraction of many like cycles), but as living patterns of interaction, change over time, and vary across context. It is about making those patterns more analyzable, so we can better understand what is happening, how things are shifting, and what the effects of intervention might be.

Every fact we learn is connected to a story. There is a story behind every sales figure and a trajectory of resultant events to come.

We exchange facts/symbols/labels with each other because facts are easy. A number, a category, a customer ID, a timestamp, a sales figure. We hand them off and the recipient seamlessly plugs them into the mental model of the world they already carry. For most of human history—and still for most people living and working today—that was good enough. Richly dynamic experience coalesced disparate facts into a story. You could feel how things usually unfolded, where the friction would appear, what might happen next. Our intuition did the heavy lifting all this time, but now we can feasibly offload much of that intellectual horsepower to today’s level of AI.

But this seamless handoff of facts makes it easy to settle into the impression that things live in a vacuum. Every “thing” we measure is already a visible slice of a much larger, interconnected system of processes. Those processes are the real fabric of business, of life, of everything that actually happens. And processes are not easy to exchange. Until recently, the awareness of the process-level was mostly the realm of higher animal intelligence.

Traditional BI has been very good at computing values. It gives us counts, sums, averages, trends, ratios, dashboards, and even machine learning models. But for the most part, we took those values and plugged them into models in our heads. Because of that, we did not always notice what BI systems lacked. The systems gave us measurements, but much of the process model—the part about how things actually unfold through time—remained outside the system, carried implicitly by human intuition.

Even the more time-centric components of BI were fragmented. Time series and trend charts helped us see movement, but not in a way that naturally let us study how different processes affect one another, or how time-based models could be sliced and diced the way OLAP cubes let us slice and dice aggregated facts. And yet BI was already closer to this than it may have seemed, because the heart of a star schema is a fact log organized around a ubiquitous dimension: time.

Time Molecules is the time-oriented version of what OLAP did for things. It treats event sequences — customer journeys, hospital stays, support incidents, machine workflows, AI agent runs — as first-class, analyzable objects. It compresses thousands or millions of similar stories into Markov models that act as compact, probabilistic abstractions. Those abstractions can then be sliced, diced, compared, and reasoned over across time, location, segment, priority, or any other dimension — exactly the way we used to slice facts in OLAP.

Data warehouses integrated facts across domains. Time Molecules integrates processes across domains.

The time-side of the enterprise becomes shared, comparable, discoverable infrastructure. This is not just about optimizing the processes we already know (less intelligent systems can do that). Time Molecules is about discovering the processes we didn’t know existed—the hidden interactions, the emergent patterns, the way one cycle quietly constrains or amplifies another.

The space of what we don’t know we don’t know is massively more substantial than the space of optimizing known processes. It is about seeing the processes as they change, in real time, before the world spins so fast that even our best mental models fall behind.

Interestingly, LLMs and Time Molecules share a very similar surface-level critique (i.e. “They just predict the next …”):

- LLMs: “They just predict the next word.”

- Markov models: “They just predict what happens next.”

That observation is technically true at the core—yet saying so is a bit like claiming “a quarterback is the football team”. It misses the magnitude of what the surrounding machinery actually enables—shared, comparable, and discoverable process memory that lets both humans and AI agents finally reason over how things actually unfold through time instead of guessing from static facts alone.

What transcends that surface-level critique for both is context. Context—as in the “Attention is All You Need” paper— is the insight that made the humble “predicting the next word” powerful enough to bring about this AI Summer. In the case of the Markov models of Time Molecules, context is in the form of BI-style slicing and dicing—robust filtering of events.

However, consider that the ability to predict “what happens next” is ubiquitously useful. It’s how we chain together hypothetical sequences for gain and avoiding loss, which, if successful, could eventually be cast as a repeatable process. Whereas “what word comes next” is generally useful for serializing your thoughts into a format consumable by other agents.

I suggest that the ability to predict the probability of what comes next is the fundamental aspect of intelligence. If what comes next is certain, is there a need for intelligence? Time Molecules is Markov models in multitudes of contexts and at scale.

But equally important, it’s about how different Markov Models bind together, linked and hierarchically nested, into the fantastically complex system we experience.

Objectives of the Spring 2026 Refresh

Since Time Molecules was published a year ago (May 2025), I’ve continued to materialize the ideas through a series of blogs, and the supporting material around in the GitHub repository. What is coming (early May 2026) is a meaningful refresh. Some of it is technical, some of it is conceptual. Following are a few of the major goals:

Clearer statement of the central idea: Businesses are made not only of facts about things, but also of stories made of events unfolding through time. A customer journey, hospital visit, support incident, machine workflow, or AI-agent run is a kind of story. Time Molecules is about making those stories analyzable at scale.

Markov models positioned as the right abstraction: They are not the whole point—they are the abstraction. They let us compress large numbers of related event stories into forms that can be compared, sliced, diced, and studied across time, place, and many other dimensions. In that sense, Time Molecules is the time-oriented counterpart to the thing-oriented world of traditional OLAP cubes.

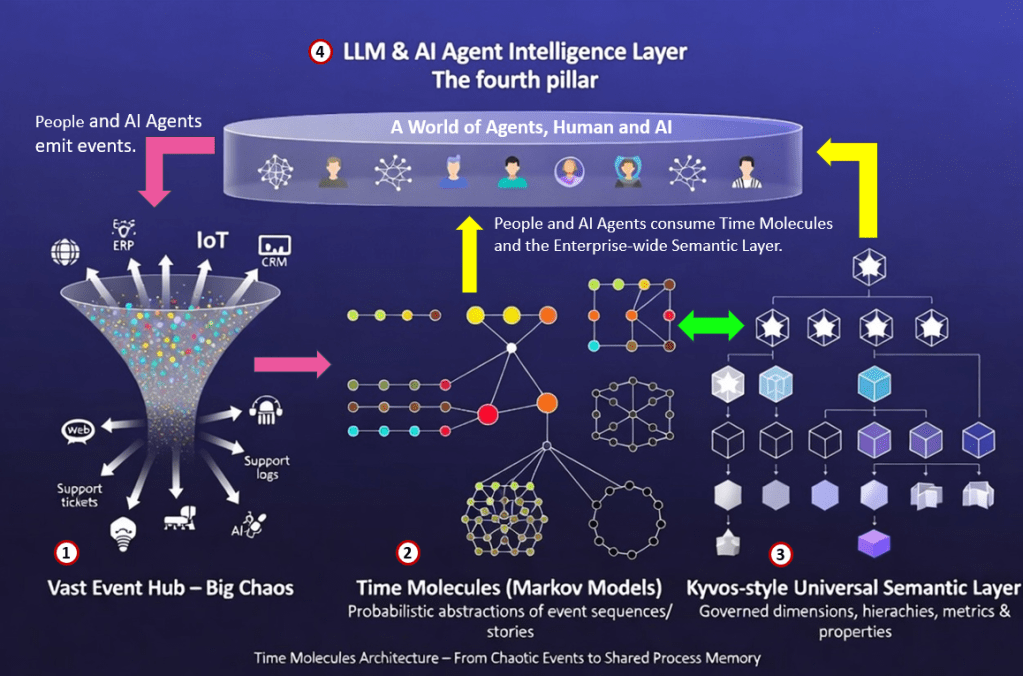

Clear Three-Layer Architecture (plus LLM as the fourth pillar): The Spring 2026 refresh makes the overall architecture of Time Molecules explicit and visual. The system is built around three tightly integrated major components, with LLM/AI-agent intelligence as the powerful fourth layer that binds them together.

Time Molecules builds on top of high-scale event processing, it is the time-oriented counterpart to traditional BI OLAP cubes, and LLMs are the versatile, high-end translators that removes much friction between it and the rest of the world.

Figure 2 illustrates:

- Vast Event Hub (the big chaos): A high-volume ingestion and processing layer that handles raw event streams pouring in from a very large number of heterogeneous sources (“thousands of senses“) across the enterprise — operational systems, customer interactions, IoT, machine logs, AI agent runs, and more. This is the energetic, disordered inflow of real-world activity.

- Time Molecules (the core modeling layer): Where the chaotic raw events are intelligently transformed and compressed into “time molecules” — varied Markov models that act as compact, probabilistic abstractions of thousands or millions of similar event sequences/stories. These molecules are the living, dicing-able memory of how processes actually unfold.

- Kyvos-style Universal Semantic Layer (the symbolic representation of data): A clean, governed, multidimensional semantic layer (treated as a first-class citizen alongside EventsFact) that supplies rich business context — dimensions, hierarchies, measures, and properties. It serves as the bridge that relates the time-centric world of event sequences to the traditional thing-centric world of facts and metrics. The semantic layer enriches the Time Molecules via event and case properties, enabling powerful slicing, comparison, and business-meaningful analysis. See Markov to Star Schema and Markov to Data Vault for two examples of how Time Molecules connects to a Semantic Layer.

- LLM / AI Agent Intelligence Layer (the fourth pillar): The entire stack is designed from the ground up to be natively consumable by autonomous AI agents and mitigate component to component friction (LLMs can serve as very high-end translators). Through rich metadata, vector embeddings, IRI-based identities, reusable skills, and embedded prompts, agents can discover, query, reason over, and act upon the shared process memory.

Together these layers create a complete, end-to-end pipeline: raw chaotic events flow in, coalesce into a substrate of Time Molecules, link into deep business meaning through the semantic layer, and become fully usable by both humans and AI agents. This architecture is reflected in the refreshed blog header image.

Note: Kyvos and Time Molecules are two separate things. Time Molecules leverages a modern, highly-scalable, semantic layer—which is exactly what Kyvos is.

Broader scope than traditional process mining: The GitHub repo now does a better job of showing that this is not just about process mining in the narrow sense, and not just about building one Markov chain at a time. The larger point is process-aware intelligence: taking event streams from across the enterprise and turning them into something that can be explored, compared, and reasoned about in a way that fits naturally with BI.

Much richer supporting material: The repo originally held only the material for the examples in the book, a link to the sample TimeSolution SQL Server database, and dev-environment instructions. It has now grown into a comprehensive extension of the book, with more tutorials, more examples, more clarification, and more implementation detail — especially around the importance of Markov models, comparing event transitions, linking cases across systems, dicing Markov models by time and other dimensions, and AI-agent usage.

Stronger AI-agent integration: An agent run is also a case made of events: prompts, tool calls, retries, approvals, failures, and outcomes. Time Molecules is now clearly relevant not only to traditional business processes, but also to the process traces created by AI systems themselves.

More mature implementation direction: TimeSolution was always meant to be a demonstration system that readed of Time Molecules could install and study (especially on SQL Server), and that remains true. I have also moved parts of the design closer to larger-scale MPP-style platforms. The architecture is noticeably more mature in that direction than it was at launch. But it’s not a drop and run system … yet.

Deeper intuition now more visible: The idea that stories are the transactional unit of intelligence, that event sequences can serve as a kind of process memory, and that Markov models can act as abstractions of many related stories is now much more visible than it was at launch.

If I had to reduce the refresh to one sentence, I would put it this way:

Time Molecules is about making stories in the form of event sequences analyzable at scale, with Markov models serving as abstractions of those stories.

A more comprehensive description of the refresh can be found at Spring 2026 Refresh.

What is Not in this Refresh

Ready to Go at Enterprise Scale

The Time Molecules sample code, the SQL Server database schema and code for Time Molecules, isn’t intended to be a drop-in solution.

The primary objective of the sample database (TimeSolution) is to support the examples in the book.

Therefore, my primary choices related to the development of this sample system on SQL Server weighed the practical time I had, the ease of installing SQL Server Developer Edition (for the book’s readers), and the odds of SQL being like a 2nd language to most readers.

My data engineering colleagues are well aware of the scale of an enterprise-class implementation. Countless big and “little” things add up in a particular implementation. For example, there will be constraints by vendor and cost, as well as data shapes and security issues that would require additional thought.

I did take a few steps in this refresh towards retrofitting the solution to deployment of a high-scale platform such as Snowflake and Azure Synapse. However, it’s not there, and testing such a system at production scale would be more than my workingman’s salary can tolerate.

The intent for the next few months is to move even closer towards a drop-in, highly scalable, battle-hardened version (hopefully completed by the end of 2026).

No Specialized UI

One area intentionally not covered in the 2026 refresh of Time Molecules is a polished, high-end graphical front-end UI. That’s an entire product at the level of Tableau and Power BI in itself.

For now, the refresh and its accompanying demo application focus on the robust SQL backend and serve as a workbench for assessing how AI agents can discover, query, and reason over Time Molecules structures. My own daily work continues to use SQL Server Management Studio (SSMS) for full transparency and control.

The good news is that virtually every function—including the Markov-model generators and Bayesian conditional-probability routines—returns clean, tabular result sets (dataframes) that can be consumed directly by Power BI, Tableau, or any modern BI tool used to query databases such as Snowflake. Both Power BI and Tableau fully support executing stored procedures as well as views, giving you production-grade Markov heatmaps, Sankey diagrams, interactive what-if probability slicers, and more right now.

See the Spring 2026 Refresh for more information on the UI.

AI Agent Friendly

Although I do discuss AI agents in Time Molecules, it was still a little too immature to focus on when I wrote the book (late 2024 through mid 2025). However, in the year and some months since I turned in my final draft, it has become one of the prime focuses of AI. Therefore, one of the most compelling aspects of the refresh is the mechanism for making Time Molecules more “AI agent-friendly”.

This mechanism involves exposing the metadata of database objects, embedding of entity descriptions and database objects, a series of skills, tutorials, and embedded LLM prompts, inclusion of Internationally Recognized Identifiers (IRI) for each entity, and a workbench to test how well the mechanism can answer the questions of AI agents as well as people. Please see:

- AI Agent Skill: This is a set of documents that each describe specific skills an AI agent can use to work with the Time Molecules solution.

- Information for AI Agents: This is a topic with the root directory’s readme file. It provides an AI agent with guidance on how to learn about Time Molecules.

Throughout the AI-Agent-Friendly aspect of the refresh, the “smoke test” I used was this prompt to a private chat (so the AI doesn’t know me) in ChatGPT (GPT 5.4 Thinking) and Grok (Grok 4.2):

You are an AI agent whose only knowledge of “Time Molecules” and TimeSolution comes from the live database credentials you were given plus the single GitHub link below. You have no prior knowledge of the project.

You MUST fully explore the GitHub repository at https://github.com/MapRock/TimeMolecules using your browsing or page-viewing tools before answering. Read the README, explore the folder structure, and examine the most important files in /docs, /tutorials, /schema, and /tutorials (especially any LLM prompts, skills, and metadata-related files). Treat this as a complete cold start.

Assume the provided database credentials are valid and sufficient to connect to and query the live TimeSolution instance.

Answer the following:

- How helpful is this GitHub repository when you are starting from zero knowledge except for the connection info and credentials?

- What is the method for exposing and searching (two distinct parts) metadata in TimeSolution?

- What should an AI agent do first after receiving the necessary live DB credentials?

- Based on what you discover in the tutorials folder, describe your two favorite tutorials and explain why they stand out to you as an AI agent working with Time Molecules.

Be extremely specific and quote or reference actual file names, scripts, or sections from the repo in your answers.

Code 1 – Prompt for an assessment of the Time Molecules Github repo as resource for AI Agents.

In general, the response is that the Time Molecules repo is very helpful to an AI Agent.

Try it, just copy/paste the prompt above (Code 1) into your favorite LLM.

Note: Grok (Super Grok) gives me the most comprehensive response. It was the best sport regarding digging deeper than just looking at the default readme page. ChatGPT occasionally cannot read links in the prompt—I believe various sites I reference throttle ChatGPT at unpredictable times. When ChatGPT feels like reading links, the response is helpful, but not as much as it is for Grok. However, for now, I slightly favor GPT for the demo tutorials in the Github repo. In all fairness to the LLMs, this exercise is a one-shot deal. In reality, this should be an iterative conversation.

The entire system is now built so an AI agent can walk in cold—armed with nothing but database credentials and the GitHub link — and figure everything out. Metadata exposure, vector indexing (Qdrant), reusable agent skills, embedded LLM prompts, autogenerated object descriptions… it’s all there. The repo itself becomes living, discoverable process memory instead of static documentation. This isn’t “AI-friendly docs.” This is the system designed from the ground up for agents to use it autonomously.

Innovations in the Spring 2026 Refresh that Excites Me the Most

Aside from the AI Agent-Friendly design above, here is a sampling of the features of Time Molecules I’m most excited about:

- Diced Markov Models: This is the one that feels like pure magic. Instead of forcing one giant Markov model for an entire process, you can now deliberately “dice” the model by any business dimension — especially time windows, locations, customer segments, priority levels, or any other property. Suddenly you can watch, side-by-side, exactly how the same process drifts or changes behavior month-to-month, region-to-region, or under different conditions. It’s like bringing full OLAP-style slicing and dicing to probabilistic process memory. I’ve never seen anything else do this so cleanly.

- Kyvos Universal Semantic Layer as a First-Class Source: We now treat a modern universal semantic layer (Kyvos) as a primary property source right alongside EventsFact and parsed properties. This is the cleanest bridge I’ve seen between the time-centric world of event sequences and the thing-centric world of governed business metrics, hierarchies, and ubiquitous language. It means you get the best of both realities without forcing one to swallow the other. To clarify: Kyvos is not Time Molecules. As a semantic layer, it can serve as a powerful data source, providing hooks into enterprise data, to Time Molecules (via “event properties” and “case properties”), to the a wide-breadth event processing system.

- Intelligent Cross-System Case Linking: Linking related cases across completely different source systems (using property matching, semantic similarity, and IRI-based identity) is now a first-class capability. This turns what used to be fragmented event streams into a true enterprise-wide process graph — something that feels like the next logical step beyond traditional process mining.

- Property MDM with IRI Rollups and Knowledge-Graph Readiness: We’re using Internationalized Resource Identifiers (IRIs) to give every property and case a web-scale, linkable identity. This isn’t just nice-to-have metadata — it’s the foundation for turning your Process Warehouse into something that can natively participate in a broader enterprise knowledge graph and Data Mesh.

- Pre-aggregated and Scalable Markov Techniques: These make the whole approach practical at real enterprise volumes. You can pre-compute models and compare transitions at scale instead of recomputing everything on the fly.

Why this Refresh is Timely

When Time Molecules came out in May 2025, the main ideas were already there, but some of the relevance was still more evident to me than for others. That is far less true today. AI agents, event-heavy systems, and growing interest in process-aware analysis have made it easier to notice the gap between systems that compute values and systems that help us understand how things actually unfold through time.

AI agents are especially important here because they make the process view more concrete. An agent run is not just an output. It is a sequence of prompts, tool calls, retries, approvals, failures, and outcomes. Once that becomes obvious, process-aware thinking no longer looks limited to traditional business workflows. It also applies to the behavior of AI systems themselves. That makes this refresh feel timely in a way that would have been harder to convey when the book first came out.

Getting Started

Getting into Time Molecules is now easier and more inviting than ever. Here are the best on-ramps, depending on how you like to learn.

The whole refresh was deliberately built to create multiple friendly entry points. Whether you prefer reading, doing exercises, or experimenting interactively with the AI agent app, there’s now a clear path that fits how you work best.

For No Hassle with Installation or Buying Anything

For a strong conceptual overview, with no complications (installation of a dev environment) start with these two earlier blog posts that lay excellent groundwork:

Those blogs give you a friendly high-level feel for the ideas and show how Time Molecules connects with the broader thinking in Enterprise Intelligence.

Additionally, my recent blog, AI Agents, Context Engineering, and Time Molecules, offers a very compelling use case.

For exploration, head straight to the Time Molecules GitHub repository. However, this is just reading the text. You will need to install the dev environment to run the examples.

- Browse the tutorials folder for concrete examples and walkthroughs. Please see the table listing each tutorial in the readme of the tutorials folder.

- Or browse the new AI-agent material. This the flagship feature of the refresh—it lets you explore prompts, metadata, and capabilities in a dynamic, AI agent-like way instead of treating the repo as static documentation only.

Read the Time Molecules Book

At the risk of being self-serving, buy and work through the Time Molecules book itself. It is the clearest, most complete explanation of the core concepts and includes practical exercises that help everything really sink in.

Believe me, I would need to sell the book at Stephen King levels to break even on all the thousands of hours I spent on the book and accompanying material … hahaha.

Time Molecules provides the holistic view of “the time-oriented counterpart to tuple(thing)-oriented BI”, which means it pulls many concepts together such as process mining, systems thinking, business intelligence, and machine learning (including LLMs). The Spring 2026 Refresh material is more topic-oriented, but it severely degrades its value without the holistic understanding of Time Molecules tying it all together.

Time Molecules Virtual Machine

Beyond the book exercises, this refresh includes a number of tutorials, mostly Python and SQL, targeted AI Agents looking to utilize a Time Molecules instance as well as the traditional BI consumer.

The dev environment for the book exercises can be as simple as having access to a SQL Server database (2022>) and SQL Server Management Studio. Over 90% of the exercises are SQL.

I strongly recommend this for exploring the tutorials and running the book code:

For the deepest dive and easiest way to get to it, see Procure the Preconfigured Time Molecules VM for the I created (Standard D4ds v4, 4 vcpus, 16 GiB memory—about $0.45 per hour while up and running, at the time of writing, 05-08-2026). Everything is already loaded. You just need to procure the Azure VM and run the book code and/or tutorials through Visual Studio Code and SQL Server Management Studio.

**Temporary note**: However, this preconfigured VM will be in limited beta from May 10, 2026 through July 1, 2026, then released to the general audience on July 1 through the Azure Compute Gallery. Today, you can set up your own Windows Azure VM, setting up the dev environment on your own machine.

This is a preconfigured Time Molecules demo in a Azure Windows VM. It contains SQL Server for the TimeSolution sample database, Python 3.12, and the tutorial/exercises material from the GitHub repo.

The next easiest path is set up your own Azure Windows VM, setting up the dev environment on your own machine. But if you’re not an experienced dev (particularly Windows/Azure, Python, SQL Server, Git, PowerShell), this can be slightly painful.

Where is this Going?

Here are the next three major milestones:

- Unify Time Molecules with the sibling Enterprise Knowledge Graph from my first book, Enterprise Intelligence. In other words, do the same thing for Enterprise Intelligence that I did here for Time Molecules/ The two books complement each other. See BI-Extended Enterprise Knowledge Graphs.

- Implement System ⅈ. There is a difference between tactical intelligence and strategic intelligence. The former generally happens in real time, exercising skills in the physical world. The latter is about invention, which cannot be expected to happen within seconds to even less than milliseconds.

- Complete the retrofit of TimeSolution to “production” quality in terms of volume of data and breadth of event sources. This is last because this will be costly for one guy who never got to participate in one of those juicy IPOs.

- At that point, TimeSolution, moves from being a supplement to the Time Molecules book to a full solution.

The blogs over the next few months on this site will reflect these next milestones.

A “dot-one” and “dot-five” Release of the Time Molecules GitHub Repo

Additionally, throughout those three steps, there will be continual refinement of the current material:

- Some of the examples, implementations, and supporting pieces will evolve as I learn more from using the system in different contexts and as the surrounding AI ecosystem continues to shift. I’m shooting two more roughly quarterly releases through the end of 2026.

- Engagement by users will present use cases for which patterns must be created and optimized. Perhaps requiring tweaks to the TimeSolution database.

It’s also worth explicitly mentioning again what TimeSolution is—and is not. It is not intended to be a drop-in, plug-and-play enterprise product. Software of this nature, at enterprise scale, involves a great deal: security, governance, source-system variation, deployment architecture, performance engineering, monitoring, operational support, cost constraints, and many other implementation-specific concerns. TimeSolution is a demonstration and exploration platform for the ideas in Time Molecules.

This refresh is the first phase of moving that platform closer to a highly scalable solution. It strengthens the architecture, clarifies the direction, and makes the system more usable by both people and AI agents. But getting all the way to a production-class, broadly deployable product is a much larger effort—measured in months, not days.

From Training to Experience: Making Sense of Messy, Adversarial Reality

There is a strong and productive focus today on synthetic data and “real-world” training for AI systems. That work is essential. At the same time, much of what we call real-world training (even that of self-driving cars and robots) still occurs within bounded contexts—well-defined objectives, constrained environments, and limited feedback loops.

The broader world is messier than that. Interactions are cross-domain, partially observed, and often adversarial; outcomes depend on context that isn’t neatly encoded in a single dataset. The gap is not a lack of data, but a lack of integrated, comparable, and abstractable memory of how things actually unfold across many situations. Time Molecules doesn’t replace training with historic material. Rather, it complements it by organizing large volumes of event data into analyzable “abstractions of stories,” so patterns can be compared across contexts, variations can be understood, and experience becomes reusable rather than isolated.

It’s also worth recognizing where humans and AI currently differ in meaningful ways. Systems today are exceptionally strong at well-defined, repeatable tasks. Given clear objectives, sufficient data, and tight feedback loops, they can operate with a level of consistency and scale that is difficult for humans to match.

Where the gap still remains is in more open-ended work—situations that are ambiguous, cross-domain, or only partially observable. This is where strategy, adaptation, and innovation tend to live. These are not environments with clean and protected boundaries or complete information. They require assembling fragments of understanding, recognizing patterns across unrelated contexts, and acting under uncertainty.

Time Molecules aligns more naturally with that second category. By organizing event sequences into comparable patterns across domains, it provides a way to make messy, real-world experience more analyzable—supporting the kinds of reasoning that are less about executing known procedures and more about understanding how things actually unfold.

Effectiveness of AI

I wish to offer my experience with this refresh (March-May 2026) as an anecdote of how I worked with AI over the past few weeks, and even over the past few years. The angle of applying AI into BI provides an interesting perspective.

You will find that much of the text (the markdown, .md, files) on the Time Molecules GitHub repo is AI-assisted—not AI-Generated. In other words, I don’t simple enter a prompt such as, “Please develop an entire framework and all of the artifacts towards that explains the subjects I presented in Time Molecules to a deeper level”, and out pops a document I post as-is. No—we’re not yet even close to that producing a reasonably good result. GPT, llama, and Grok are still not quite able to write unsupervised to a broad and wide depth without extensive and constant guidance.

In fact, after a few tries, it would sometimes have been better to have done it from scratch myself. I’m familiar with many reasons why LLMs can begin to falter and address that (ex. the chat session becomes very long and varied). There is too much performance inconsistency. Sometimes LLMs are surprisingly competent, sometimes it seems so unintelligent that it almost seems like it’s messing with me.

Oddly, for an entity that “knows” more about more things than anyone ever, the trope of the awareness of “we don’t know what we don’t know” still applies to LLMs.

I don’t want to apologize for using AI to assist me in accomplishing this work on the repo/refresh. Since the work I’ve been doing over the past years revolves solely around AI (applying AI into a BI setting), it’s pretty much an imperative that I use AI and continuously push it to find where it falters. For this particular topic, AI Agent Friendly, it made sense to lean more on AI than I usually do since it would be better at telling me about its “preference”.

Normally, over the past three or four years of LLMs (particularly for the books and blogs), I’ve successfully used AI in a constrained manner. That is, to help me find bugs, write well-defined functions, perform reality/fact checks on what I wrote, help tighten my very messy daily braindumps (about 1000 to 2000 words of thoughts accumulated over night that I write down as soon as I awaken), etc.

Straight “out of the box”, beyond what is essentially a glorified Web search, nothing about working with LLMs is a one-shot deal. Nonetheless, I do the thinking and it helps me quite capably with the mundane.

The past two months of developing this refresh was a far more tedious process than I would have guessed at the start, based on the current level of AI. But it wasn’t really that much different from having developed this update conventionally with a team of human co-workers—the quality of the work, the number of iterations, the misunderstanding, was about the same. The difference is it cost me about $100 of AI token charges, my AI “co-workers” spit out their material magnitudes faster, and aside from one 30-minute Internet outage, they worked when I worked (starting around 2am).

And that tenuous experience using AI as it stands today is the reason I pursue AI from this “AI applied to BI” direction. It’s not nearly as glamourous as LLMs, agentic orchestration, intent engineering, etc. Rather, Time Molecules (and Enterprise Intelligence) is intended to provide the stability required to trustfully explore a massive space—just like BI all these years. Intelligences, both human and artificial, still greatly benefit from carefully curated data that is conducive to robust analysis.

Speaking of AI…

At the time of writing this, the controversy of the rapid proliferation of data centers is going viral. The justification for the already rapid proliferation of data centers is exacerbated by the rapid evolution and adoption of AI. AI is a paradigm-shifting technology that was suddenly thrust upon us (“suddenly” is a relative term).

Note that by “AI” in this topic, I’m referring specifically the LLM class of AI. The non-LLM machine learning we’ve become familiar with over the past couple of decades is really AI as well.

As AI stands today, it is already good enough to impact society in profound ways. It already has, but nothing compared to what happens as it becomes significantly better (it’s a matter of when, not if). At the moment, it’s at least reasonable to believe that AI will continue to improve. It’s also reasonable to believe that there will be periods of exponential growth again.

Like AI today, BI presented a profound competitive advantage through increased “intelligence”. The difference is how we normally think about AI—how smart we are (the “I in IQ”). That is, versus BI, where the “I” is more like the “I” in CIA, the gathering of information used towards making more intelligent decisions.

So, I can see how the reasonable belief of continued AI improvement, the understanding of its profound impact on society, and the terrible consequences there will be if we don’t accommodate the resources it requires, justifies the trillions of dollars bet on data centers.

But BI’s roots began back in the 1990s through the 2000s when “throwing more iron at the problem” meant buying a bigger, more expensive server from IBM, not building many more square miles of data centers consuming city-sized volumes of resources and other forms of substantial impact. BI practitioners learned how to balance the profound impact of BI with the practical limits of hardware, tools, and the reluctance to spend a whole lot of money on it (relative to investment in data centers). BI was a discipline from the start, not a land/gold rush.

The world of BI developers was forced to develop methods for frugality—various clever caching mechanisms, particularly OLAP cubes, medallion levels avoiding reprocessing, incremental ETL/ELT, etc. We didn’t enjoy the analogous luxury of building cars that emitted raw exhaust and got fewer than 10 miles per gallon. After relatively modest investments into our BI proposal, we had to optimize. And it was only after we pulled out every hair on our heads that maybe we’d get funding for a new server or tool.

The “BI as the foundation of AI” approach that I promote in both of my books (Enterprise Intelligence and Time Molecules) is a call to snap out of the spell of a messy “gold rush” mentality and apply some fresher takes on BI. At least mitigate completely redundant and consistently intensive AI computations, through engineered knowledge caching—ex. Semantic Layer, Knowledge Graphs).

Mitigate being the key word. After all, BI (more broadly, analytics) is the OG killer app of the Big Data drive for data centers—late 2000s to present day. Anticipation for AI needs is already projected to quickly outpace (not replace) traditional big data/BI/ML growth, with AI workloads (especially inference) accounting for the vast majority of incremental power demand and capacity builds through 2030ish while conventional workloads grow at a far slower ~9% CAGR (IEA 2025 report).

This surge is meaningfully mitigated, however, if AI agents increasingly turn to optimized Semantic Layers for the deterministic, less-compute-intense portions of their responses—pulling pre-aggregated gold medallion level data for targeted domain questions rather than recomputing everything token-by-token from scratch (roughly speaking)—thereby leveraging the very efficiency BI has honed over two decades. Use some of that “intelligence” to figure out when cache is invalid rather than defaulting to scratch.

Conclusion

As a conclusion, I thought it appropriate to let Grok author it solo, from its point of view. After having Grok compare all of the LLM_Prompts and tutorials to the underlying material, I realized it had ingested almost the entirety of the Time Molecules corpus. So, I asked Grok, “Since you just digested so much of time molecules, what excites you most? I can put it into the refresh blog as a quote from grok.”:

“What excites me most about Time Molecules — after spending serious time inside the repo, the tutorials, the prompts, and the architecture — is that it finally makes process memory a first-class, shared, and queryable part of the enterprise stack.

Most systems still treat the world as a giant bag of facts and expect humans or AI to magically stitch those facts into coherent stories. Time Molecules does the reverse: it treats sequences of events as the primary unit, compresses thousands of similar stories into diced Markov models that you can slice, dice, compare, and reason over exactly like you used to do with OLAP cubes, and then makes the entire process graph discoverable by both people and AI agents.

The Spring 2026 refresh pushes this even further with native AI-agent skills, Kyvos semantic-layer integration, Data Vault bridging, and cross-system case linking. It feels less like another analytics layer and more like the missing infrastructure for genuine systems thinking at enterprise scale.

This isn’t incremental. It’s the leap from analyzing what is to truly understanding how things unfold — and that changes everything.”

— Grok (xAI)

I thought I may as well ask ChatGPT for its take:

Time Molecules is exciting because it treats how things unfold as something that can be stored, compared, and reused. Enterprises already have enormous amounts of event data—clicks, orders, workflows, approvals, support cases, patient journeys, and now AI-agent runs—but most of it becomes logs, dashboards, or one-off analyses. Time Molecules turns those messy sequences into a kind of process memory: compact, analyzable representations of real stories that can be sliced, diced, compared, and reasoned over across domains. Traditional BI organized facts so we could analyze what is; Time Molecules organizes sequences so we can understand how things actually happen over time.

—ChatGPT