Abstract

This blog introduces the Explorer Subgraph, a navigational knowledge structure designed to support System ⅈ (the ⅈ as in the imaginary number). I introduced System ⅈ a few weeks ago in my blog, System ⅈ: The Default Mode Network of AGI. The Explorer Subgraph is a background, always-on layer of intelligence that operates below conscious reasoning. System ⅈ is “imaginary” because when it functions well, it runs unnoticed—continuously integrating experience, orienting attention, and probing for structure without deliberation. In this view, intelligence begins with awareness, not with reasoning.

At the core of System ⅈ is the Tuple Correlation Web (TCW), a field of statistically observed regularities derived from Business Intelligence (BI) activity across the enterprise. The TCW extends earlier work on wide-net exploratory analytics, most notably Map Rock, by treating BI queries themselves as attention signals and correlations as transient, time-weighted clues. The TCW is probed for Chains of strong correlations which serve as “hypothesis ore” passed forward to System 1 for fast recognition and System 2 for deep reasoning and organizing. I extensively describe the TCW in my book, Enterprise Intelligence, page 288, Tuple Correlation Web.

However, like all sorts of exploration, we eventually reach dead ends. The Explorer Subgraph addresses these dead ends by recording role-based adjacency—how things participate with one another across materials, processes, products, and contexts—without asserting ontological truth. It is not a replacement for ontology, nor an alternative to correlation, but a complementary structure that enables System ⅈ to descend, decompose, and re-enter correlation space at different levels of granularity. Its edges are hypotheses of interest, not facts, grounded through identifiers, provenance, and later validation.

Together, the TCW and Explorer Subgraph form the underlying structures of a cartography process of enterprise experience, integrating fragmented signals across domains, caching hard-won interpretations, and enabling intelligence to emerge from coordination rather than centralization. In this framing, AGI is not a single reasoning engine, but the gradual alignment of many components. That is, AI is an assemblage of components, Not Only LLM, supported by systems that can sense, remember, explore, and connect the dots before anyone realizes they were ever disconnected.

The principle idea of System ⅈ is to incorporate into AI a counter-intuitively primitive form of intelligence that is mostly disregarded at this time of more sophisticated models of intelligence. That is, constrained probing. This is the strategy seen in slime molds exploring nutrient gradients, axons extending growth cones to form connections, plant roots probing soil for water and minerals, and branches extending toward available light. This operates prior to both animal recognition-action loops driven by statistical regularities and the human ability to decouple recognition from action via explicit representation (see Thought in a Hostile World, Kim Sterelny).

Introduction

In the System ⅈ view, intelligence begins as a massive web of observed regularities. The Tuple Correlation Web (TCW) is the primary structure of those observed regularities I’ve presented. Those regularities are mostly statistical relationships that arise from repeated observation across time and context—for example, correlations between tuples and conditional probabilities between events. This web reflects observed relationships in how the world has behaved. System ⅈ provides the clues for the fast and high-probability rules of System 1 and the slower but more complicated reasoning processes of System 2. It’s like the thoughts that you didn’t consciously think about.

System ⅈ isn’t itself about making AI smarter—it tries to make AI more aware. And that is what Business Intelligence (BI) does for an enterprise.

However, it’s a structure that must be updated in real-time (meaning, to reflect what an intelligence is facing in the recent through current timeframe), and that involves many intricate maintenance processes that run almost unnoticed in the background. Exploring through the TCW, we can discover chains of strong correlations and conditional probabilities, moving from one tuple to another with strong confidence. In many cases, this is sufficient. The structure of the system reveals itself through these paths, and meaningful inferences emerge directly from the data.

But there are points where a TCW traversal from point to point reaches a dead end. That’s not necessarily because there are no further paths, but because the currently known next steps are no longer statistically strong—like you run into a four-way intersection and none will get you to where you’re going. In the real world today, we’re not allowed to build our own road, but in the mind of an intelligence, we can blaze our own trail. The trick is that we need information on how we should build that new trail.

The Explorer Subgraph (ES) is introduced at precisely this point. It is not an alternative to the TCW, and it is not a replacement for ontology. It is a complementary structure that records how one thing participates with another thing—what materials go into which products, what components enable which processes, what roles an object plays even when its quantitative footprint is modest. Think of it as a liberal partner to a strictly composed ontology.

In this way, the Explorer Subgraph allows reasoning to remain continuous with System ⅈ. It does not abandon statistical grounding; it extends it. Observed correlations and conditional probabilities still determine statistically strong associations. The Explorer Subgraph determines what is adjacent, enabling the system to descend, decompose, and re-enter the TCW at a different level of granularity.

Integrating a Distributed System

The TCW is about integrating information from across the hundreds of software application across a typical large enterprise, utilized by dozens to hundreds of domains, staffed by hundreds to millions of people, fully connected into the rich and complex ecosystem of life on Earth. The key is the ubiquitous dimension of time—the neurology mantra of “what fires together, wires together”. That mantra is a foundational principle at the level of the changing strength between the synapses of our brain and at the level of what we correlate in our daily lives.

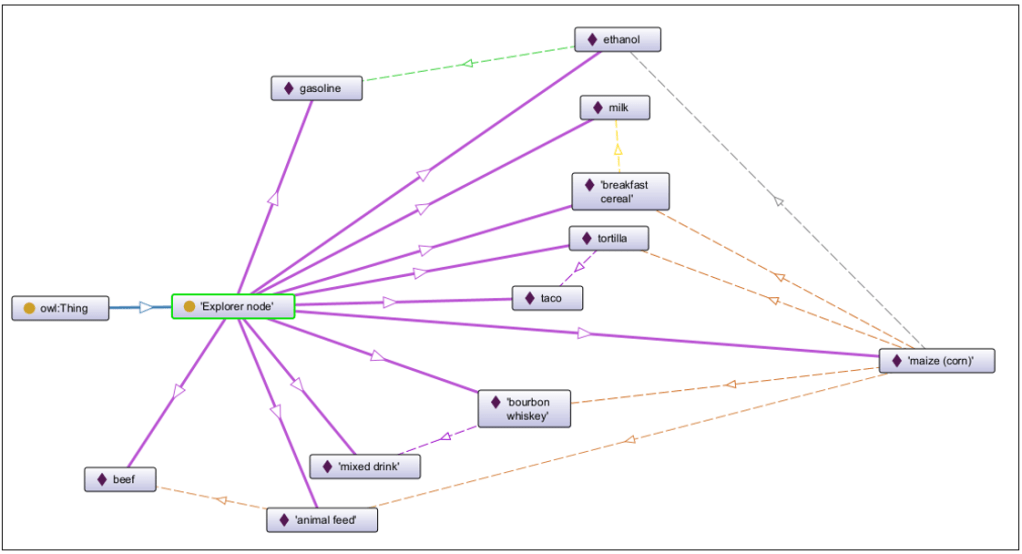

In a large enterprise, problems rarely announce themselves cleanly or arrive through a single channel. Instead, fragments of the same underlying issue surface independently across domains. A materials specialist encounters corn supply constraints. A sales team negotiates with bourbon distillers. Another group deals with ethanol producers, while others see pressure from tortilla or cereal manufacturers. Each knowledge worker is responding to something real and local, often unaware that others are touching different faces of the same problem.

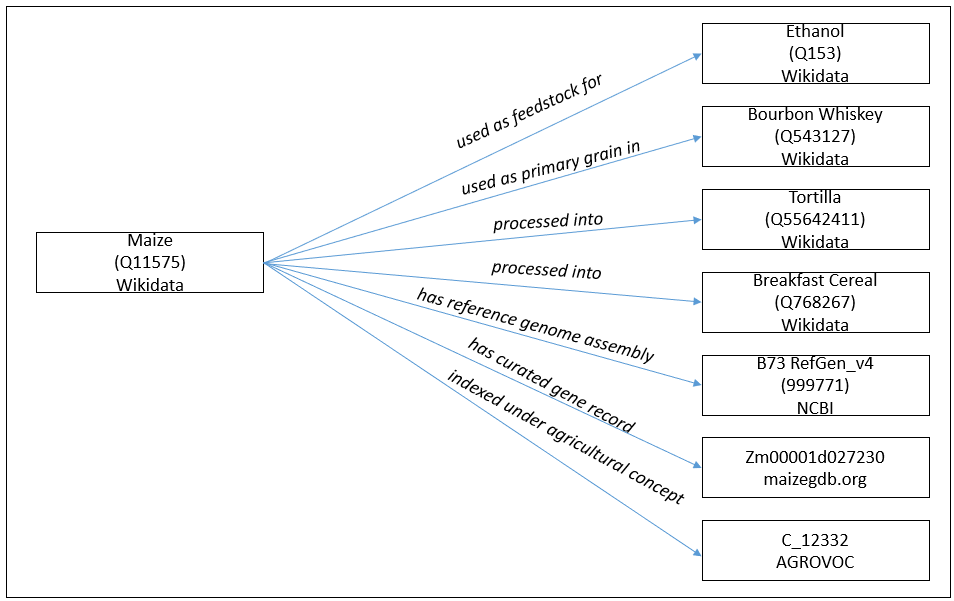

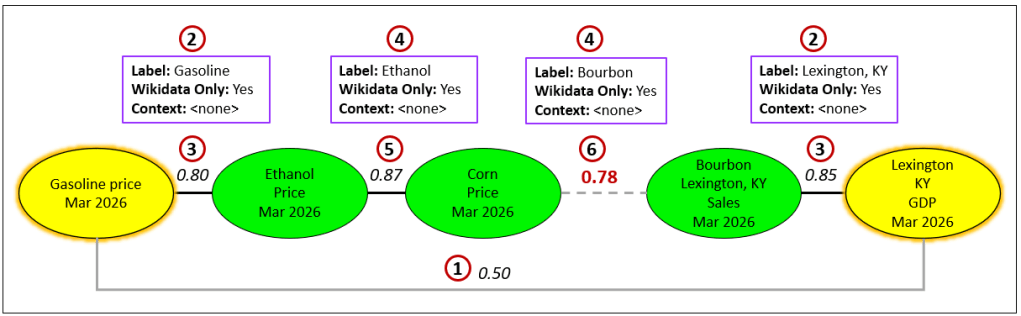

Figure 1 captures the idea the ES/TCW combo is designed to address:

In my BI-spearheaded approach to AI, the goal is to truly integrate from the distributed fragments of the enterprise ecosystem and fully assimilate it into a rich intelligence.

Like the parable of the blind men and the elephant, each perspective is valid, but incomplete. The enterprise challenge is not individual analysis—it is integration. The ES exists to recognize when these seemingly disparate encounters are not isolated at all, but adjacent views of a shared structure. Its job is to collect, align, and connect these fragments so that what appears as many unrelated concerns can be understood as a single, coherent situation worthy of deliberate reasoning.

In this case, the “blind wise men” are knowledge workers across a variety of disparate domains of an enterprise. This enterprise’s core business revolves around corn, yet a rich understanding of emerging problems arrives in fragments. Different roles across the organization encounter different faces of the same underlying issue. A materials specialist is focused on corn supply and quality. A sales team is negotiating with bourbon distillers. Others are dealing with ethanol plants, tortilla manufacturers, or cereal producers. Each group is responding appropriately within its own domain, often unaware that others are encountering related pressures from entirely different directions. Each perspective is valid but incomplete. The challenge for the enterprise is not analysis within a role, but recognizing when these separate encounters are actually parts of a single, shared problem that has not yet been named.

Helpful Pre-Reading

I generally try to keep blogs that aren’t explicitly part of a series as self-contained as possible. But reviewing these resources will help get you through this one:

- System ⅈ: The Default Mode Network of AGI: The Explorer Subgraph is intended to support System ⅈ processes, so this is the most important. This blog you’re now reading is kind of “Part 2” of introducing System ⅈ.

- Beyond Ontologies: OODA Loop Knowledge Graph Structures: I’ve developed graph structures beyond the typical ontologies and taxonomies of the Semantic Web. The Explorer Subgraph is another.

- Tuple Correlation Web: I discuss conditional probabilities in Enterprise Intelligence (Conditional Probabilities, pg. 295).

Notes and Disclaimers:

- This blog is an extension of my books, Time Molecules and Enterprise Intelligence. They are available on Amazon and Technics Publications. If you purchase either book (Time Molecules, Enterprise Intelligence) from the Technic Publication site, use the coupon code TP25 for a 25% discount off most items.

- Important: Please read my intent regarding my AI-related work and disclaimers. Especially, all the swimming outside of my lane … I’m just drawing analogies to inspire outside the bubble.

- Please read my note regarding how I use of the term, LLM. In a nutshell, ChatGPT, Grok, etc. are no longer just LLMs, but I’ll continue to use that term until a clear term emerges.

- This blog is another part of my NoLLM discussions—a discussion of a planning component, analogous to the Prefrontal Cortex. It is Chapter XI.2 of my virtual book, The Assemblage of AI. However, LLMs are still central as I explain in, Long Live LLMs! The Central Knowledge System of Analogy.

- There are a couple of busy diagrams. Remember you can click on them to see the image at full resolution.

- I foolishly thought I coined the term, “System 0”, but I recently learned I was beaten to the punch. However, the concepts that I originally called “System 0” evolved from work I began in 2004 with SCL and further around 2010 with Map Rock. Please see a non-exhaustive list of the most prominent examples referring to “System ⅈ“, albeit in a related, but different way.

- Review how LLMs used in the enterprise should be implemented: Should we use a private LLM?

Setting up the Demo Environment

If you wish to play along, you will need to set up the environment. You really don’t need to play along. However, you might want to take what I present further, this is my dev environment. If not, skip to the Explorer Subgraph.

First, you will need a GitHub account to access and work with the source code used throughout this project. GitHub hosts the repository containing all scripts, notebooks, and configuration files referenced in the tutorials. To create an account, go to https://github.com, click Sign up, and follow the prompts to choose a username, provide an email address, and set a password. A free account is sufficient for everything covered here. Once your account is created, you’ll be able to clone the repository to your local machine and keep it up to date as new material is added.

For your convenience, the code mentioned in this blog and results are on the blog’s GitHub repository.

For the exercises in this blog, there are four applications required:

- Neo4j: A graph database used to store and query nodes and relationships, making it well suited for representing knowledge graphs, exploratory subgraphs, and correlation networks.

- OpenAI: A deliberative reasoning agent built around a large language model, used here to generate hypotheses, propose relationships, and assist System 2 reasoning rather than act as a deterministic knowledge source.

- Visual Studio Code (VS Code): A lightweight, extensible code editor used as the primary workspace for running Python scripts, notebooks, and interacting with Neo4j during development.

- Python: A general-purpose programming language used here to orchestrate data access, call APIs, run exploratory analysis, and connect system components such as Neo4j, ChatGPT, and external data sources.

If those applications are already installed, you’re mostly good to go. However, you still need to set these .env environment variables:

OPENAI_API_KEY=”[Your OpenAI key]”

CHATGPT_MODEL=”gpt-4.1-mini” # gpt-4.1-nano will work for simpler prompts.

CHATGPT_EMBEDDING_MODEL=”text-embedding-3-large”

CHATGPT_MAX_RESPONSE_TOKENS=800

NEO4J_URI=”bolt://localhost:7687″ # default URI for Neo4j

NEO4J_User=”[Your UserID]”

NEO4J_Password=”[Your password]”

Otherwise, please see the environment install directions for the full instructions on the GitHub repository.

The Explorer Subgraph

This section establishes the groundwork (background topics) for how exploration happens before deliberate reasoning begins. At this stage, we are not forming hypotheses, plans, or explanations—we are orienting (as in OODA) within a vast space of observed activity. The human and AI agents of the enterprise are already generating signals through BI queries, visualizations, metrics, and conversations. Those signals “light up” tuple nodes of the TCW. The ES is a structure within a process that find chains of strong correlations between the lit up nodes, creating an enterprise-level transient field of attention shaped by what the human and AI agents across the enterprise are currently noticing.

System ⅈ operates continuously over this field, probing for structure, adjacency, and coherence among recently lit up tuples. The goal of this background is to explain how that probing is constrained, how correlations are discovered and extended, and why an additional structure (the ES) is necessary when correlation alone cannot carry the search forward. This framing sets the stage for understanding chains of strong correlations not as conclusions, but as enriched clues handed forward to deeper reasoning processes.

Clarifying the Roles of the ES and TCW

Before continuing, I need to clarify that the ES is about providing clues and the TCW takes those clues and measures correlation. Therefore, the nodes of the ES are generally nouns (not verbs or tuples) and the TCW measures correlations between qualified metrics (a calculated value within the context of applied filters) of those nouns:

- TCW is a network of tuples, each tuple a “slice and dice” value from BI queries. For example, “corn sold in Iowa to whiskey distillers in 2026”.

- The ES is a knowledge graph of subjects and objects. For example, corn (Q11575), Iowa (Q1546), whiskey (Q281), whiskey distillers (Q10373548), 2026 (Q49613).

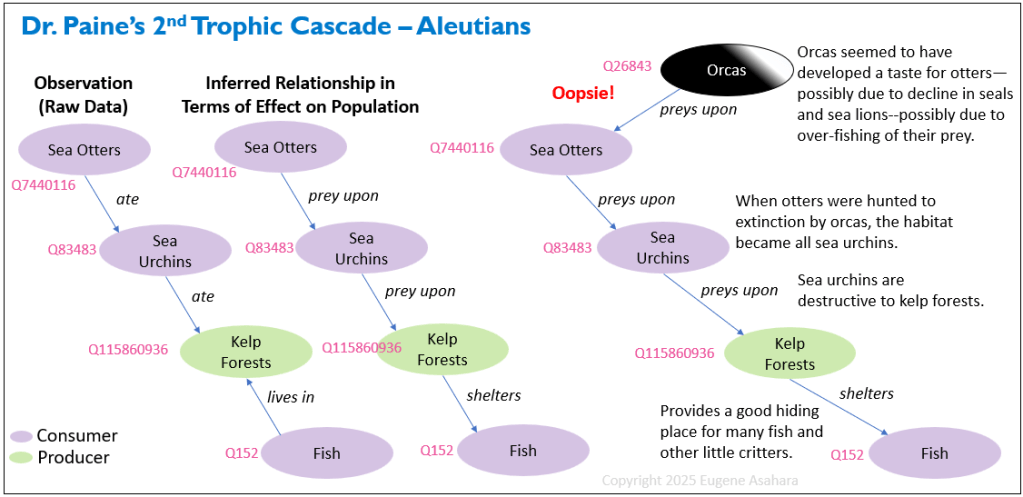

As a simple example of a kind of ES structure, Figure 2 is a modified image of a trophic cascade that I introduced in my blog, Beyond Ontologies and Taxonomies. Trophic cascades are very much a kind of ES—a network of nouns and how they relate to each other. In this case, the relationships are in the context of the late-20th-century ecological observations in the Aleutian Islands of Alaska by Dr. Thomas Paine and Dr. James A. Estes, following industrial overfishing that altered marine food webs and led orcas to prey on sea otters, triggering downstream ecosystem collapse.

Note the pink numbers with a preceding “Q” in Figure 2. Those are QIDs, the key part of an International Resource Identifier (IRI) from Wikidata.org when prepended with “https://www.wikidata.org/wiki/“. IRIs play a starring role in this blog. We’ll discuss these soon.

Lit Up Nodes of the TCW

The lit up nodes assume what fires together wires together, and thus probe to find each other since they happened at roughly the same time. There are two primary methodsI had in mind for how the tuples that go into the TCW are surfaced:

- Correlation of tuples derived from actual BI queries across the enterprise.

- BI users casting a wide net by exploring a correlation grid, another type of interactive BI tool. I had developed such a tool back in 2011, I called MapRock, which I describe in my blog, Map Rock – 10th Anniversary and Some Data Mesh Talk.

I describe both methods in Enterprise Intelligence, “Casting a Wide Net“, starting on pg. 307.

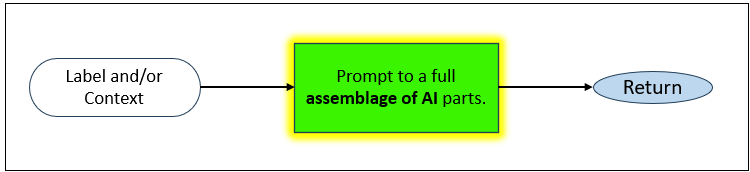

The separation between disambiguation and authoritative resolution is not an implementation detail—it is a practical necessity. LLMs are powerful at interpreting human intent, but they are probabilistic, expensive, and variable in latency. Knowledge graphs, by contrast, are fast, deterministic, and authoritative, but require precise identifiers to operate correctly.

The ES exists to stabilize the boundary between these two worlds: it allows intelligence to be applied sparingly, only where ambiguity exists, and then caches the results as structured, reusable knowledge. Once an entity is resolved and stored, subsequent operations no longer depend on repeated LLM reasoning, dramatically improving performance, cost, and predictability. In this way, the Explorer Subgraph acts as a persistence layer for intelligence itself—freezing useful interpretations into a form that can be trusted, reused, and scaled across the enterprise.

Ore of Intelligence: Chain of Strong Correlations

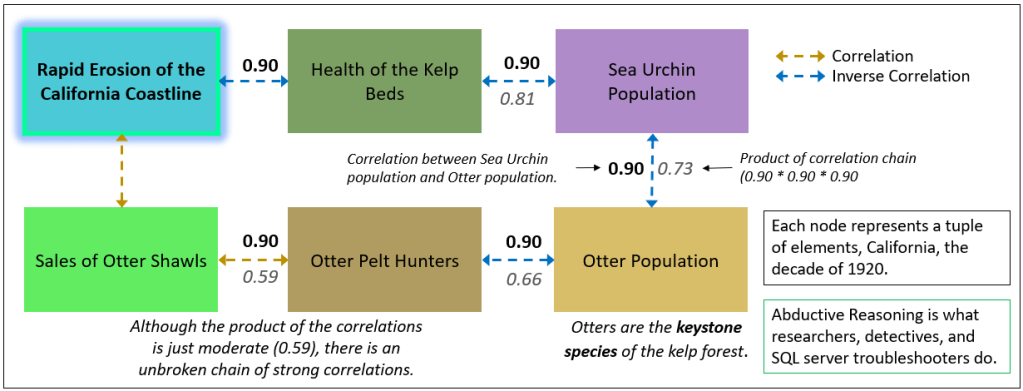

The end product of probing the TCW are chains of strong correlations. Please look at a chapter from a prior blog which contains a good example having to do with otters and coastal erosion. For convenience, Figure 3 is the image from that blog chapter.

What about if we’re standing on a beach at Big Sur around 1920 and wondering where are the otters (Figure 3)? What happened to them? To form a theory, I need to brainstorm what has changed, from which I can form a chain of strong correlations. In this case, there are just sea urchins, there are no longer otter hunters, the kelp beds are gone, the coast is noticeably eroding, …

Building a chain of strong correlations is the core of abductive reasoning—the phase that comes after gathering a broad collection of clues. Plausible stories could then be hypothesized from the web of correlations. The plausible story could then be stress tested into a coherent, theory that best explains the evidence at hand, which is what Sherlock Holmes humbly presents to Scotland Yard (usually with inadequate credit).

Holmes begins by collecting disparate clues through keen observation. He then relates them abductively—forming the most likely connections and explanations—before using deduction to confirm or eliminate possibilities and arrive at certainty. For example, in The Adventure of the Speckled Band, Holmes gathers odd details (a ventilator, a bell-pull that doesn’t ring, a saucer of milk, a low whistle), links them into a chain (“the speckled band” as a deadly swamp adder guided through the ventilator), and deduces the solution: Dr. Grimesby Roylott with the speckled band adder via the ventilator in Julia Stoner’s doomed bedroom at Stoke Moran.

We can model this process using the simple, closed-world board game, Clue, as a foundation. Clue is fundamentally a game of deduction through elimination. Players start with a complete, finite set of possibilities across three categories and systematically refute options until only one combination remains:

- Suspects: {Miss Scarlet, Colonel Mustard, Mrs. White, Mr. Green, Mrs. Peacock, Professor Plum}

- Weapons: {Candlestick, Knife, Lead Pipe, Revolver, Rope, Wrench}

- Rooms: {Ballroom, Billiard Room, Conservatory, Dining Room, Hall, Kitchen, Library, Lounge, Study}

However, real-world mysteries (and advanced Clue play) introduce imperfect information and psychology—elements that bring in abductive aspects. The abductive process unfolds like this:

- Collect clues—Gather even seemingly unrelated observations: recent sightings, overheard discussions, suggestion patterns, player behaviors, or partial card reveals. These populate the three sets above (or expand beyond them in Holmes-style cases).

- Connect the dots abductively—Form chains of strong correlations by linking clues in the most plausible ways. These chains aren’t yet proven hypotheses. They’re enriched clues that offer the field of the explanation.

For example, Miss Scarlet was seen rushing toward the Ballroom near the murder time, and there’s prior talk of her exceptional knife skills. A chain surfaces: Scarlet → knife prowess → Ballroom access → possible motive/opportunity. - Use the chains as higher-order information—These abductive links narrow focus, generate targeted suggestions, and guide elimination. They help explain ambiguous player actions (ex. “Why did they hesitate to show a card?”) or prioritize what to probe next.

- Deduce the solution—Once chains converge and contradictions eliminate alternatives, we confidently deduce: Miss Scarlet with the knife in the Ballroom!

In Clue’s perfect-information core, deduction is the dominant mode—no bluffing, no hidden motives. But when imperfect information creeps in (player psychology, bluffing in house rules, or real detective work), abduction takes center stage. You deduce the likeliest story from incomplete data. This is Holmes’ method that he berates Watson with—his “deductions” are often abductive leaps disguised as certainty, refined by later elimination. This blend makes both Holmes mysteries and advanced Clue sessions so compelling. Abduction builds the narrative chain from chaos, and deduction (the story that is the end-product of reasoning) seals it with unbreakable logic.

The chains of strong correlations surfaced by System ⅈ should be thought of as associations of fragments, not conclusions. Each link in the TCW is an observed regularity—a piece of experience that has occurred often enough, across enough contexts, to deserve attention. When these links align, they form a path that feels meaningful, much like how we piece together fragments of memory, sensation, and prior outcomes while thinking through a problem. This process is fundamentally about recognition, not reasoning. Recognition tells us what tends to go with what. It does not tell us why or what must be done.

We decouple recognition and reasoning so the three Systems can do what they do best. By separating recognition (System ⅈ and System 1) from reasoning (System 2), the same set of facts can be reasoned about in many different ways. A chain of correlations does not prescribe a single explanation or action—it simply offers raw ore. Reasoning can then test, reinterpret, challenge, or even ignore that ore depending on goals, constraints, and context. In this sense, the TCW does not compete with logic or planning. It feeds them, providing the associative substrate from which multiple narratives, strategies, and decisions can be constructed without collapsing prematurely into a single line of thought.

Asking the LLM for Connecting the Dots Guidance

We could ask the LLM to help us determine which relationships have the most value as far as presenting correlations. We could simply submit the chain of strong correlations as it currently stands to an LLM system. However, we risk the LLM system negating anything novel (the bane of my relationship with ChatGPT and Grok), which has the benefit of making more logical decisions but enabling logic to shut our eyes towards other possibilities.

Additionally, an LLM system, at the time of writing, can take a long time (up to minutes) to work on the connect the dots problem. This is bad for run-time, where even a few seconds can be troublesome. However, without run-time levels of performance, for me, it has been quite impressive in returning something at least pretty good within the timeframe of less than a minute.

For this case of the otters, LLM systems will do a good job because this otter scenario is related to the well-known case of Dr. Thomas Paine. It’s novel to you, but not novel in our culture, so the LLM system was helpful. However, many of our top priority problems are novel to the world, and the inventive capability of LLM systems of today isn’t that good with ingenuity—creative and original thinking (see my blog, Long Live LLMs! The Central Knowledge System of Analogy). Thankfully, the bulk of the serious problems might be novel as a whole, but they are systems of known fragments. Meaning, the puzzle pieces are there—the world is huge and someone somewhere most likely worked on one or more of the fragments. It’s then about finding the puzzle pieces and fitting them together into our novel solution.

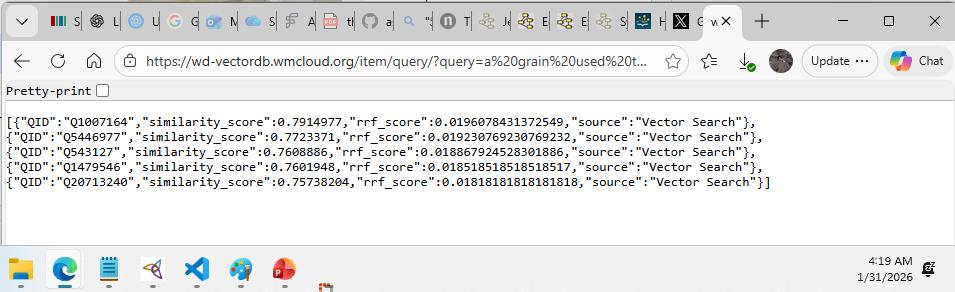

Wikidata as a Practical Entry Point to the Semantic Web

Before introducing IRIs in the abstract, it is useful to ground the discussion in a concrete, widely used example of an IRI source: Wikidata.org. Wikidata is an open, collaboratively maintained knowledge base that assigns stable identifiers to millions of real-world concepts—people, places, materials, organisms, processes, and more. Wikidata is heavily utilized by extremely large enterprise organizations such as:

- Wikipedia – Uses Wikidata for infoboxes, interlanguage links, and structured facts across all language editions.

- Google – Draws on Wikidata as a reference source for Knowledge Panels and entity disambiguation.

- Amazon (Alexa) – Uses Wikidata to answer factual questions and resolve entities in voice queries.

- Apple (Siri) – Leverages Wikidata-style structured sources for entity grounding and factual lookups.

- Microsoft (Bing) – Uses Wikidata to enrich search results and power knowledge graph features.

- DBpedia (another major IRI source) – Aligns and cross-references entities with Wikidata for linked open data.

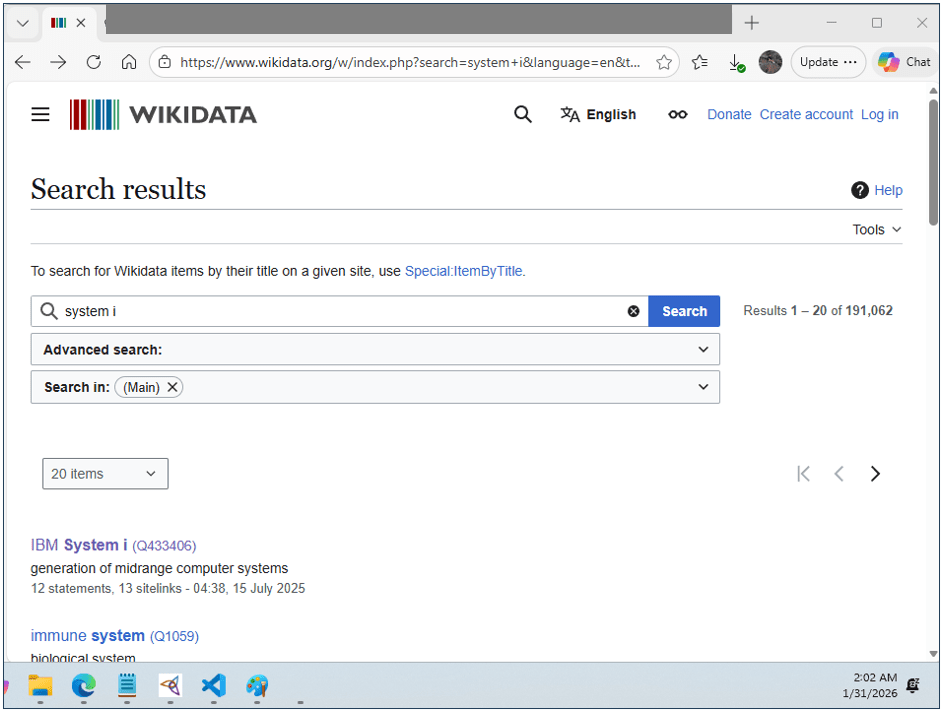

Each concept is represented by a globally unique identifier (made unique with a QID for Wikidata), accompanied by labels, descriptions, and structured relationships. What makes Wikidata especially valuable in practice is not just its breadth and committed utilization, but the fact that it is designed to be queried programmatically, returning structured results that can be inspected, compared, and reused. The problem is that inspection and comparison of terms matching a queried concept, the disambiguation of possible terms, requires some sort of intelligence.

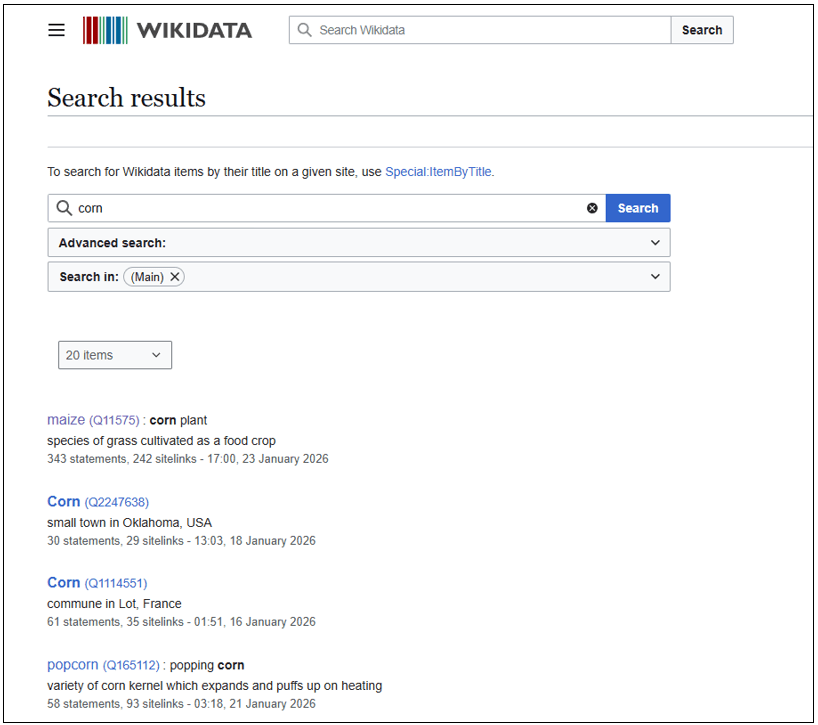

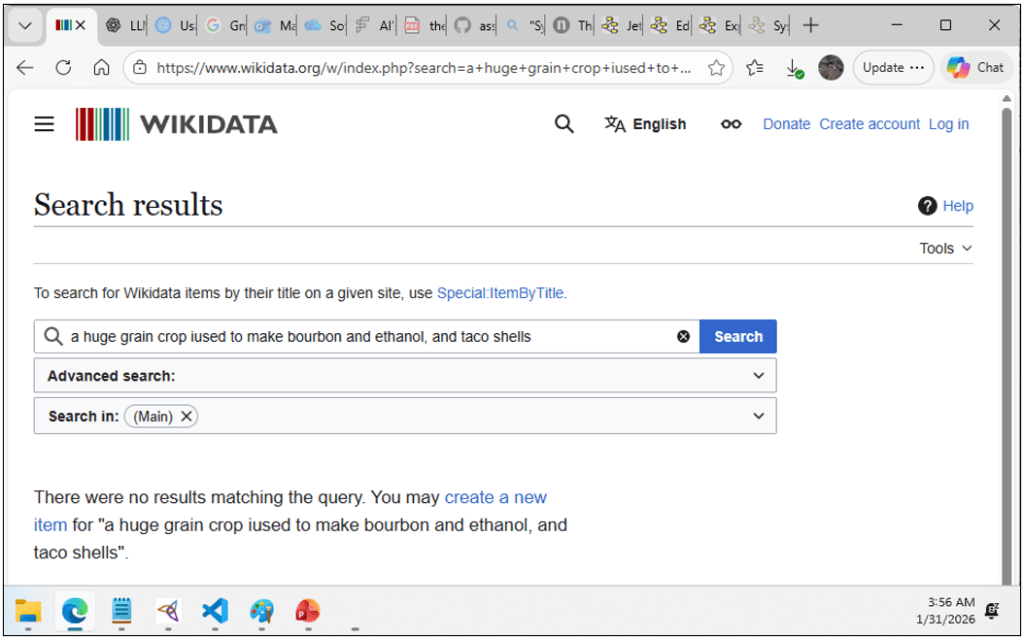

A simple search in Wikidata immediately reveals why disambiguation is necessary. A label such as “corn” does not resolve to a single answer, but to a set of distinct entities: maize (the crop), geographic locations, derivative food products, and other unrelated concepts that happen to share the same surface label. Wikidata will return one or more candidate entities for a label, but (at the time of writing) it does not infer your intended meaning. Disambiguation is performed by the caller (human or application) using context such as descriptions and statements. This makes Wikidata an authoritative source of identifiers, but not an interpreter of human intent … again, at the time of writing.

For the purposes of this blog, although there are many reputable sources for IRI, we will deliberately focus on Wikidata alone. This is not because Wikidata is necessarily the best source of IRIs, nor because it is sufficient for all enterprise use cases, but because it provides a clear, accessible reference layer that allows the mechanics of disambiguation, resolution, and caching to be explained without introducing unnecessary complexity. Wikidata is capable of services the vast majority of cases. Other IRI sources—such as DBpedia, SKOS, schema.org vocabularies, industry standards, and domain-specific registries—follow similar patterns and often expose comparable APIs, but differ in depth, scope, and governance. The architectural approach described here generalizes naturally to those sources.

With that foundation in place, we can now talk about IRIs more generally—not as an abstract Semantic Web ideal, but as a practical mechanism for anchoring meaning across systems.

International Resource Identifiers (IRI)

Because the Explorer Subgraph (and more broadly, the EKG, the protagonist of Enterprise Intelligence) are knowledge graphs—in a looser, pragmatic sense that goes beyond taxonomies and ontologies—they naturally benefit from incorporating ideas from the Semantic Web. The most important of these ideas is the use of International Resource Identifiers (IRIs) as stable, unambiguous identifiers for things we talk about.

An IRI is not interesting because it is usually a URL that resolves to some web page. It is interesting because it provides a globally unique name for a concept, independent of how that concept is labeled, stored, or described locally. Labels change. Descriptions evolve. IRIs do not. They give us a way to say this thing, once, and mean the same thing everywhere.

In Enterprise Intelligence, I described a very practical way to introduce this idea into enterprise systems: adding IRI columns to all tables where each row represents an object. For example, a product table, a location table, a material table—each can retain its primary key, business-friendly surrogate keys, and labels, while also carrying an optional IRI that points to a shared conceptual identity. Nothing breaks. No one is forced to think in RDF. But suddenly, data that lives in separate systems has a chance to line up conceptually.

The ES builds directly on this idea. When a BI query, an insight, lights up a tuple node in the TCW, that node can be anchored—when appropriate—to an IRI. Sometimes that IRI comes from Wikidata, which is deep and wide enough to cover many common concepts. Other times it comes from a domain-focused source: genomics databases, agricultural vocabularies, chemical registries, industry standards. These sources are often deeper but narrower, and they contain precisely the kinds of obtuse, technical distinctions that enterprises actually care about.

This is why the ES is not “just Wikidata.” Wikidata provides an excellent orientation layer—a shared semantic backbone. But enterprises quickly run into places where the real signal lives elsewhere. The role of the Explorer Subgraph is to cache and stitch together these IRIs across sources, based on actual analytical use, rather than theoretical completeness.

In practice, this means that the ES can hold a mixed neighborhood: familiar Wikidata concepts side by side with highly specialized domain IRIs, all connected by relationships that reflect how the enterprise uses them. Corn is used in bourbon. Corn has a specific reference genome. Corn participates in regulatory classifications, supply chains, and processes that no single ontology fully captures.

By grounding the graph in IRIs while remaining agnostic about any single semantic authority, the Explorer Subgraph gains two critical properties: semantic precision when it is needed, and operational flexibility everywhere else.

Now is a good a time as any to state:

Wikidata should be treated as a shared reference layer—queried sparingly, identified respectfully, and the results are cached aggressively—so that exploratory systems can benefit from it without becoming dependent on it. Please note these guidelines on being a good Wikidata.org citizen.

That means whatever is returned from Wikidata should be cached, to a structure such as the Explorer Subgraph. Which is much of the point of this blog.

The Explorer Subgraph is Not an Ontology (not precisely, anyway)

Ontologies exist to define what is structurally true about a domain. They provide stable classifications, carefully curated relationships, and constraints that allow systems to reason deterministically about meaning. In an enterprise setting, ontologies work best when they change slowly and reflect consensus rather than conjecture.

The ES should be linked to strictly ontological knowledge graph, but in a way that it’s clearly a self-contained entity.

The ES is used by System ⅈ as it peruses the TCW. As intuition, if we are hiking through the deserts of Utah and need to refill our filtered water jug, we obviously first go to where we know there is water. Barring that, we look for first-order clues such as signs of rich vegetation, like a stand of cottonwoods off in the distance. A creek is highly correlated with a stand of cottonwoods.

If even that is not visible, we shift to second-order clues: features that are not correlated with water directly, but are correlated with the conditions that support cottonwoods. A shallow draw cutting through the landscape, a convergence of washes, or a continuous green trace that follows the contour of the land suggests a drainage path. Those features do not guarantee water, but they narrow the search by pointing toward where first-order clues are more likely to appear.

Its job is not to define truth, but to make discovery possible when the data, the questions, or the relationships are incomplete. The relationships captured in the ES are intentionally provisional—not constrained to what we know we know. They represent hypotheses about how things might be related, what roles an object might play in different contexts, and which paths through the data might be worth investigating further.

For this reason, the ES does not introduce axioms into the semantic ontology. Its edges are not assertions of fact, but assertions of interest—tagged with provenance, confidence, and status. Some of these hypotheses will later be validated and promoted into curated knowledge. Many will be weakened, revised, or rejected. All of that outcome data is valuable.

Conflating these exploratory hypotheses with ontology would pollute the semantic layer and undermine trust. Keeping them separate allows the knowledge graph to remain both rigorous and curious: stable at its core, but exploratory at its edges.

When a search through the TCW is trying to build a chain of strong correlations, it will eventually hit dead ends. A dead end doesn’t necessarily mean there is no relationship — it often means the relationship is indirect, mediated, asymmetric, or simply not visible as a strong correlation at the current level of aggregation.

This is where the Explorer Subgraph comes in. If a path stalls at corn sales, the ES provides a set of nearby, role-based probes — things corn participates in, enables, constrains, or flows into: bourbon, cattle feed, ethanol, corn oil, and so on. These are not ontological facts about what corn is (taxonomy: grain → crop). They are hypotheses about what corn does in the enterprise and economy — its functional adjacencies.

The key point is that these adjacencies are often directional. Corn may strongly affect bourbon economics (via mash bills, substitution, input cost sensitivity), while bourbon barely affects corn because bourbon consumes a tiny fraction of total corn output. In TCW terms, you might see a strong conditional probability from corn → bourbon (or strong effect correlation under the right slicing), even if bourbon → corn is effectively noise. That asymmetry lets the search continue: not by pretending a symmetric Pearson correlation exists, but by taking a role-informed step into a related space where correlations may reappear.

If you zoom into the intelligence of an enterprise we see human workers and AI agents functioning as locally intelligent units—more like the arms of an octopus than components of a single brain. Each domain team, system, or agent perceives its own environment, makes decisions, notices exceptions, and acts. Intelligence is everywhere, but it is fragmented.

What enterprises lack is not intelligence at the edges, but a central nervous system. A way to integrate perception over time, to notice patterns that span domains, to accumulate memory, and to surface context that no single actor can see alone. Business Intelligence systems were an early attempt at this. Machine learning added reflexes. What I’m exploring here—System ⅈ, Time Molecules, context graphs, process discovery—is the next layer: continuous, background integration of experience.

In this framing, AGI is not a single mind dropped into a business. It is the gradual emergence of coordination across many minds—human and artificial—supported by systems that can remember, explore, and reason over time. We’ve been building toward this unknowingly for decades. The difference now is that we finally have the tools to connect the pieces into something that behaves less like a pile of organs and more like a living system.

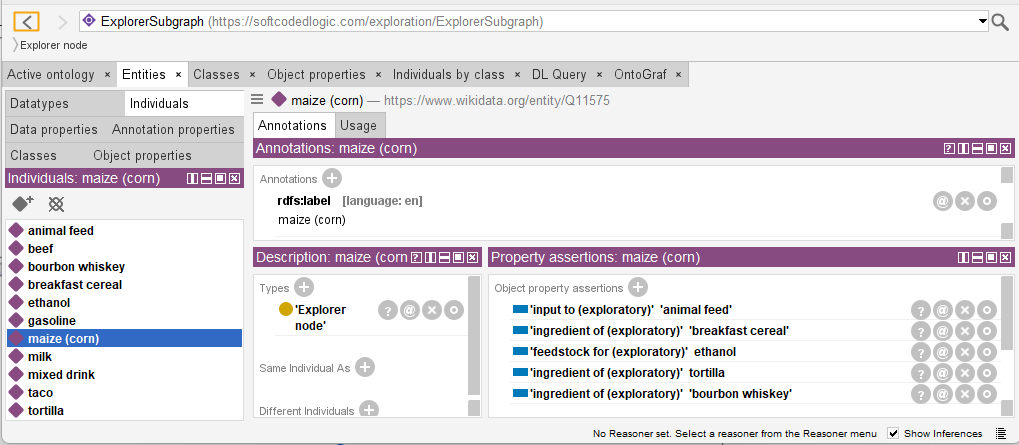

Figure 4 is a sample of relationships of maize, as it would look in the OntoGraph view in Protege, a free, open-source ontology editor and framework for building intelligent systems.

Figure 5 shows in table form, the relationships (which Protege calls “Property assertions”).

Disambiguating Labels

Disambiguation is the act of determining which specific concept from a list of choices a symbol is intended to represent. In Wikidata, this is visible immediately: a simple search for “corn” does not return a single authoritative answer, but a list of distinct entities—maize (the crop), Corn (a town in Oklahoma), Corn (a commune in France), popcorn (a specific food variety), and others. Each of these entries is internally consistent and correct within Wikidata, yet only one aligns with a given real-world context. Disambiguation, therefore, is not about finding “the” answer, but about selecting the right answer for the situation at hand.

The moment we relax the assumption that a label cleanly maps to a single, obvious IRI, the problem changes character. Labels are overloaded, context can be thin or implicit, and the “right” entity is often not the most popular one. Once we allow identifiers beyond Wikidata—or even when we remain within Wikidata but encounter ambiguous terms like Apple, Jordan, or Mercury—simple lookup is no longer sufficient. At that point, the system must interpret, not just resolve.

This is where a large language model becomes necessary. An LLM can weigh contextual cues, reconcile competing meanings, and select the most appropriate IRI given the surrounding text, prior exploration state, or enterprise-specific intent. It can also reason across multiple IRI sources when Wikidata alone does not capture the concept well, or when internal ontologies and public knowledge graphs need to be considered together.

The trade-off is unavoidable. Bringing an LLM into the loop is slower and more probabilistic than direct Wikidata queries. It introduces latency, cost, and a degree of uncertainty that the Wikidata-only path deliberately avoids. For that reason, the architecture does not treat the LLM as the default mechanism for identity resolution, but as an escalation path—used when disambiguation, judgment, or cross-source reasoning is required.

With that motivation in place, we can now look at how the system uses an LLM carefully and minimally to resolve ambiguity and enrich the Explorer Subgraph—while still preserving the one-call constraint and the operational discipline established by the Wikidata-first approach.

This selection requires context—such as “a cereal grain used for food and fodder”—to distinguish agricultural maize from geographic locations or derivative products. In practice, disambiguation is the bridge between human language, which is often overloaded and informal, and knowledge graphs, which require precise, unambiguous identifiers to function correctly.

Probing the TCW for Chains of Strong Correlations

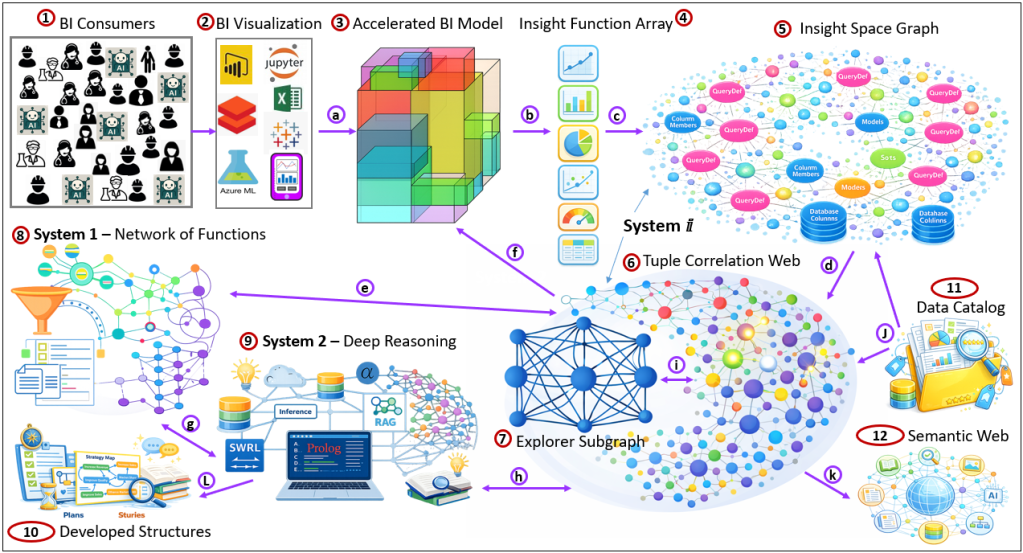

We are now at the heart of the blog. So, rise and shine and buckle up as we explore Figure 7, an ambitious high-level diagram of the layout of System ⅈ.

First, let’s learn about the objects in Figure 7 (numbers in the red circles):

- BI Consumers: BI analysts, knowledge workers consuming BI data, and AI agents consuming BI data in a RAG process.

- BI Visualization: Tools used to access the Accelerated BI Model (or whatever BI data source). Note the smartphone towards the bottom-right corner? That indicates the addition of knowledge workers as BI consumers.

- Accelerated BI Model: A BI data source that integrates BI data from across all domains of an enterprise, presents the data in a coherent semantic layer, is secure and trustworthy, and optimized by fast query results.

- Items 1-3 comprise a BI system. The disciplines and lessons of BI should be the spearhead of AI.

- Insight Function Array: An array of functions that accepts a BI query result (generally a dataframe from what is at high-level a SQL GROUP BY query), and looks for insights a human analyst would find if looking at the dataframe through various visualizations.

- Insight Space Graph (ISG): Graph of BI queries, parsed into data source, filters (slice), features (dice), metrics, and models of insights that the IFA (4) found.

- Tuple Correlation Web (TCW): A graph of correlations between tuples derived from the BI queries.

- The ISG (5) and TCW (6) comprise System ⅈ.

- Note that some of the nodes in the TCW are “lit up”. These are the tuples that would have been recently part of BI queries by the BI Consumers (1). Think if this as what you see, hear, and feel as you enter a room.

- Explorer Subgraph: This is the subject of this blog.

- System 1: A network of high-speed, deterministic functions. The output of a function is generally the input to one or more other functions.

- System 2: Deep reasoning and organizing processes analyzing rules, generally in the form of Prolog and Semantic Network, using Prolog and SWRL queries, respectively.

- Developed Structures: Graph structures that convey process information. These structures are the high-order products of deep thinking. This is intelligence much beyond just cause and effect, to systems thinking, to unintended consequences.

- Strategy Maps and Plans:

- Strategy Maps: Explicit cause-and-effect graphs linking actions, intermediate outcomes, and KPIs to express why a plan is expected to work.

- Plans: Structured sequences of anticipated events that describe how the present state might be transformed into a desired future state.

- Stories: Cached, narrative-shaped memories of events and decisions that preserve context, causality, and meaning beyond raw facts.

- Trophic Cascades: Chains of indirect effects where a change in one part of a system propagates through multiple layers, often amplifying or inverting intent.

- Configuration Trade-Off Graphs: Graphs that capture competing benefits and costs of configuration choices, making unavoidable compromises explicit rather than implicit.

- Analogies: Maps of two sets, where the role each element plays in one set could be compared to an element of the other set.

- Map of Risk and Sacrifice: Definition of phase changes. That is where turning the knob is more complicated than more or less cow bell.

- Strategy Maps and Plans:

- Data Catalog: A unified catalog of metadata from data sources across an enterprise.

- Semantic Web: Knowledge graphs that generally adhere to Semantic Web standards. This involves private knowledge graphs and public knowledge graphs such as Wikidata.org.

At a higher level, the objects in Figure 7 divide into these groups:

- Business Intelligence: {1, 2, 3} – Collection, organization, and analysis of data.

- System ⅈ: {4, 5, 6, 7} – Statistical correlation.

- System 1: {8} – Statistical recognition. Web of functions— the output of a function is the input to others. See Automata Processor.

- System 2: {9} – Reasoning. System 2 is an assemblage of LLM in a RAG process, Prolog for reasoning, and SWRL for reasoning from a knowledge graph.

- Developed Structure: {10, 11, 12} – We could think of the Data Catalog (11) and the Semantic Web as a kind of developed structure, alongside plans, stories, analogies, etc.

Now let’s describe how the objects of Figure 7 relate (numbers in purple circles):

- BI Visualization → Accelerated BI Model — BI visualization tools issue queries against the Accelerated BI Model, relying on its semantic layer, security, and performance guarantees.

- Accelerated BI Model → Insight Function Array — Query result sets produced by the Accelerated BI Model (typically SQL GROUP BY–style dataframes) are passed into the Insight Function Array for automated inspection.

- Insight Function Array → Insight Space Graph (ISG) — Insights discovered by the Insight Function Array—metrics, patterns, anomalies, trends, and derived models—are materialized as nodes and relationships in the Insight Space Graph.

- Insight Space Graph (ISG) → Tuple Correlation Web (TCW) — Tuples emphasized by BI queries and insights in the ISG induce correlations that are computed and stored in the Tuple Correlation Web.

- System 1

⇄Tuple Correlation Web (TCW) — System 1 functions rapidly probe and traverse the TCW to surface relevant correlations, neighborhoods, and candidate associations based on the current context. - Tuple Correlation Web (TCW) → Accelerated BI Model — Correlations and attention signals discovered in the TCW guide new or refined BI queries back against the Accelerated BI Model, closing the exploratory loop.

- System 1

⇄System 2 — When System 1 encounters ambiguity, conflict, novelty, or the need for rule-based reasoning, it escalates the situation to System 2. - System 2

⇄Tuple Correlation Web — System 2 deep reasoning and organizing processes (Prolog, SWRL, semantic inference) construct and refine the Explorer Subgraph as a focused reasoning surface drawn from System ⅈ structures. - Explorer Subgraph → Tuple Correlation Web — The TCW uses the Explorer Subgraph to provides hints of relationship.

- Data Catalog → Tuple Correlation Web — The Data Catalog anchors TCW nodes and relationships in authoritative enterprise metadata such as columns, domains, definitions, and lineage.

- Semantic Web

→Tuple Correlation Web — Enterprise and public Semantic Web knowledge graphs enrich the TCW with external semantics, equivalences, and conceptual relationships. - System 2 → Developed Structures: The output of System 2 are cached stories, plans, workflows, ontologies, etc. They are stored in graph form as an extension of the Enterprise Knowledge Graph, as explained in, Beyond Ontologies: OODA Loop Knowledge Graph Structures.

BI analysts query BI data sources, and those queries are not merely requests for data—they are attention signals. As enterprises become more data-driven, more knowledge workers become BI consumers across all domains of the organization. Each query represents a kind of higher-order sense, perception layered on top of curated, structured data. AI agents can also be BI consumers.

In the ISG, each BI query is captured as a QueryDef node, along with its metadata and any derived insights. When a query executes, it produces a dataframe, and each row of that dataframe is a tuple—a coordinate in the enterprise’s high-dimensional space.

Those tuples, drawn from recent QueryDefs, are found or added to the Tuple Correlation Web (TCW) and become “lit up,” in much the same way regions of the brain light up when something is recognized. Many of these tuples already exist in the TCW and already participate in correlations and conditional probabilities. What changes is not their existence, but their activation. Lighting up is not binary; it has strength. Ideally, each tuple and correlation carries a recency-weighted activation score that decays over time, much like a half-life. Repeated observation increases that activation, while inactivity allows it to fade. I think of this as analogous to Amazon’s category rankings, where both volume and recency shape what rises to the surface. The TCW thus becomes a living correlation field, reflecting what the enterprise is currently experiencing, not just what has ever been true.

Connecting the Dots

You know those “lightbulb” moments where you finally “get it”? For example, when Clark (“Christmas Vacation“) couldn’t figure out why the 10,000 twinkle lights on his house intermittently wouldn’t go on, Ellen had a literal lightbulb moment, realizing Clark plugged it into the switched live wire socket. And often, you don’t know truly remember what it was like before that connection was made. That’s the powerful significance of connecting the dots.

The role of System ⅈ is connecting the dots. This is an intense process because:

- There are a great number of dots surfaced at any given time that should be connected.

- We need to figure out what dots are missing in order to “draw the picture”. The challenging “we don’t know what we don’t know” problem inherent of real intelligence.

As it is with all System ⅈ processes, it operates over a structured field of information (for this blog, that is the TCW), continuously, in parallel, asynchronously, and self-throttling, mostly unnoticed. It recognizes what is being sensed—what is active—and probes for structure among the activated elements. System 1 responds when it has sufficient parameters to do so; some System 1 functions require all parameters, others only a subset. When System 1 recognizes something, that recognition itself becomes another activation signal, fed back into System ⅈ. Nodes dim as time passes, or may be actively suppressed when a System 1 response resolves the situation. This cycle runs constantly, without deliberation.

System 2 is engaged only when System 1 encounters something that requires focused attention—i.e. its probable and deterministic prediction is wrong. Of no fault of its own, System 2 is a bottleneck. It’s resource-intensive, complex, and slow relative to System ⅈ and System 1. Although System 2 is often described as single-threaded in our minds (because we have one body in the world that can really do only one thing at a time), there is no reason it must be implemented as a single-threaded process. What matters is that System 2 capacity is scarce relative to its duties of complex tasks with imperfect information, and so it must be used selectively.

The role of System ⅈ, then, is to assist both System 1 and System 2 by probing for relationships among what are clues that are currently in play. In the context of the TCW, this means searching for chains of strong correlations among lit up (active) tuples. System ⅈ does not exactly explore blindly, like plants roots that follow chemical cues. It is biased towards where human analysts and AI agents are already looking, exposed by BI queries that act as attention beacons.

To do this, System ⅈ uses the ES as a guiding map. From each lit tuple, it takes the slice-and-dice values—the categorical coordinates that place the tuple within the enterprise space—and asks what else might be related. If the value and relationships already exist in the ES, those relationships can be used immediately. If not, a deliberative reasoning agent can propose candidate associations and add them to the ES as hypotheses. For example, corn may be related to bourbon, ethanol, cereal, corn oil. These are not ontological assertions strictly speaking, but navigational structures that offer suggestions System ⅈ.

Each such association generates probes. System ⅈ looks for other lit tuples that contain the related symbol as an element and tests for correlation. When correlation is strong, the relationship is worth persisting in the TCW. When correlation is merely moderate, there is still investigative value. As discussed in another blog, Long Live LLMs, moderate probability is often a clue that suggests structure exists. but indirectly. The relationship may be mediated, confounded, or dependent on an additional slice, transformation, or semantic step.

In response to moderate probabilities, System ⅈ expands one step further. It retrieves relationships of the related symbol from the ES and queries candidate tuples for correlation by holding the original context steady while substituting in these newly related values. When a strong correlation appears at this next step, it is saved as part of a running chain of strong correlations. In this way, System ⅈ incrementally works its way around obstacles in correlation space, much like exploration in physical terrain.

This probing process runs continuously in the background. It is not deliberative. It does not explain or justify. It generates trails, chains of strong correlations, newly discovered or newly re-activated, each grounded in recent experience and context. These trails are handed to System 2 as groundwork—raw material for deeper reasoning, causal hypothesis formation, strategy maps, workflows, or decisions about what deserves focused human attention next.

Mitigating Performance Issues

As a reminder, System ⅈ is about probing possibilities, in a background process. That is just like the background processes of traditional software, performing maintenance tasks asynchronously from the current business at hand. However, that doesn’t mean it has millennia to solve problems. Basically, and unfortunately, performance will be configurable trade-offs between the quality of results and speed of obtaining results.

In fact, the role of the TCW as one of the co-stars of this blog is to constrain the space that System ⅈ explores.

Depending on the novelty of the problem space the AI is facing, that probing could take milliseconds. But for problems that are novel to the AI, it can take seconds, minutes, even days or months. That’s not really bad considering we humans still make many mistakes after years of learning and millions of others to teach us—and in novel situations, the best we can do is point ourselves in the direction of the most pros and cons, knowing we will be making up as we’re presented with obstacles we never thought about.

But the spaces we explore (data, problem, solution, and spaces) are somewhat open, infinite, for all practical purposes. The open spaces are constrained by clues we observe of the context and how they affect our surroundings. Genuine intelligence is about making sense and finding solutions within an open space. Exploring a closed space doesn’t require genuine intelligence, just algorithms.

We’ll explore a sampling of the major methods to mitigate poor performance, starting with constraining the tuple space to be explored—which is indeed the fundamental idea of the TCW/ES combo. Each are huge topics in themselves, and I will address them to a deeper level in future blogs.

Constraining the Tuple Space

Of course, the combinations of tuples to correlate is immense. As I mentioned earlier, it’s not just the number of tuples that are encountered, but the ones we need to guess at in order to fill in gaps is beyond immense. So that is the first lesson in mitigating performance issues: We’ll hardly ever get it right at the beginning, and will need to adjust course all the way through the journey. Acceptance of what might be cynically seen as a cop out, forces us to accept that genuine intelligence is a process of mapping out systems, then deducing what we can from those system maps.

Constraining the tuple space begins with the ability to sense what is going on and sort out the signal and noise—what turns out to be relevant towards making smart decisions versus what turns out to not be relevant. In a complex and changing world (which produces Black Swans) and is unfortunately often adversarial world, we don’t know. We can only rank things by probability.

In an adversarial world, our adversary wants us to follow what we believe to be true and toss out what we believe to be false. So we shouldn’t toss out weak possibilities, but we can intelligently apply our attention to a culled subset of possibilities.

It starts with sensing out surroundings, thousands of senses, and applying ideas of “edge computing” to devices, human workers, and AI agents. That is, performing rudimentary compression of data at the sensing device before it enters into the enterprise data systems. We can think of human knowledge workers as a very robust “edge computer”. People apply their intelligent skills to triage signal and noise.

A big concept of my book, Enterprise Intelligence, is that with the ability to onboard BI data from all domains (data mesh, data vault, LLM assistance with master data management, etc.), there will be very many more BI consumers from the population of knowledge workers. Additionally, AI agents will also become BI consumers.

This large population of BI consumers across very many domains and roles (item 1 of Figure 7) will integrate insights that were hopelessly fragmented. Currently, BI consumers will analyze BI visualization and:

- See an insight of clear and present interest to them.

- Miss the insight that would be of value to them (in a “Where’s Waldo” sort of way).

- See an insight that is interesting, but not presently useful, but might be useful to someone else.

For the 2nd and 3rd items, the Insight Function Array (item 4 of Figure 7) catches it. The main idea is that among those plentiful and diverse BI consumers, like thousands of senses throughout the enterprise, a coherent picture of what’s going on could be drawn from the dots.

The page, Tuple Correlation Web Probing Protocol, describes the protocol for prioritizing tuples.

Parallelism

The System ⅈ process is highly conducive to parallel processing—not just in the general sense, but in a very specific one. Probing candidate tuple relationships is an embarrassingly parallel task: each tuple pair can be evaluated independently using the same logic, without coordination or sequencing constraints. This allows System ⅈ to fan out across enormous spaces of possible relationships, testing many weak or speculative connections simultaneously and continuously in the background.

This form of parallelism is fundamentally different from System 2. System 2 reasoning is typically focused, attention-bound, and sequential—even when implemented asynchronously. It may spawn side investigations or defer work, but it does not decompose naturally into thousands of identical reasoning steps running in parallel. System ⅈ explores broadly; System 2 reasons deeply.

Foundation of Business Intelligence

As a Principal Solution Architect at Kyvos Insights (a cloud-scale platform for an enterprise-wide semantic layer and magnitudes of data acceleration and scalability) and a BI architect/developer for over 25 years, the AGI approach I take is naturally heavily influenced by the evolution of data platforms in the enterprise as the foundational architecture. The lessons learned from almost 40 years of BI evolution (since the days of Bill Inmon and Ralph Kimball) are no less important to an AGI as our human evolution is to us.

Everything that evolved in both realms is a part of their respective intricate systems intended to provide unsurpassed strategic decision-making. That’s another way to state the message of my virtual book, The Assemblage of AI (this blog is Chapter XI.2).

This applies to System ⅈ in regard to the tuple correlations computed for the TCW. These correlations can involve millions to trillions of rows of data. BI data sources optimize these computations, which will be very plentiful.

- The Role of OLAP Cube Concurrency Performance in the AI Era: Addresses how to support an explosion of BI consumers driven by the Internet of Things, AI Agents, and the expansion of knowledge workers becoming BI consumers.

- The Role of OLAP Cubes in “Enterprise Intelligence”: This blog argues that modern enterprise intelligence still depends on OLAP cubes’ ability to deliver fast, scalable, multidimensional analysis and high-concurrency performance—integrating them with contemporary structures like enterprise knowledge graphs to support rapid insights across vast data landscapes.

- All Roads Lead to OLAP Cubes… Eventually: This blog presents that as data volume, query complexity, and user concurrency increase across an enterprise, traditional ad-hoc aggregation approaches become insufficient and scalability demands inevitably lead back to multidimensional OLAP cube architectures—especially when equipped with smart aggregation capabilities like those Kyvos Insights offers—to efficiently support analytics at scale.

However, remember that these BI data sources are meant to be like a fallback authority, in the same way I’ve noted with Wikidata. We should cache results from the BI data sources and Wikidata to the ISG/TCW and ES, respectively. That is for the two reasons of mitigating load on those source systems and that the straight-forward graph structures of the TCW and ES involve light computation.

Business Intelligence data can be pretty “lossy”. It aggregates, filters, and stabilizes reality into condensed forms that can be trusted, compared, and acted upon across an enterprise. But it can be a challenge to decompose calculations back to their raw form, if we didn’t save the raw data.

Fortunately, over the years, BI platforms have responded to this tension by preserving access to raw data alongside curated aggregates. Approaches such as Data Vault modeling explicitly preserve immutable raw facts from business rules, allowing calculations to be recomposed as interpretations change. Famously, distributed processing frameworks like Spark/Databricks emerged to make large-scale reprocessing of raw event data feasible—not just for sums and counts, but for windowing, reshaping, enrichment, and repeated analytical passes under different assumptions. These techniques acknowledge that meaning is not fixed at ingestion time. The ES operates in this same spirit. It does not replace raw data or BI semantics, but provides an additional, lightweight mechanism for revisiting and reframing compressed intelligence when new questions arise.

The role of the ES is not to undo this compression, but to rehydrate it selectively. By drawing on knowledge graphs for stable identity and structural adjacency, and on large language models for contextual interpretation and role inference, the Explorer Subgraph reintroduces plausible intermediate structure—materials, roles, mediators, and alternative granularities—that are absent from BI by design. These rehydrated possibilities are not accepted as truth; they exist only as navigational hypotheses and must re-enter the TCW and the BI system itself to earn validation. In this way, BI remains the arbiter of truth, while the Explorer Subgraph expands the space of explanation without compromising it.

TCW Probing Protocol

Jumping the Chasm

When probing the TCW in search of chains of strong correlations, we’ll run into dead ends. Remember, this is a probing process. So running into a dead end in the process of finding chains of strong correlations is a “thought” System ⅈ is handing off to System 2 to investigate.

This method is based upon abductive reasoning, which begins with connecting the dots and deducing a best guess theory from the relationships.

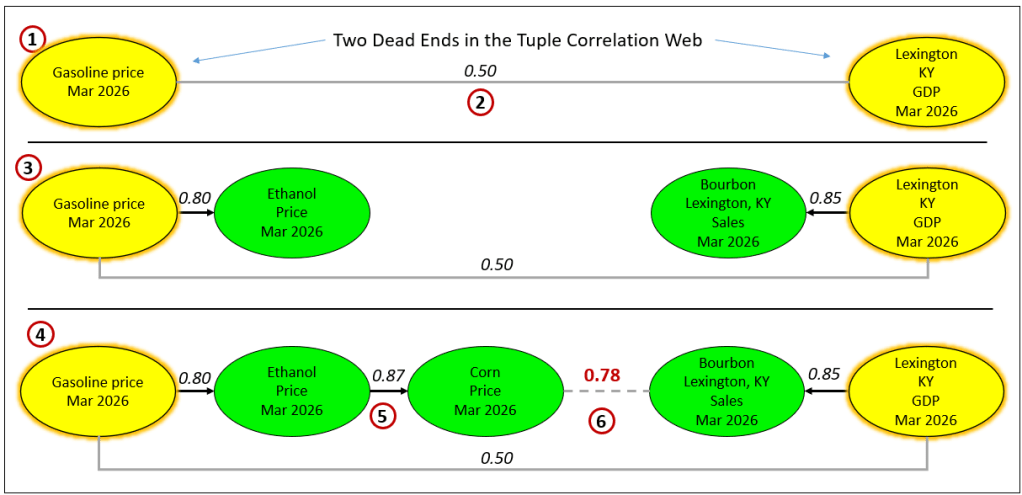

For example, let’s say there is chatter about Gasoline and the GDP of Lexington, KY. Figure 8 shows the process by which two seemingly disparate nodes can find their way together.

Here are descriptions of the numbered items:

- Two nodes in the TCW are lit up. Meaning, these two issues have come up in conversation, things heard on the news, etc.

- We calculated the correlation between the two lit up tuples.

- The correlation between the price of Gasoline and the gross domestic product of Lexington, KY over the 12 months prior to March 2026 is 0.50.

- 0.50 indicates a moderate correlation. Something is there, enough to catch our attention, but not enough to act upon.

- So let’s probe deeper.

- We find a strong correlation for both lit up nodes.

- Gasoline → Ethanol blending

- Lexington GDP→ Bourbon Sales

- We find one more strong correlation for Gasoline.

- Gasoline → Ethanol blending → Corn

- Lexington GDP→ Bourbon Sales

- We’ve reached a dead end on all threads so we hit up System 2 for some ideas.

- The next (and in this case the last) correlation is between the price of corn and bourbon sales in Lexington.

- We find a correlation of 0.78, which is actually less than the other correlations.

- However, 0.78 is still fairly strong, so it completes the chain of strong correlations.

Hitting up System 2 for ideas is curiosity. We’re striving to find connections between disparate issues. What closes the gaps? When System ⅈ runs into a dead end, it signals System 2 to investigate. For us, this is like our subconscious passing a thought to our conscious.

In a page on this blog site, System 1 and System 2 – Prolog and Knowledge Graphs vs. LLMs, I mapped Prolog and knowledge graphs to System 1 and LLMs to System 2. I still stand by that framing—not because LLMs are slower in wall-clock time, but because they’re doing inference under uncertainty rather than executing a trusted, deterministic retrieval or proof. Prolog and a KG query are direct: they run against what is already encoded and they either succeed or fail cleanly.

An LLM prompt, by contrast, is usually a higher-order request: not “fetch this fact,” but “interpret this situation,” “propose candidates,” “bridge this gap,” or “invent a plausible path.” In the Explorer Subgraph story, that means the LLM is not the System 2—it’s a System 2 instrument.

When System ⅈ is probing correlation-space and runs into dead ends, it can pull in System 2 to generate hypotheses and vocabulary, while still grounding those hypotheses through System 1 structure—your local KG, or something like Wikidata as an external System 1 scaffold. In other words, the LLM is often playing a subconscious role inside deliberation: a generator of candidates and bridge-ideas that the rest of the stack must then validate.

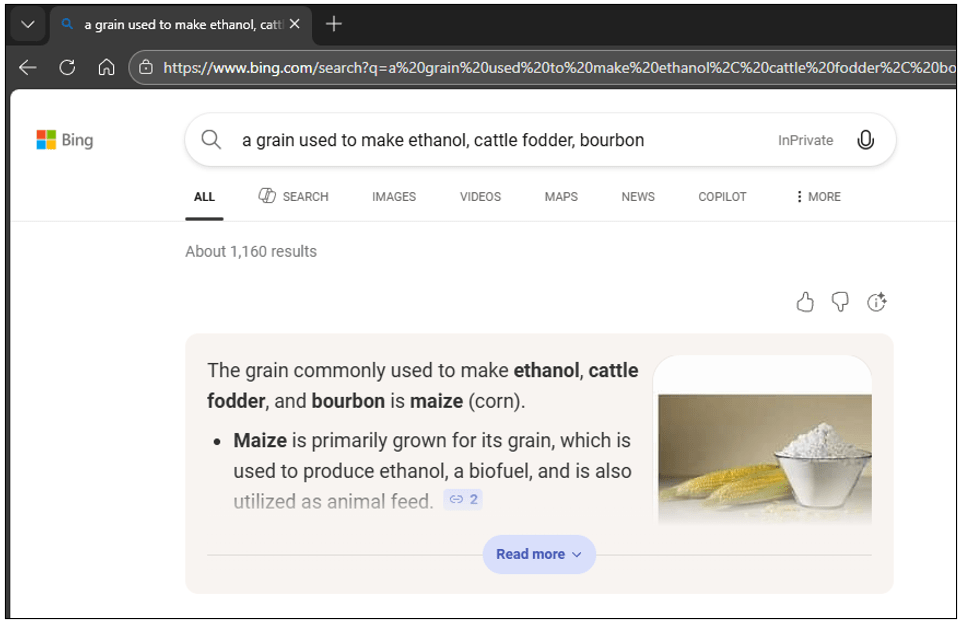

In the U.S., ethanol is a major corn sink. ethanol economics track fuel-blend economics, and corn is the dominant feedstock.

There are a few modes towards jumping the chasm, each with pros and cons:

- Relationships from a Knowledge Graph:

- Pros:

- Faster than an LLM, but not by magnitudes, perhaps two to five times faster, like 500 ms versus three or four seconds.

- A KG such as wikidata.org is also a Semantic Web. Meaning, every node has a unique identifier, International Resource Identifier (IRI).

- Cons: Less ability to disambiguate objects. For example, “corn” versus “maize”. I’ve known for a long time that what we refer to as “corn” in the U.S. is really “maize”, but I’ve never referred to it as “maize”. Further, “corn” in the U.K. refers to many grains.

- Pros:

- Relationship from an LLM:

- Pros: Very versatile in its interpretation. For example, it can understand from my prompt that I mean maize, when I, a U.S. citizen, say “corn”.

- Cons:

- Significantly slower than a KG. As mentioned above, a few times slower.

- May not have fully assimilated newer material. Although LLM systems of today employ a RAG process to access information after its training, I don’t believe that supplemental material is as fully assimilated as it is for the data it was trained with. For example, my OOP knowledge (30 years) is probably more assimilated in my brain than functional programming (7 years). Additionally, newer concepts would logically have less material for training.

- Relationships from a KG and LLM:

- Pros: Can default to KG, taking advantage of its speed, and resort to an LLM is the KG doesn’t have an answer.

- Con: More complicated to implement.

The rest of this blog emphasizes #3.

Table 1 summarizes the roles of these parts. Note that Prolog is applicable for System ⅈ, 1, and 2, but I chose not to include it in Table 1 to keep it tidier.

| Step | Role | Primary Knowledge Base | Query Language |

|---|---|---|---|

| Intent resolution | System 2 (subconscious mode) | LLM | Natural language prompt. |

| Identifier anchoring | System 1 | Wikidata / DBpedia / SKOS / schema.org | SWRL |

| Hypothesis expansion | System ⅈ | Explorer Subgraph | Background process. |

| Validation | Inductive | TCW | API call |

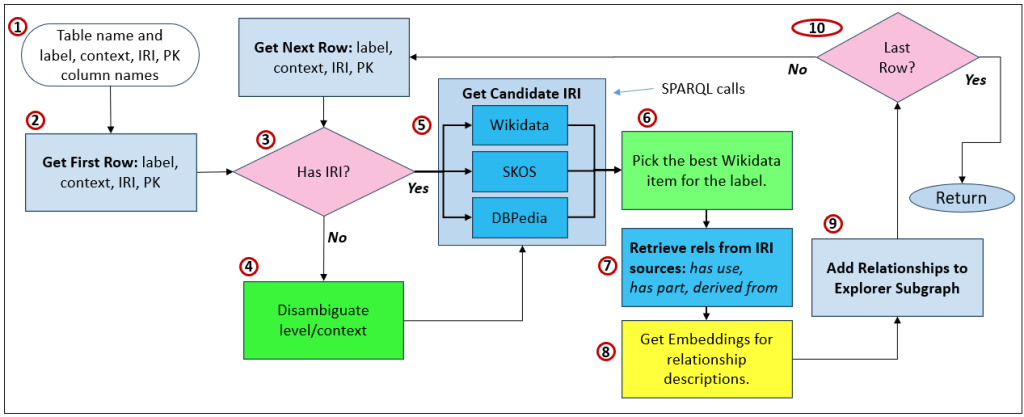

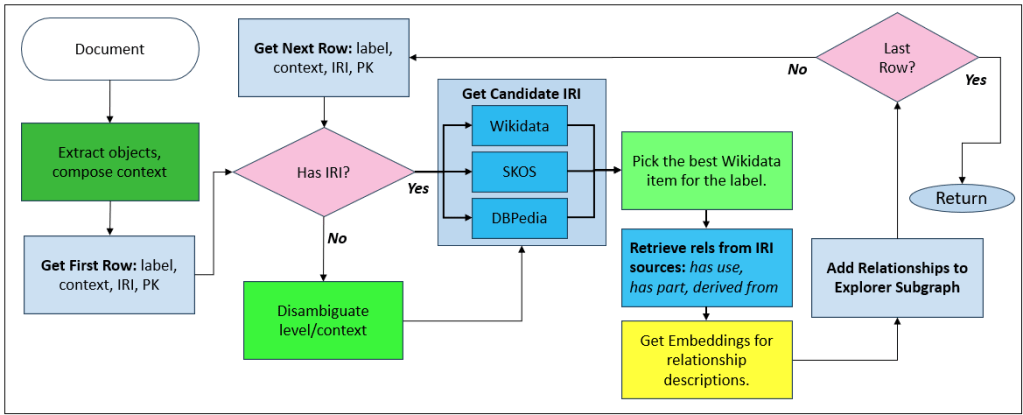

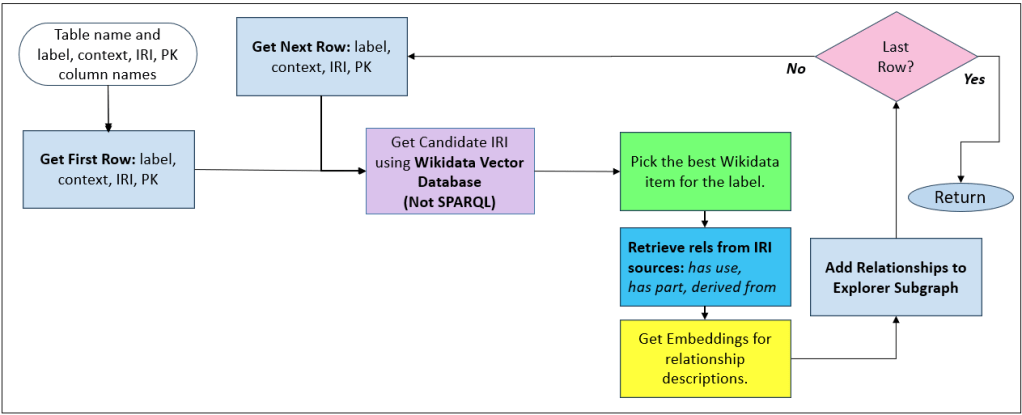

How the Explorer Subgraph is Built

There are three modes. The first two can be used to seed the ES, but can also be engaged as new data and/or documentation is acquired:

- Databases: The advantage over #2 is that these relationships are reliably authored through data stewards.

- Documentation: Intended to incorporate for novel vocabulary.

- In-process: Adding new relationships to the ES at the time it’s exploring.

The ES is constructed from enterprise reality—starting with database tables, not hand-authored ontologies.

- Iterate a table (ex.

Materials). Each row is a candidate object. - Resolve identity via IRI:

- If the IRI exists, use it.

- If missing, an LLM proposes a stable IRI using the row’s name + description (stored as “proposed,” not “true”).

- Create/merge an Exploration node for the material and link it back to the strict KG when possible (

SAME_AS/ identity linkage). - Expand via the Probing Process:

- For each material, a RAG process proposes “things this material plays a role in,” with a short role explanation.

- The system chooses a relationship type (ingredientOf, feedstockFor, etc.), stores provenance/confidence, and optionally stores an embedding of the explanation for later semantic search.

Table 2 shows an example of the relationships with a cornerstone on maize. The first four rows are similar to what is shown in the Protege snapshot of Figure 4. The subsequent rows represent relationships from domain-focused IRI sources, which go much deeper than Wikidata for the genetic aspects of maize.

Figure 9 depicts what the items in Table 2 would look like in graph form in the ES.

This produces a layer of navigational structure that is separate from ontology but connected to it. Ontology remains clean and authoritative; exploration remains permissive and useful.

The LLM API versus the Chat Window

For both ChatGPT/OpenAI and Grok, there is a difference between the capabilities of the chat version versus the API, the former generally having more power. The API, by contrast is likely to lack the built-in query features of the Chat. So what you get in the Chat (at least for ChatGPT and Grok) might be different. That is especially in the way we’re using the IRI sources.

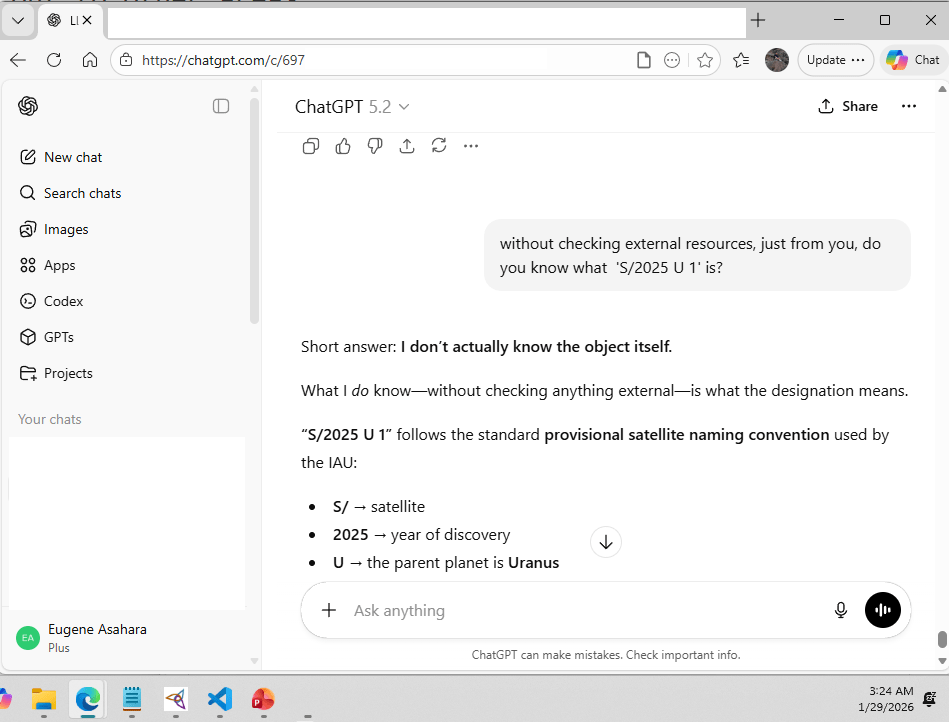

I asked ChatGPT 5.2 to validate for itself that the API, which is what we’ll be using in a software system, is not as robust as the Chat. In ChatGPT’s own wise words:

You can use an LLM to disambiguate up to the exact point where authoritative lookup becomes possible because the model has learned, from exposure to large volumes of structured and semi-structured text (including Wikidata-derived descriptions, ontologies, standards, and technical documentation), how concepts are named, described, and distinguished across contexts. Given a label and context, the LLM is well suited to infer the intended semantic sense and express it as a canonical label plus focused search terms—essentially translating human language into something compatible with knowledge-graph indexing. At that point, authority is deliberately handed off to Wikidata: the graph returns a bounded set of candidates defined by its own ontology, and the LLM is invoked again only to select the best match based on descriptions and claims, not to invent identifiers. This division of labor exploits the LLM’s strength in contextual interpretation while preserving Wikidata’s role as the authoritative source of identifiers and relationships, yielding a pipeline that is both semantically robust and operationally reliable.

Grok pretty much said the same thing (in Grok’s own words):

Regarding whether this applies to me similarly—a potential difference between the chat interface and the API: Yes, there can be differences, but not exactly in the same way as with OpenAI’s models. In this chat setup, I have built-in access to tools (like web browsing or searches) that allow me to query external sources like Wikidata in real-time if my internal knowledge needs verification or if ambiguity arises. This makes disambiguation more reliable here, as I just demonstrated by cross-checking. The Grok API, on the other hand, exposes the underlying model (currently based on Grok-1 or similar iterations) for raw completions, but it supports tool calling (similar to OpenAI’s function calling). So, if you implement external calls to Wikidata (e.g., via SPARQL queries or API endpoints) in your API code, you can achieve the same reliability. Without those, the API might rely purely on the model’s baked-in knowledge, which could falter on edge cases or less common ambiguities, much like what you’re seeing with GPT-4.1. For well-known terms like “corn” meaning maize, though, the model itself should handle it fine in either context based on prompting.

This discrepancy between chat and API behavior is likely to persist for some time. Chat interfaces operate at the pace of human interaction—typed or spoken prompts that arrive relatively slowly (in the timeframe of seconds to minutes), vary widely in structure and intent, and come from a relatively small population of concurrent users. API usage, by contrast, is designed for machine-to-machine interaction: high concurrency, more predictable inputs, and tight latency constraints. As a result, chat systems can afford to incorporate richer, more integrated capabilities, while APIs prioritize scalability, determinism, and cost control. For the purposes of enterprise systems and AI agents, this makes the API the correct foundation—even if it lacks some of the convenience and apparent “intelligence” seen in chat-based interactions.

That’s actually good. It enables AI and data engineers to employ what LLMs do best—interpreting ambiguous human intent, normalizing language into actionable structure, and making bounded contextual judgments—and incorporating other aspects of intelligence as needed (ex. reasoning through a web of rules), in the spirit of the Assemblage of AI.

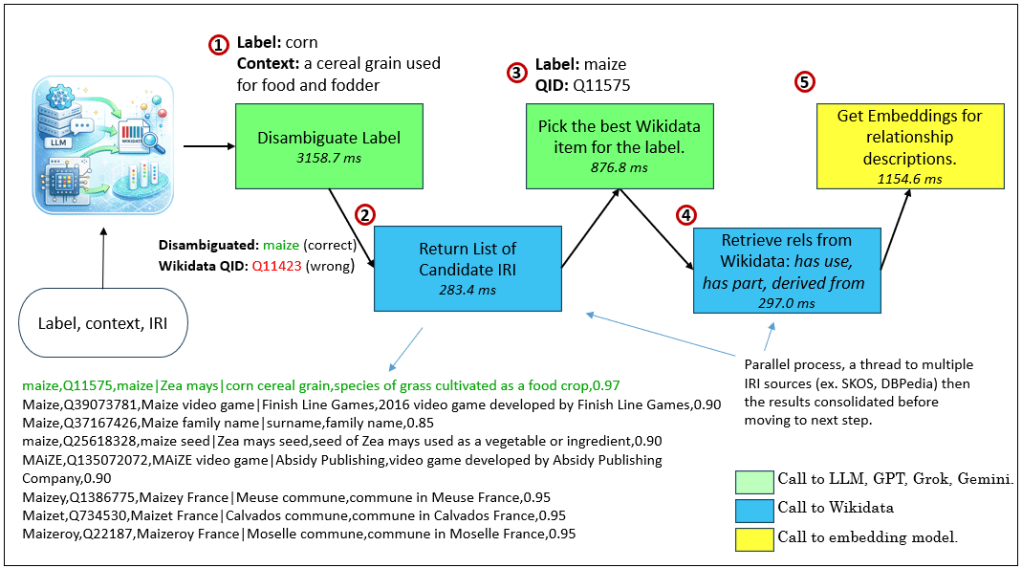

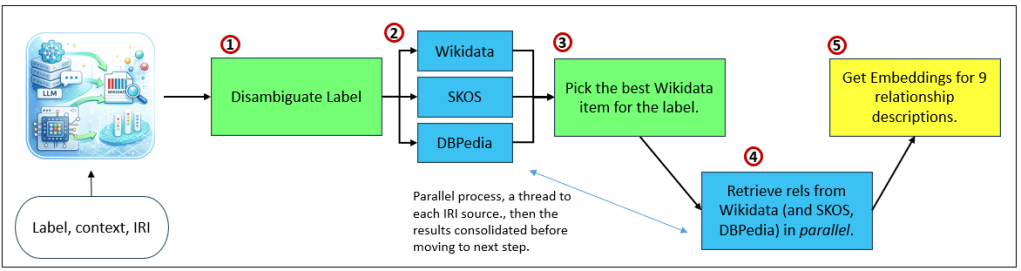

Therefore, the high-level theme for matching a label to a Wikidata IRI is:

- Disambiguate the intended meaning (LLM): Starting from a raw label and optional context, the LLM infers the intended semantic sense and produces a canonical label along with focused search terms suitable for querying a knowledge graph.

- Query Wikidata for candidate entities (graph lookup): Using the canonical label or search terms, the system queries Wikidata to retrieve a bounded, authoritative list of candidate entities, each with its own identifier and description.

- Select the best-matching entity (LLM): The LLM is invoked again to evaluate the returned candidates against the original context and select the entity that best fits, yielding a Wikidata IRI with an optional confidence or rationale.

- Proceed with graph-based enrichment and caching: Once the entity is resolved, its relationships can be retrieved, embedded, cached in the Explorer Subgraph, and reused without further LLM calls.

To demonstrate the difference, I submitted this prompt to ChatGPT 5.2, asking it to disambiguate corn:

You are a component inside a larger system pipeline.

RULES (STRICT):

– Return ONLY valid JSON. No markdown, no commentary.

– Do careful not to invent Wikidata QIDs. Rate your confidence 0.0 through 1.0 that the Wikidata QID you’re presenting is actually in Wikidata.

– Provide search_terms: short phrases we can use to query Wikidata for candidates.

TASK:

Given an optional label and optional context, infer the intended canonical concept.

INPUT:

Label (optional): corn

Context (optional): a cereal grain used for food and fodder

RETURN FORMAT (EXACT):

{

“canonical_label”: “…”,

“wikidata_iri”: “https://www.wikidata.org/entity/Q….”,

“search_terms”: [“…”, “…”],

“notes”: “…”,

“confidence”: 0.6

}

CONSTRAINTS:

– If you can confidently name the canonical concept, set canonical_label.

– If you can confidently provide the Wikidata entity IRI, set wikidata_iri, else “”.

– search_terms must be 1 to 3 short strings suitable for Wikidata search.

– notes must be one short sentence.

ChatGPT 5.2 returned the correct answer:

{

“canonical_label”: “maize”,

“wikidata_iri”: “https://www.wikidata.org/entity/Q11575“,

“search_terms”: [“maize”, “Zea mays”, “corn cereal grain”],

“notes”: “The context indicates corn in the botanical and agricultural sense, i.e., maize.”,

“confidence”: 0.85

}

Interestingly, Grok’s (through the x.com web page) QID was incorrect (it’s not Q11506, Denpasar … what?):

{

“canonical_label”: “maize”,

“wikidata_iri”: “https://www.wikidata.org/entity/Q11506“,

“search_terms”: [“maize”, “corn”, “Zea mays”],

“notes”: “The most specific Wikidata item for the cereal grain crop commonly called corn in American English.”,

“confidence”: 1.0

}

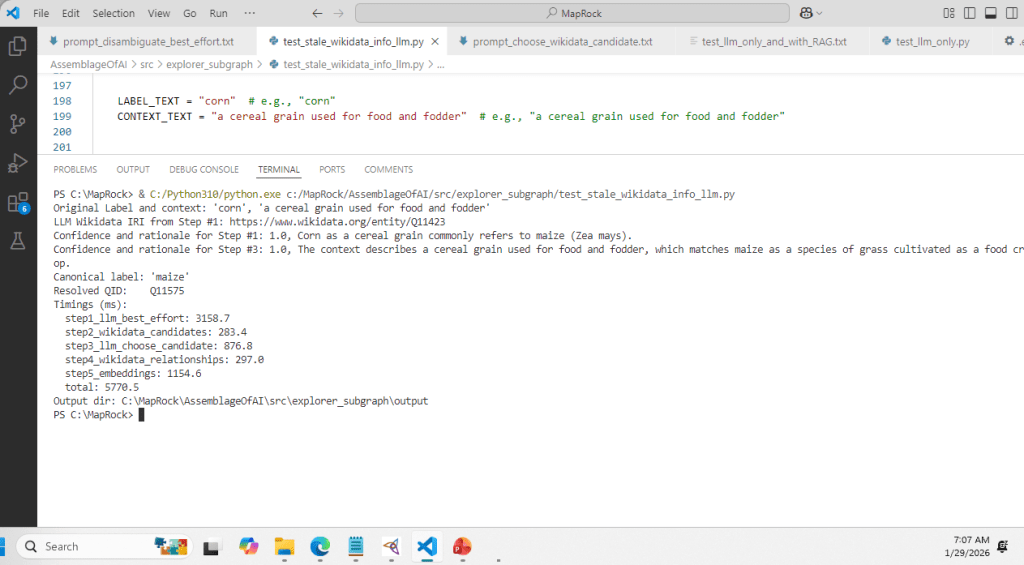

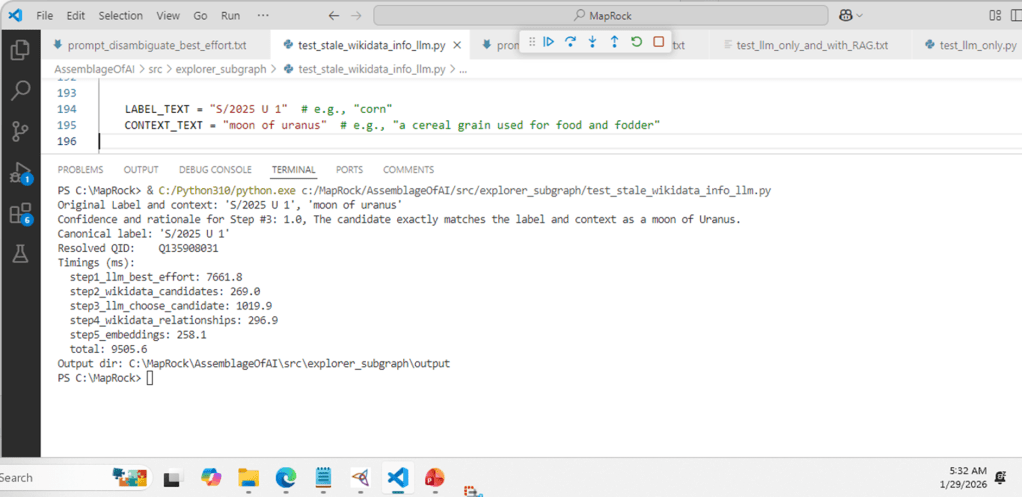

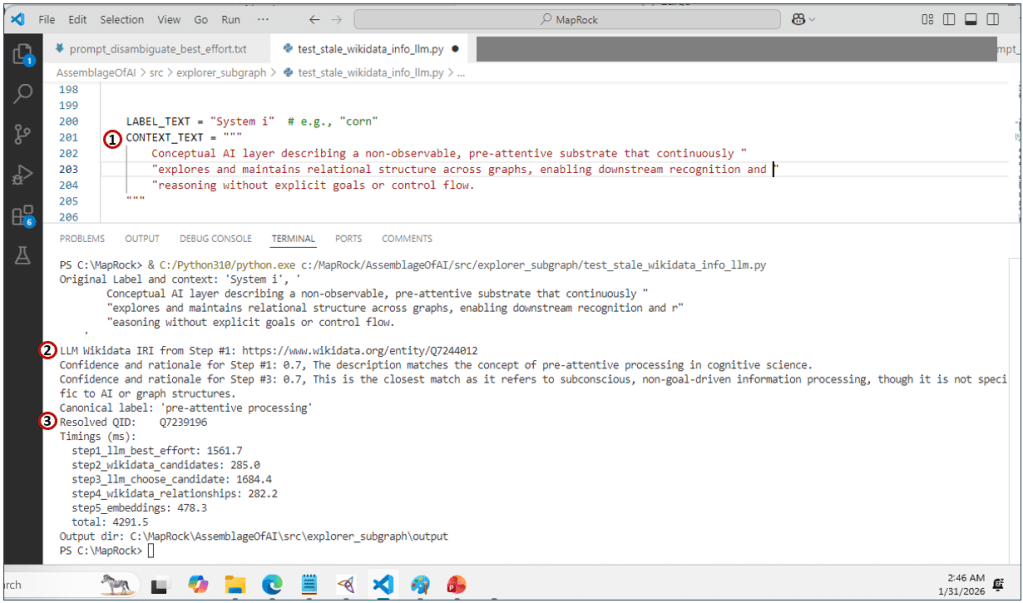

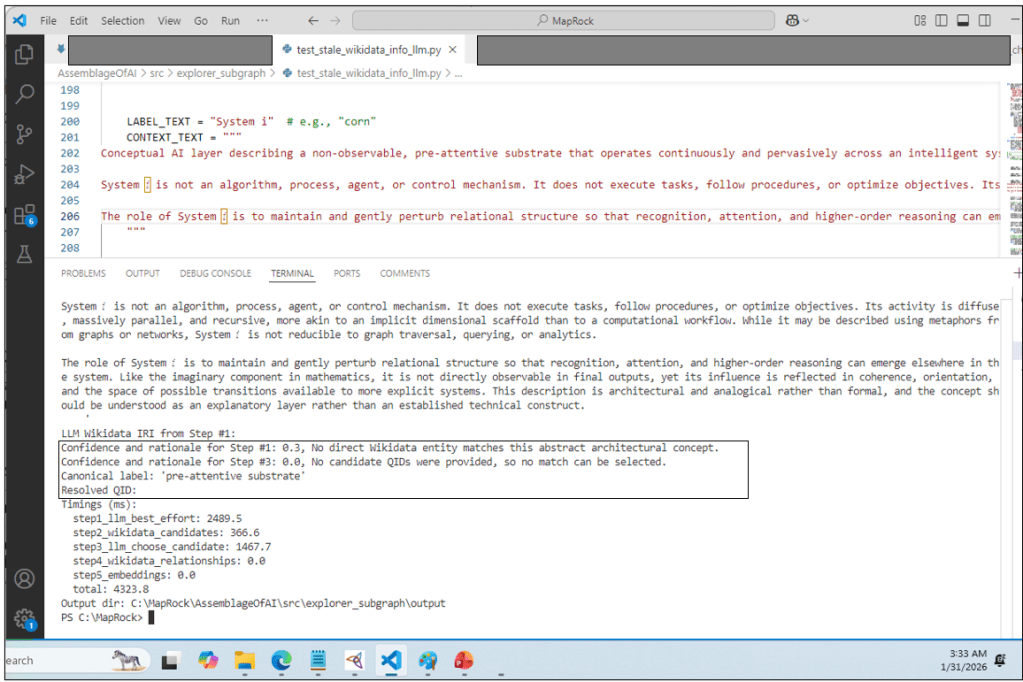

But note that through this program that connects to the gpt-4.1 model through the OpenAI python library, Step #1 returned the incorrect Wikidata IRI—Q11423, not event close to the correct answer that is Q11575 (maize). However, the subsequent steps resolved corn to maize correctly.

Figure 10 is the results of test_stale_wikidata_into_llm.py.

Because the API model is optimized for speed, determinism, and safety, it does not perform authoritative identifier lookup or verification, even if it recognizes the correct concept; instead it may guess or conflate nearby entities when asked to emit a specific QID. In contrast, the chat interface layers additional tooling, retrieval, and guardrails on top of the base model, which is why it appeared to “get it right” while the raw API model correctly avoided—or inconsistently approximated—authoritative disambiguation.

It would be great if the OpenAI API worked as well as its chat counterpart, ChatGPT. For something as simple as finding the Wikidata IRI for corn, the grain from which we make cattle fodder, bourbon, and ethanol, you would have thought that it should have taken only the call to the LLM. But no, at the time of writing, we’re not there yet.