Abstract

In The Assemblage of AI architecture, System 2 is modeled after the deliberate reasoning processes described in human cognition. But rather than focusing on psychology, this article examines what System 2 produces inside an AGI system. The outputs are not merely answers to questions but structured artifacts such as stories, plans, procedures, and models. These artifacts encode knowledge in reusable form and become building blocks for reasoning across the Enterprise Knowledge Graph.

Human intelligence rarely invents solutions from nothing. If we do, it’s one of those so-called “happy accidents” we’re astute enough to notice. Instead, we learn by recognizing patterns in the world and recombining them through analogy. Evolution works this way. Animals learn this way. Human culture works this way.

But humans do something unusual, beyond other critters. Our deliberate reasoning and organizing (System 2) produces artifacts—plans, strategies, playbooks, and models—that store and transmit knowledge we leverage across time.

These artifacts form the building blocks of our culture. We’re at the dawn of the post-LLM era of AI, where understanding the products of System 2 thinking becomes critical, because LLMs excel at pattern recognition but depend heavily on structured artifacts created by humans. In this blog, we’ll explore how evolution, mimicry, analogy, culture, and business intelligence (BI) systems reveal the same underlying pattern—and what that means for designing intelligent systems.

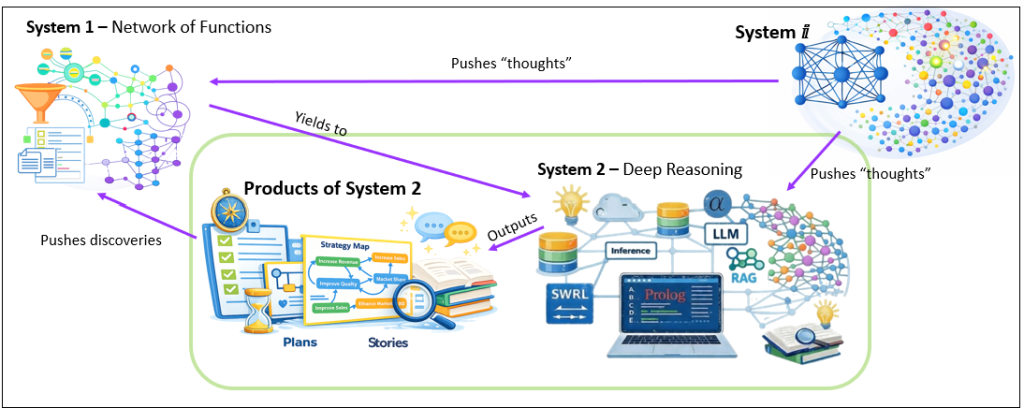

Figure 0 is a very high-level illustration of how the Systems interact. Please see, Probing the TCW for Chains of Strong Correlations, for a deeper version of Figure 0 along with explanation.

Preface

As ludicrous as it may seem, let’s entertain for a moment that humanity isn’t as brilliant as we think we are—at least not in the way we usually think about it. Meaning, the mind-boggling level of technology we have today seems to indicate that we must be brilliant—our ingenuity created it.

The myth says we invent from thin air—that genius is some kind of internal lightning strike. But when you watch how progress really happens (the stuff that happens before the curated white paper or Instagram posts), it’s usually messier and more ordinary. We notice patterns that are already out there—those half-mistaken “hey, this reminds me of that”—something in our heads clicks just right. It serves as an analogical base from which we morph it to our needs. Most “original” ideas are really morphing of an analogy—a shape borrowed from somewhere else, forced to fit a new problem until it snaps into place.

Biological evolution has left us a massive library of analogies from which we can choose and blend. It built the first workable solutions through blind, relentless copying at the gene level—physics setting hard limits, Earth’s topology shifting underfoot, DNA being only finicky enough to allow for the occasional screw-up turning out useful precisely because the environment wouldn’t sit still. In that world, occasional mistakes are by-design, not bugs. They were the variation engine. Nature didn’t design the eye, but rather, it stumbled toward it, kept what worked, and let what no longer worked fade to history.

Then higher animals show up and you get something new: mimicry. Mimicry is cheap compared to invention. If we think of the proverbial infinite number of monkeys typing for infinite time, War and Peace is to human intelligence as a coloring book is to mimicry. And mimicry is the basis of learning. You don’t have to rediscover hunting or knapping flint from first principles if you can copy the pack. You don’t have to reinvent survival if you can imitate the elder. A young animal learns by borrowing behavior that already survived the world at least once.

Humans took that same trick and took it to levels evolution never imagined. We don’t just mimic behaviors—we mimic structures, at least as a starting point:

- We watch birds fly and don’t merely flap our arms—we abstract lift and wings and airflow into something we can rebuild.

- We watch wolves hunt in packs and don’t just run together—we abstract roles and coordination and signal into tactics and organizations.

- We see an octopus disappear and we don’t just hide behind a rock—we abstract camouflage into materials, patterns, deception, stealth.

And the mechanism underneath that abstraction is analogy: pull a shape out of one domain, re-home it in another, and see if it holds.

Once a human analogy holds, it’s assimilated into our culture. The solution gets copied by others, written down, taught, ritualized, operationalized. It becomes a meme in the original sense—not “a joke on the internet”, but an idea that replicates if it’s born in the right place at the right time. It mutates as it spreads. Most mutations are noise; a few fit the new circumstances better than the old, and those stick. That’s why a good idea doesn’t arrive as a final form. It arrives as a rough draft that survives long enough to be refined.

And that brings me to The Intelligence of a Business. Since the days Bill Inmon and Ralph Kimball got BI off the ground a few decades ago, we have been building an intelligence of a business—often without admitting that we were modeling it after our own. No blasphemy intended, but we’ve been building analytics platforms in our own image (it’s the only example of true intelligence we have). We built memory (warehouses). We built attention (dashboards). We built language (metrics, dimensions, semantics). We built habits (reporting cycles). We built reflexes (alerts, thresholds, anomaly checks). All of it feels “obvious” now, which is exactly what culture does to successful inventions: it makes them look inevitable.

But there are deeper points hiding under all of this:

- Mimicry is sufficient for building a strong System 1—Fast pattern recognition, trained instincts, the ability to act without re-deriving the universe every morning. We notice a pattern that clicks for us and train ourselves to perfect it through many repetitions, just like we would by training a decision tree machine learning model. Sometimes we train ourselves to mimic the pattern with high fidelity, sometimes we allow the pattern to evolve to varying degrees into something else.

- System 2 is rarer in the animal kingdom, and in humans it’s highly developed—but it doesn’t float above the world as pure logic. It leans on the same foundation. System 2 still starts with analogy. It’s what lets you take what you already know, transplant it into a new situation, and then grind it into a solution that can be shared. System 1 fine-tunes skills, what we observed.

If the pattern we’d like to mimic is something well-defined that we wish to learn, this is like training a “narrow intelligence” machine learning model such as a convoluted neural network (CNN) that can recognize faces or a decision tree that helps decide whether to sell or buy a stock. If the pattern we notice reminds us of a problem we’re trying to solve, we know it’s not as simple as mimicking it. But we sense it’s a plausible starting point, an idea, from which we can adapt to our needs.

The difference between an ML model like a CNN (or decision tree, association, etc.) and an LLM is that the narrow focus of the CNN is about learning and mastering a skill over the course of many repetitions. Whereas the broad, deep but imprecise nature of LLMs enables us to think, to notice similarity between two disparate things and map the corresponding qualities in the hope they can provide insight towards generalizing deeper meaning. Both are equally important components of intelligence.

That’s what this blog is about. It’s not “how System 2 thinks”, but what it produces. The artifacts it leaves behind when the analogy hardens into something usable—recipes, plans, decision trees, strategies, checklists, playbooks, post-mortems. They are all products of System 2. In this blog, System 2 is in the context of AGI, but using the System 2 of our brains as an analogous starting point.

The Products of the System 2 of our brains are to human culture as every feature of every creature is to evolution’s library of living solutions.

So when we pat ourselves on the back for “creating”, most of it is really a sophisticated remixing of what we garnered from our senses. The genuinely novel stuff is the exception, not the rule. And if that’s roughly how intelligence actually operates—notice patterns → remix via analogy → plow the results back into the soil for the next round—then the way we build AI should perhaps mirror it.

Whether that’s a perfectly accurate picture of human cognition or not, it’s the AI working model I’ve been riffing on since about 2004. It’s still yielding insights and will break at many places, but that’s OK since analogies are meant to be broken.

This blog is a continuation of The Complex Game of Planning, but this is really a blog that reigns in the big concepts I’ve been laying out over the past few years, in particular the last few months. This blog is the beginning of the end of my virtual book, The Assemblage of AI, the “third act” (the closing arc in which prior forces converge into outcome).

In fact, the overarching concept of this work, the intelligence of a business, is indeed analogy. It’s an analogy that attempts to bridge the self-assembly of evolution and the purposefully constructed systems of human society.

Prerequisite Reading

In all of my blogs, I try to include as much background as possible in order to avoid extensive prerequisite reading. Of course, it’s not possible to compress what I’ve already written about without losing nuance. I also include as many links as possible, including an extensive glossary. Lastly, I’ve organized the blogs in my virtual book, The Assemblage of AI. So for you really adventurous and bored folks, that’s the reading order to get the best of what I’m presenting.

As the title of my previous blog, Interlude Before the Third Act of The Assemblage of AI, indicates, the shape of the next few blogs over the coming months is taking a different direction. Over the past few months, I’ve introduced concepts that led up to an integration, beginning with this blog. That is, the integration of the subjects of the blogs below.

At the least, please see the topic, Probing the TCW for Chains of Strong Correlations, from my recent blog, Explorer Subgraph. There is an illustration of the overall architecture, including how Systems ⅈ, 1, and 2 fit together.

This is an awful lot, so I’ve arranged this list in order of importance. I’d say that the first four might be enough:

- Long Live LLMs! The Central Knowledge System of Analogy: This defines the roles of LLMs in my framework. That role is of the know-it-all friend who knows a lot about a lot of things. This friend can also organize.

- Analogy and Curiosity-Driven Original Thinking: Original thinking arises not from isolated facts, but from curiosity-fueled analogy—stitching together procedural patterns across disparate domains like nature, history, and business to spark truly novel solutions that preserve human creative agency in an AI-augmented world.

- The Complex Game of Planning: Plans are about how to get from State A to State B (the intent). It’s usually not a single step, the map isn’t fully materialized, and the sequence of tasks often requires parallel threads. The Game of Dōh describes a methodology for building plans (one of the primary products of System 2) from strategy maps.

- Stories are the Transactional Unit of Human-Level Intelligence: Stories—compressed, breathing vessels of procedural knowledge, context, challenges, and lessons—serve as the true transactional unit of human-level intelligence, far surpassing static ontologies or statistical fragments by enabling parallel, abductive reasoning and strategic insight that current AI architectures still struggle to replicate.

- Beyond Ontologies: OODA Loop Knowledge Graph Structures: This offers a few examples of story formats.

- Conditional Trade-Off Graphs – Prolog in the LLM Era – AI 3rd Anniversary Special: Conditional Trade-Off Graphs revive Prolog and semantic networks as indispensable partners to LLMs—enabling deterministic, transparent reasoning over trade-offs and contextual exceptions in complex systems, where vibe-coded drafts meet auditable logic to forge truly hybrid, human-retaining intelligence in the AI era.

- Reptile Intelligence: An AI Summer for CEP: This is an explanation of System 1 in my framework. A web of functions (WoF), each fast, simple, highly-probable but not exact.

- Outside of the Box AI Reasoning with SVMs: The freedom to push the boundaries with some idea of how far we can go before things get scary. This prevents us from needing to be overly-cautious because we don’t know where the “red line” (a boundary we are not supposed to cross) is at. This is really Part 1 of Chains of Unstable Correlations.

- Thousands of Senses: True intelligence—whether human or enterprise—depends on “thousands of senses”: a rich, real-time flood of granular event streams from IoT, agents, and processes that far surpasses static KPIs or symbolic ontologies, enabling vivid, context-aware systems thinking and resilient decisions in adversarial, dynamic realities as explored in Time Molecules.

Notes and Disclaimers:

- This blog is an extension of my books, Time Molecules and Enterprise Intelligence. They are available on Amazon and Technics Publications. If you purchase either book (Time Molecules, Enterprise Intelligence) from the Technic Publication site, use the coupon code TP25 for a 25% discount off most items.

- Important: Please read my intent regarding my AI-related work and disclaimers. Especially, all the swimming outside of my lane … I’m just drawing analogies to inspire outside the bubble.

- Please read my note regarding how I use of the term, LLM. In a nutshell, ChatGPT, Grok, etc. are no longer just LLMs, but I’ll continue to use that term until a clear term emerges.

- This blog is another part of my NoLLM discussions—a discussion of a planning component, analogous to the Prefrontal Cortex. It is Chapter XII.1 of my virtual book, The Assemblage of AI. However, LLMs are still central as I explain in, Long Live LLMs! The Central Knowledge System of Analogy.

- There are a couple of busy diagrams. Remember you can click on them to see the image at full resolution.

- Data presented in this blog is fictional for demo purposes only. This blog is about a pattern, primarily the pattern of unstable relationships.

- Review how LLMs used in the enterprise should be implemented: Should we use a private LLM?

- This blog is heavy on LLM-generated content, mostly Prolog. Responses from LLMs will have a blue background.

- Supporting material and code for this blog can be found at its GitHub page.

- Prompts, LLM responses, and code in this blog are color-coded: grey, blue, and green, respectively.

Introduction

My conjecture is that innovation usually begins with analogy and continues through the re-shaping of that analogy through an iterative and recursive process of re-shaping more sub-analogies. Progress happens when a pattern from one system is successfully mapped onto another.

System 2 is the solutions architect of AGI, the part that excels at noticing complex patterns. System ⅈ is the proverbial guy who notices all the problems, but offers no solution. System 1 is operationally focused, it does the “real” work of recognizing what is happening in the world and executing sequences of learned actions. System 1 doesn’t sense nor invent—if it can be illustrated in a decision tree or manual, System 1 does it. But when something doesn’t go quite right, System 2 is called upon to architect a solution to get from the current undesired situation to the desired situation.

To illustrate the roles of Systems ⅈ, 1, and 2 we’ll first get System 1 out of the way by looking at it through an operational OODA process, viewed through the lenses of an enterprise and your doctor:

- Observe:

- Enterprise: System 1 takes in data from thousands of senses implemented in a complex event processing system. It recognizes many failure events queries across disparate parts of the enterprise that seem associated.

- Doctor: Gathers symptoms and lab results for a patient presenting with an illness.

- Orient:

- Enterprise: System 1 interprets the observed signals and compares them with prior incidents, known patterns, and operational context to understand what might be happening in the system. If this is an unknown issue, the proper analysts, SMEs, architects, and decision makers compose a plan.

- Doctor: The doctor interprets symptoms and test results in the context of medical knowledge, past experience, and patient history to form possible explanations. If there isn’t a strong match to the diagnosis, the doctor will consult with colleagues and/or specialists.

- Decide:

- Enterprise: Assuming an issue is identified, efforts are coordinated.

- Doctor: A treatment plan is selected, appropriate for the diagnosis.

- Act:

- Enterprise: The plan is executed.

- Doctor: Prescriptions are made and necessary procedures and/or follow-ups are ordered.

The operational OODA process could come to a halt at Step 2-Orient or Step 3-Decide. Because OODA is about operational execution, we need to recognize a situation and select the appropriate tactic which has been tested and for which we are trained. If either step fails, we can take a chance on a best guess (even though everything in a complex world is a best guess) or we can retreat and pass the problem to System 2 to formulate a solution.

The products of System 2 are cached reasoning and organization of clues (thoughts) and requests from System ⅈ and System 1. System 2 creates theories forged and hardened from analogies that make worthy hypotheses—a plausible path to take. Hypotheses are not actual working models (implemented theories), but they are the bright ideas uniquely capable of being generated by humans that separates us from other critters.

Where do these hypotheses come from? They are existing products of System 2—theories developed for past issues, encoded as states, plans, and stories. The library of encoded forms is the fountainhead for analogy. Hypotheses are the fun, sometimes easy part. But it’s followed by the not as fun part of forging and hardening them into tested theories. That’s System 2.

Hypotheses are worked into theories, which are stored, and in turn themselves become candidate hypotheses for future theories. A hypothesis is a candidate if it resembles the current state of a problem currently being addressed. In that case, we have an analogy lighting up a plausible path! System 2 hardens by testing out the hypothesis. Like it is in real life, failure constrains the solution space, sometimes full branches of exploration, and we can learn why.

Distant Analogies and Almost Identical Cases

Analogies come in every degree of distance, and that’s exactly what makes them powerful—and what vector spaces in LLMs capture so elegantly. At one end are tight, domain-specific matches: a SQL Server performance tuner instantly recognizes “buffer cache pressure” because they’ve seen the exact pattern (high page life expectancy drops, wait stats, etc.) dozens of times—it’s near-identical reuse, the closest point in embedding space.

At the other end are distant, cross-domain leaps: great white sharks and tigers are nowhere near phylogenetically related, yet both are apex predators optimized for ambush in their niches. Both are large creatures, with big, sharp teeth, and an array of keen sensors. An emergent property is they’re not often in danger from other creatures. What inferences could we make of a tiger from qualities of a great white shark?

Evolution repeatedly “rediscovers” similar functional solutions in unrelated lineages because the problems (energy-efficient predation in sparse-resource environments) converge on analogous structures. LLMs navigate this spectrum geometrically: cosine similarity can match near-identical problems tightly, while still surfacing looser structural analogs when prompted to think creatively (ex. “this enterprise bottleneck reminds me of predator-prey dynamics in ecosystems—where’s the equivalent of overgrazing urchins here?”). The vector space doesn’t care about biology or taxonomy; it cares about preserved relations. That’s why distant analogies can spark genuine insight in System 2, while close ones provide reliable, low-risk pattern application.

Genes, Memes, and Encoded Forms

Consider this ladder: evolution (genes)→culture (memes)→encoded forms

Evolution is the original R&D lab, a grunt-force lab of combinatorial explosion, stocking the planet with working solutions embedded in bodies and behavior. Human culture is what happens when we start copying, tweaking, and passing those solutions along as memes instead of genes. And the Products of System 2 are what make culture durable—plans, rules, playbooks, checklists, models, diagrams—the “carryable” forms of knowledge that can survive the mind that produced them.

I’m going to call these encoded forms. System 2 takes a messy stream of lived reality and compresses it into something stable enough to carry, share, and reuse. These encoded forms are not analogies in themselves. They begin life as mere stories (or plans, models, rules…). They only become analogies the moment one of them structurally resembles—or maps usefully onto—the situation we are facing right now.

The products of System 2 are plans, stories, and instructions that it reasoned through and organized to get from State A to State B. Getting from State A to State B is why we reason. It’s the problem we must solve. Once we’re convinced it’s a good thing to do, the question is then how. That question could require an answer as simple as a word or two to an entire treatise on how to resolve a problem. Here is a non-exhaustive list of encoded forms that System 2 can produce:

- Object: A distilled, often atomic or structured output of reasoning—ranging from a single label or value to a richly detailed JSON-like bundle of properties—that captures a resolved concept or entity for reuse.

- List: A reasoned, prioritized, or sequenced enumeration (e.g., a grocery list, checklist, or prioritized action items) that systematically organizes elements needed to solve a problem or achieve a goal.

- Assessment (of a situation): A reasoned inventory and interpretation of observed elements, relationships, and signals in a context—including flagged risks, opportunities, or anomalies—that provides a coherent snapshot beyond raw perception.

- Problem: A clearly articulated, reasoned definition of a gap, obstacle, or undesired state, framed as a mature narrative that specifies what needs resolution to move from current reality to a desired one.

- Plan: A forward-looking, reasoned blueprint that sequences actions, resources, contingencies, and milestones to bridge from a starting state to a targeted outcome.

- Story: A causally connected, temporally structured narrative that encodes procedural knowledge, context, challenges, decisions, and outcomes, making complex lessons portable and relatable across situations.

- Instructions: Step-by-step, reasoned directives that guide execution from initial conditions to completion, often including conditional branches, warnings, and rationale for each action.

- Recipe: A specialized set of instructions tailored to transform raw inputs (ingredients, components, or preconditions) into a desired output, typically sequential but potentially incorporating parallel or conditional processes.

- Design: A reasoned schematic or specification—whether physical, logical, architectural, or procedural—that defines structure, relationships, constraints, and intended function to enable reproducible creation or implementation.

Planning, organizing, and designing can all be generalized to a description of how we get from State A to State B. Not merely Point A to Point B, but situation A to situation B.

I think at the high levels, Stories are the Transactional Unit, as the base. All products of System 2 reasoning could be abstracted to stories. That is, we start from State A and wish to get to State B. When we read a story, a fictional novel, the State A is set up. We don’t know State B, that’s the fun of reading a novel, but it nonetheless exists. The fun isn’t so much in knowing State B, but in how we got there.

From a Problem and Clues to Hypothesis

When I began writing Enterprise Intelligence over three years ago, I mused about fine-tuning an LLM with the hundreds of pages of notes and blogs I currently had (page 19, My Research Assistant, ChatGPT). Although LLMs are more than noticeably smarter than three years later, I still struggle with LLMs providing any real insights. They still mostly force the square pegs I feed them into the round holes that they know.

It’s important to remember that the current state of AI is both impressively capable and frustratingly limited at the same time. It operates in a kind of cognitive uncanny valley. Dependency on AI systems is growing, by design, because the investment behind them aims to make them unprecedentedly useful across domains. And they are. But right at the frontier of creation—where new ontologies are being formed, where metaphors carry structural weight, where ideas are being fused rather than summarized—performance dips. That’s where the amalgamation of learned knowledge starts to blur intent rather than sharpen it, and where fluency can mask conceptual drift.

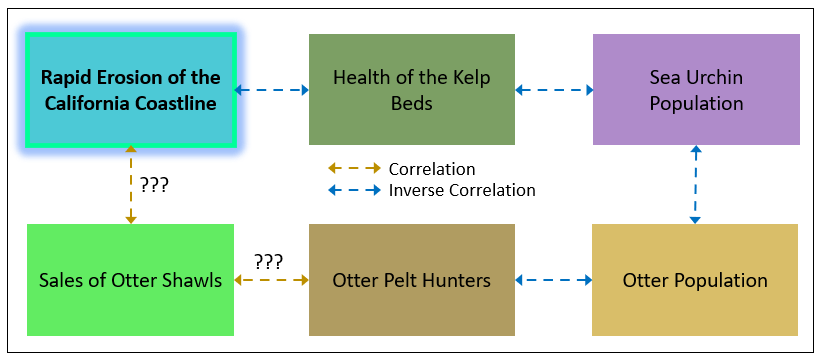

Figure 1 is an example of a story, a case study—in the form of a static picture. Every person in the world has thousands of stories to tell. In the same way that every feature of every creature is a solution to something, each story holds a potential insight towards finding a resolution to a problem. That is the idea of this blog.

Let’s take this story through a few forms as an example of a product of System 2. In a “temporary chat” (incognito), I pasted Figure 1 and asked ChatGPT:

Think of this figure as a model existing in my head. Translate it into words I would use to express this model currently in focus in my mind to my friend in a succinct, but sufficiently understandable way.

Prompt 1 – Translate the chain of relationships shown in Figure 1 into words.

Its response:

I’m thinking about the California coastline as part of an interconnected ecological and economic loop.

At the center of it is kelp bed health. When kelp forests are healthy, they help stabilize the coastline and reduce erosion. So kelp health and coastline erosion move in opposite directions — more kelp, less erosion.

Kelp health is also tightly linked to sea urchin populations. Urchins graze on kelp, so when urchin numbers go up, kelp declines — another inverse relationship.

Urchin numbers, in turn, are controlled by otter populations. Sea otters prey on urchins, so more otters means fewer urchins, which indirectly allows kelp to recover.

But otter populations are affected by otter pelt hunters — more hunting pressure reduces otter numbers.

Now bringing in the human market layer: sales of otter shawls likely drive (or at least correlate with) the number of pelt hunters — demand incentivizes hunting.

And there’s also a suspected link between shawl sales and coastline erosion — not direct, but mediated through the whole chain: fashion demand → hunting → fewer otters → more urchins → less kelp → more erosion.

So overall, it’s a cascading system where consumer behavior, predator–prey dynamics, and habitat structure all feed into the physical stability of the California coast.

Response 1 – Translated snapshot in Figure 1 into words by ChatGPT.

In a new incognito window (no awareness of prior chats or even who I am), I asked ChatGPT:

Please create Prolog that expresses this: <the response ChatGPT provided in Response 1>

Prompt 2 – Translate story of erosion to Prolog.

For this basic example, we’ll simplify System 2 down to an LLM system (ex. ChatGPT, Grok). Today’s LLM systems are pretty smart, very good coders. With implementations of features such as RAG, chain of thought, mixture of experts, ReAct, etc., they are fairly formidable reasoners in themselves. However, to be clear, I not saying LLMs alone are the System 2 process, which would invalidate my whole The Assemblage of AI theme. But they are a primary pillar of it, as I discuss in Long Live LLMs.

Its full response to Prompt 2 is in english_to_prolog.pl

We now have a visual (Figure 1), story (Response 1), and Prolog version of the same set of relationships. The story version enables us to enrich or modify what is in the visual by loosening the confines of the picture. For example, we can add rules to the story that aren’t expressed in Figure 1:

- Perhaps dams upstream from rivers are preventing replenishing of sand of the beach. Are there dams?

- The hunting of otters might already be illegal, so this isn’t the problem, just an unfortunate correlation that points to a wrong causation.

The generated Prolog should include rules that reflect those items.

The Prolog version makes the story concrete by minimizing the ambiguity inherent of our spoken languages—which is by design. Language is meant to communicate in the messy real world where versatility of communication is essential, whereas code (Prolog is code) is highly specific, which is ideal for well-defined, mechanistic situations.

Let’s take it up to another level of specificity. The Prolog includes a list of entities as shown in Code 1:

entity(kelp_bed_health).

entity(coastline_erosion).

entity(sea_urchin_population).

entity(sea_otter_population).

entity(otter_pelt_hunting_pressure).

entity(otter_shawl_sales).

Code 1 – Entities defined by the Prolog created from Prompt 2.

We’ll use ChatGPT to find what are called International Resource Identifiers (IRI). A major source is Wikidata.org. Please see, Wikidata as a Practical Entry Point to the Semantic Web, a topic within a previous blog.

From that Prolog you created, which as a whole represents the context, create a table of objects matched to a the most appropriate, wikidata.org object, along with your confidence of the match, the QID, and the QID label.

Prompt 3 – Match the entities in the Prolog to IRIs, in this case, Wikidata QIDs

Note: Remember that ChatGPT differs from GPT through an API. The former is immersed within a system. See The LLM API vs. the Chat Window.

Response 2 is the response from ChatGPT to Prompt 3. For the entities in our otter example, ChatGPT matched them to a globally recognized identifier.

| Prolog object | QID | QID label | Confidence |

|---|---|---|---|

kelp_bed_health | Q575913 | kelp forest | 0.78 |

coastline_erosion | Q1542312 | coastal erosion | 0.95 |

sea_urchin_population | Q83483 | Echinoidea | 0.70 |

sea_otter_population | Q41407 | sea otter | 0.97 |

otter_pelt_hunting_pressure | Q36963 | hunting | 0.62 |

otter_shawl_sales | Q878138 | fur trade | 0.45 |

Most entities have fairly high confidence, but note that otter shawl sales has a QID, but the confidence is only 0.45. There isn’t a QID for otter shawl sales, so ChatGPT thought fur trade was the closest. That is fairly good, but there is something closer: Maritime Fur Trade.

If we replace the symbols in Code 1 with the QIDs, we have a semantically connected Prolog, as shown in Code 2:

entity(wd:Q575913). % kelp_bed_health → kelp forest

entity(wd:Q1542312). % coastline_erosion → coastal erosion

entity(wd:Q83483). % sea_urchin_population → Echinoidea (sea urchins)

entity(wd:Q41407). % sea_otter_population → sea otter

entity(wd:Q36963). % otter_pelt_hunting_pressure → hunting

entity(wd:Q878138). % otter_shawl_sales → fur trade

Code 2 – Symbols replaced with IRI.

The simple example we just covered is an expression of the basic idea how System 2 takes a thought (the picture of Figure 1) and transforms it into a semantically connected product (the Prolog with symbols replated with an IRI).

System 2 Artifacts and Encoding

The products developed by System 2, at the highest level, consist of:

- States: States are snapshots, collections of items. For example, a market basket, a set of lab results indicating health or the presence of a disease, the point indicating a smiling or frowning face, or the conditions for a hurricane. This is the simplest product of System 2. It’s essentially a machine learning model which could be created by a human data scientist or an automated process (ex. AutoML, the capability of Azure Machine Learning that automatically tries algorithms + preprocessing + hyperparameter tuning on your dataset and picks the best model). This type of output of System 2 becomes incorporated into System 1.

- Plans: Plans are the instructions for taking us from State A to State B. For example, the plan to build a tunnel that substantially cuts commute congestion and time. Plans could be specific (ex. the plan to build the Likelike tunnel) or abstract (ex. treatment plan for addressing pancreatic cancer). Plans involve decision points (more so with abstract plans), sub-plans, and variable dependencies (ex. local laws).

- Stories: Stories are the sequence of events that led from a situation (“Once upon a time …”) to a conclusion (“… and they lived happily ever after …”). Stories are a recollection of events after the fact. They can be the trace of events during the execution of a plan, the case notes of a customer support worker or police officer, or an organization of a jumble of facts into a plausible hypothesis or even a theory of what happened. Note that data science experiment notebooks (Jupyter and Databricks) tell a story too.

System 2 employs multiple procedures and tools to organize a bag of facts into plausible hypotheses and theories. As mentioned earlier, at the center of System 2 are LLMs augmented with processes involving the likes of RAG, chain-of-thought, mixture of experts, ReAct, etc. System 2 can also include Prolog (heavier Prolog than what we created as a product of System 2 above), the master of deduction, and reasoning within a knowledge graph of ontologies using SWRL. I explore System 2 combing LLMs, Prolog, and knowledge graphs to deeper depths in Thinking Reliably and Creatively.

A snapshot is an encoded state. A story is an encoded sequence of states (a trace). A plan is an encoded generator of traces. And a Markov model is an encoded aggregate of many traces—what the system tends to do, on average, when it runs.

In the context of this blog, I see LLMs playing two roles in System 2. That’s consistent with my association of LLMs with System 2 in a previous blog.

The first is obviously as what is today a formidable “reasoner” (some say, “rationalizer”). LLMs appear to be good at reasoning partly because they are very good at recognizing and applying analogies—because of the way their internal representations organize language into patterns of relationships rather than just words. Even when two passages appear unrelated, those shared linguistic structures create a kind of baseline similarity in embedding space. Later I’ll return to how those same structures also make analogical reasoning possible.

LLMs are, at their core, engines of analogy. Really, it’s baked into their architecture. Every token you feed an LLM gets projected into a high-dimensional vector space where meaning isn’t stored as discrete facts but as geometric relationships. Words, phrases, even entire concepts that share similar roles or structures end up close together in this space; the famous toy example is that the vector difference between “king” and “man” roughly equals the difference between “queen” and “woman,” so simple arithmetic uncovers the analogy.

More profoundly, the entire transformer (attention layers, feed-forwards) computes weighted similarities across contexts, effectively asking: “What past patterns are most analogous to this one right now?” Retrieval in RAG pulls the nearest analogs; chain-of-thought chains them step-by-step. LLMs don’t reason from first principles—they surf a vast sea of human-encoded analogies, interpolating new ones by remixing the old. That’s why they organize so fluidly when prompted well, and why they rationalize outputs by surfacing the closest matching precedents. In the intelligence of a business, this makes them an unparalleled accelerator for System 1 pattern-matching, feeding raw analogical insights into System 2 for hardening into durable encoded forms.

Second, LLMs play the role of serializer/deserializer of very flexible communication between components—in this framework, a relatively expensive and highly utilized function. LLMs are not as rigid as APIs and not as prone to error and constrained by the slowness of manual efforts of people. For a human, LLMs translate a conceptual model in a person’s head into words which they speak or write down. And it translates words they hear or read into a conceptual model in their head (deserializes text to thought).

I wrote in Enterprise Intelligence, Prologue, page 2:

LLMs integrate knowledge from our collective writings into yet another encoded format. A LLM is a reduction of terabytes of writings into a massively dimensional set of vectors. The high-dimensional vector format of LLMs resembles the synapse network of our brains more than our synapse network resembles our written text.

In the context of the intelligence of a business framework, it translates words into encoded forms—Prolog, RDF/OWL, ML models (pickle files), and even functions written in a programming language such as Python and C#, which could be deployed onto a scalable platform such as Azure Functions.

LLMs as Translators

A high-end translator of human-to-human conversation isn’t only fluid with two or more languages, but is educated on the cultures of the primary speakers of the language. For example, English from the USA and Japanese speakers from Japan. The American and Japanese cultures are widely distinct. There is more to translation than just mapping words and organizing them syntactically. The intelligence required to apply cultural nuance raises a translator to a higher level.

Similarly, in the ETL (Extract, Transform, Load) processes of BI, there were traditionally the drag-and-drop UIs (starting with SSIS back in the early 2000s) that automated much of the human-driven crafting of pipelines. It was that last 10% of nuance that required human intelligence to fill in what was really 80% intellectual effort. In analogy terms:

ETL drag and drop tools are to human expertise and versatility in crafting ETL pipelines as mapping words and syntactic organization are to the cultural awareness of a translator in the language-to-language translation of human conversation.

In the intelligence of a business framework, that applies to the second of the two roles of LLMs I just mentioned: LLMs play the role of serializer/deserializer of very flexible communication between components. LLMs accept fuzzy input and output a robustly crafted response.

I don’t see serialization as just a way to transmit from human to human even though AI agents technically don’t need it. The role it still plays is that it naturally forces coherence. An incoherent communication from one human to another is worthless. The coherence doesn’t need to be perfect. Humans can infer some things based on their overall knowledge, knowledge of the current domain and even down the conversation level.

At the time of writing, today’s LLM system still loses the nuanced meaning of text I write, filled with novel notions, that I ask it to tidy up. I usually start my day with a brain dump of ideas that coalesced in my head over a good night’s sleep (well, good for me, anyway) into notepad, with no worrying about formatting or AI helpers to interrupt what I’m trying to convey. Of course, it’s messy, but I review to ensure it’s coherent and reflects what I intended to say. After a few mornings, I have the raw material for a new blog. A few thousand words.

I pass the notes in their entirety to ChatGPT requesting that it tell me my intent. It’s usually frustratingly off. Although LLMs are noticeably better today than they were even a few months ago, they can still be a little, “Hello … McFly …”, when presented with ideas off the beaten path, as we’ll see later in the 7-11 in Japan is the Opposite of ___ in Texas exercise..

The Products of System 2

System 2 is about thinking, in comparison to System 1 being about doing. System 2 is like the catch-all exception handler after System 1 tries to handle a problem, as illustrated in the code, llm_exception_handler.py.

For example, if we’re hiking through a meadow and see a big brown blob on the other side. System 1 first triggers the “stop in your tracks” reaction and attempts to identify the object. Bear? Tree stump? Brown boulder? Exhausting its known possibilities, it yields to System 2 to ad-hoc figure it out.

System 2 must generate a plan to identify the object or at least reduce the likelihood of danger. The plan is not random movement or speculation. It is a deliberate sequence designed to gather discriminating evidence. But before organizing a plan, System 2 must have a conceptual view of what is out there and how those objects relate to each other. What is the primary goal? What do I have to work with? What are the risks? What are the constraints?

In the case of the large brown blob in the meadow, System 2 might formulate a plan that goes something like this:

- Increase resolution: move closer, but cautiously.

- Change angle: alter perspective to reveal depth and contours.

- Observe over time: look for motion, breathing, or shifting shadows.

- Gather environmental signals: tracks, scent, disturbed grass.

- Re-evaluate hypotheses after each new observation.

Each step is chosen because it maximally separates competing explanations. A bear will eventually move. A stump will reveal grain and roots. A boulder will exhibit consistent geometry from multiple angles.

System 2 is therefore not simply “thinking harder”. It is designing an experiment. It is constructing a temporary decision tree, selecting the next action based on expected information gain. The output is a structured sequence—a plan—that narrows ambiguity through intentional interaction with the environment.

When the ambiguity is resolved, the plan and its outcome become an artifact. The composed procedure detailing how uncertainty was reduced can later be encoded, generalized, and potentially integrated into System 1 as a faster recognition pattern.

In this way, System 2 acts as an architect of new operational capability. It builds what System 1 will later execute reflexively. Besides the plan System 2 devised, it also “recorded” the story. That interesting story will be told in the saloons across the land, probably helping someone else.

The products of System 2 are mostly integrated into the System 1 structure. Again, System 1 is operational, so it’s kind of like System 2 is a development team building software for the enterprise, and System 1 is the product deployed into production.

To recap:

- System 1 attempts recognition. It asks, “What is it?”

- System 2 designs experiments. It asks, “How do I find out?”

System 2’s output is more than a decision. It produces these artifacts that benefit future encounters for the hiker and people he might encounter:

- A strategy (a web of relationships between the objects and concepts in play)

- A plan (sequence of tasks, decision points)

- A trace or story (a log of what action was taken at each step and the change of state due to the action)

- Possibly a new System 1 function that defines a state: “large brown shapes in meadow at dusk tend to be boulders in this region”.

States and Functions

States are the easiest to deal with since states are like recognitions of single objects, checklists of qualities—checklists with a helping of IF-THEN. In contrast, stories and plans are complicated compounds, made up of sequences and rules. Objects and states are transient—meaning they come and go, sometimes within timeframes that are barely perceptible (ex. the ever-changing states of a basketball game), fleeting (no emails), to minutes (ex. dinner at a restaurant).

Examples of states:

- The recognition of seeing a person and their apparent mood.

- The state of the game table of a Texas Hold’em match. I describe the process of defining of table states with a cluster algorithm in Time Molecules, “Synthetic Events from Machine Learning”, page 101.

- A scene from a photo—ex. a family picnic, an alpine lake,

- We’re at a tipping point. For example, the instant you sense your cat has had enough petting, or that instant in judo where you sense your opponent is off balance and you should execute your throw (kuzushi).

Levels of Recognition and Compute Speed

In Levels of Intelligence, I describe four levels of intelligence:

- Simple Recognition: Fast, reflexive, push-button reactions to immediate triggers with minimal processing (ex. a paramecium reversing direction from harmful chemicals or a fly reacting to a shadow). False negatives are costly, but false positives are acceptable. In Prolog, it’s direct mappings like: escape_fly(Trigger) :- Trigger = shadow. This is the primal, survival-oriented base level of System 1-like intelligence.

- Robust Recognition: Networks multiple factors into multivariable rules to handle variability and context while reducing false positives (ex. a rabbit identifying a fox from combined features like sharp teeth and bushy tail). It composes complex logic from simpler rules, often mirrored in ML models or deterministic code. This level advances intelligence by reliably recognizing patterns across diverse inputs.

- Iterative Recognition: Handles imperfect or incomplete information through hypothesis testing, refinement, and experimentation (ex. a doctor iteratively mapping symptoms to diagnoses via tests/questions, or a reptile testing partial views of prey). Prolog recursion supports this validation loop. It elevates intelligence by proactively filling knowledge gaps, bridging toward deliberate System 2-style reasoning.

- Decoupled Recognition and Action: Separates perception/identification from decision-making, allowing evaluation of consequences and strategic choice before acting (ex. recognizing a bear but deciding on whether to flee, stand ground, or stay calm based on context like being armed or unarmed). Prolog encodes this as conditional rules weighing multiple factors. This represents the highest level of intelligence—symbolic, reflective, and strategic—integrating the prior levels and enabling human-like planning in frameworks like Enterprise Intelligence.

These levels form a progressive hierarchy. Each adds a superpower, building on the previous, moving from automatic reflexes → reliable pattern-matching → adaptive hypothesis-testing → thoughtful, consequence-aware action. The post uses Prolog examples to show how symbolic logic can implement and extend these capabilities beyond pure statistical LLMs.

Recognition can happen in System 1 or System 2, the difference being that System 1 recognitions are simpler and near instant, and System 2 probably involves a multi-step process and will most likely take longer to execute). System 1 models will mostly be ML models which accept a set of parameters, run it through a series of simple computations and output a value.

Simple Prolog could be System 1 rules. They could accept a package of facts and custom rules as a parameter, execute a Prolog query, and return a result.

Here are two pairs of characteristics about System 1 functions that sound like Clark Griswold stating his crunch enhancer was semi-permeable but not osmotic (Christmas Vacation). But there is a difference between the words of each respective pair.:

- System 1 functions are probabilistic but also deterministic: System 1 functions may produce probabilistic outputs, but their execution is deterministic. The same inputs produce the same probability. If a function is expected to return a quick, rote, hasty answer, it’s possible that it might be more confident about some answers than others.

- System 1 functions are distributed but not asynchronous: There can be thousands, even many more, System 1 functions. They should be widely distributed across a cluster of servers (such as in Azure Functions of AWS Lambda Functions). If a function must pause to query external data or wait on another process, the computation shifts into System 2.

System 1 is deterministic, low-latency, and has no external dependency. Table 1 summarizes the items above:

| Pair | Relationship |

|---|---|

| probabilistic vs deterministic | execution vs meaning of output |

| distributed vs asynchronous | architecture vs execution timing |

| semi-permeable vs osmotic | structure vs process |

Note: Clark Griswold’s statement is intended to be paradoxical nonsense, but it can be true. His crunch enhancer is semi-permeable, but because it’s not osmotic, presumably it doesn’t allow liquids into the cereal, there’s no value to the semi-permeable quality. Maybe it allows certain gasses in.

Strategy Maps

The prominent example of state in the framework of the intelligence of a business is a snapshot of KPI statuses throughout the enterprise. This is the same as being in the hospital hooked up to all sorts of devices recording what’s going on, and taking the concurrent readings (all values at the same time).

Strategy maps are relationships between KPIs. Each node is a KPI, and the relationships hold a few major properties:

- The relationship between the status values of the KPIs. This is a correlation value that measures how strongly the KPI statuses go up and down together or how strongly one goes up and the other goes down (inverse correlation).

- A label of the intent of the relationship. For example, between customer satisfaction and return visits, the intent is to retain customers.

- Some relationships represent a fear (a risk). For example, excessive customer complaints led to bad press.

Snapshots of the strategy map are the elements of sequences in the Game of Dōh (a System 2 process), which I discuss in The Complex Game of Planning. I also discuss strategy maps in:

- Revisiting Strategy Maps: Bridging Graph Technology, Machine Learning and AI

- Prolog Strategy Map – Prolog in the LLM Era – Holiday Season Special

- Beyond Ontologies: OODA Loop Knowledge Graph Structures

- Bridging Analytics and Performance Management

Stories and Time Molecules

Although I see System 1 as mostly a web of functions (where the output of a function is the input to other functions), System 1 also includes linked Markov models from a Time Solution, which is the product of my book, Time Molecules.

As a reminder, stories are a sequence of events. Most stories are the output of systems that are implemented in the real world from plans. If the stories can be matched to the systems that emitted the event types of the story, the story is a case of the system.

Time Molecules is all about stories. Everything we sense, across all people, across all the machines that perform services for us, emit countless events that track what is going on internally and what is expressed externally. Saying we capture only a small fraction of the events is an understatement like 1000010000 is a big number. From all these events we pluck out sets of events from which we can compose stories about what happened—which is process mining.

We could hardly even capture the events in our own house. Every prepared meal, every visit to the restroom, every crisis, attending to every chore, every ritual before and after sleep, even every sleep is a story. Each story is a case or cycle of a process—for example our morning ritual preparing us for the day.

The subtitle of Time Molecules is: “The BI Side of Process Mining and Systems Thinking“. In one sentence, process mining is about organizing the countless events of countless types into stories. It’s not an easy process, but it does lend itself to automated help. It reminds me of Master Data Management, a process that was extremely tedious, but progressively improved with help of machine learning—especially with the arrival of LLMs.

Similarly, process mining is assisted by machine learning, and LLMs are pretty good at taking stabs at organizing a jumble of events into stories. Fortunately, in an enterprise, we always know where captured events come from—software system, customer or vendor or partner, IoT device, AI agent, etc. Events usually include attributes such as an identifier for an online customer browsing a web site. So pulling out sequences—stories—of the activity of customers to a web site isn’t hard. Like most things, some are easier than others.

For plucking out the tough stories lost in the muck—those with hardly any clues, the 1% that take up 99.9% of the effort—that can be a background process of System ⅈ running an algorithm that is basically a variation of constrained trial and error. It’s the MDM equivalent of mapping all the John Smiths (or Zhang Wei—SuperGrok says there are more) of the world across dozens of software systems. BTW, that’s a pretty good analogy.

To recap, within a sea of events from across many streams, are sequences of events. Think of it as the wide variety of molecules (from as simple as O2 to proteins, to DNA) floating in the sea. Each sequence of events that we identify tells a story. Some of those stories are similar, perhaps some including or missing one or two odd events, a few events in a different order, or some taking a longer or shorter time between events.

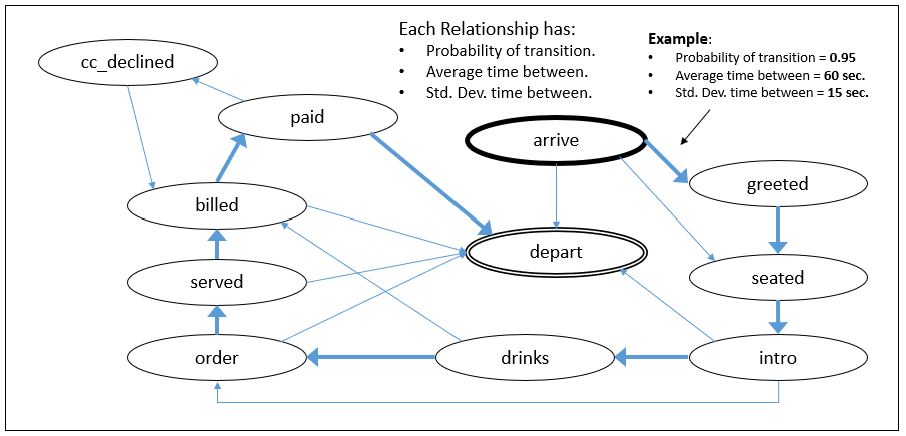

If we aggregate those similar stories, we will have an “average” of the sequences—a Markov model (“Markov chain” is proper, but it’s not a chain, more like a web). It is the average of the cycles of the process which produced the events. This is what I call time molecules, especially when related Markov models that reflect related processes are linked. These Markov models are analogy candidates when envisioning a new process.

A system can output variations of stories because it can take different paths depending on context, characteristics of the involved objects, exceptions (something wrong happens), etc. By combining stories from the same system, we create a model that tells us the probability of an event following an event.

Beginning with Arrive, the thick blue lines trace the most prominent story.

Map Story Components to Markov Models

Conversations that go on in applications such as Jira, Slack, ServiceNow, and Teams tell a story. For example, the story of how a highly-valued customer was prevented from leaving for another vendor. Those stories are mostly in text. However, if we could parse the stories into events and map the events to those in a Markov model, they could automatically add to the knowledge.

Here are the events types involved in a break-fix incident. That includes a trip to the doctor, a broke-down software, a plumbing problem:

- chief_complaint: Identify the chief complant, the main problem.

- backgound: Gather background of the what led the customer to the expert.

- diagnosis: Express to the customer your understanding of the problem. This is the lead hypothesis based on chief complaint and background.

- setup_sensors: Set up the data gathering devices.

- collect_data: Collect data from the sensors.

- analyze_data: Analyze the data from the sensors.

- treatment_plan: Based on background information and sensor data, select a course of action and present it to the customer.

- estimate_cost: Estimate the cost of executing the treatment plan.

- present_treatment_cost: Present the treatment plan and estimated cost to the customer.

- customer_rejects_treatment: The customer rejects the treatment plan and/or the cost.

- customer_accepts_treatment: The customer approved the treatment plan.

- execute_treatment: Execute the steps based on the treatment plan.

- test_treatment_application: Test that the treatment resolved the problem.

- prepare_report: Prepare a report on what transpired during this incident.

- present_report: Present the report. it could be in a meeting or just submitting the report.

- customer_accept_outcome: Customer accepts the outcome of the treatment.

- customer_rejects_outcome: Customer rejects the outcome of the treatment.

- bill_submitted: We bill the customer for the resolution.

- customer_paid: The customer has paid the bill.

See, the full event set in the GitHub repository.

Time Molecules, Sequence and Ordinal Statistics Columns, page 130.

We’ll use SuperGrok to demonstrate the idea. Prompt 4 presents a text story, a case study by a plumber on a customer visit. Prompt 4 specifies two files that are on the GitHub repository:

- The full text of the plumber’s case notes.

- A document describing the categories of event type. The purpose of Prompt 4 is to categorize the case to the closest case type among the categories:

- Troubleshooting Service: Reactive, incident-based troubleshooting and repair services performed by skilled experts to diagnose and resolve unexpected problems

- Routine Service: Proactive, recurring, or scheduled routine service delivery by trained providers for expected, non-emergency tasks

Please read this file, a log of events: https://github.com/MapRock/assemblage-of-artificial-intelligence/blob/main/src/products_of_system_2/plumber_residential_call.md

From these descriptions of event sets, https://github.com/MapRock/assemblage-of-artificial-intelligence/blob/main/src/products_of_system_2/event_sets.md

please classify the log of events to an event set.

Please provide a 0-1 score and a summary of your reasoning.

Prompt 4 – Classifying a case study into a general type.

Response 4 is the answer from SuperGrok. It correctly and very confidently (0.98 score) classified it as a Troubleshooting Service.

Classification: Troubleshooting Service Event Set Confidence score: 0.98 (very high match, minor room for edge-case interpretation only because the log is plumber-specific rather than a generic template)

Summary of reasoning:

The provided log is a complete, real-world residential plumber service call that began with an unexpected customer-reported problem (lukewarm hot water + reduced pressure in the upstairs shower after heavy weekend use).

Response 4 – The generalized story type.

Now that we know we’re dealing with a troubleshooting service, we’ll ask an LLM to derive the events for a troubleshooting service from the plumber’s case log.

Prompt 5 is the to SuperGrok. The prompt references two files, again, both on the GitHub repository:

- Event types for a troubleshooting service.

- The full text of his case notes. Same file as for Prompt 4 above.

Read these two files. The first is a CSV, the second is text of an incident service log:

EVENT_TYPES_URL = “https://raw.githubusercontent.com/MapRock/assemblage-of-artificial-intelligence/main/src/products_of_system_2/troubleshooting_service_event_types.csv”

NOTEBOOK_URL = “https://raw.githubusercontent.com/MapRock/assemblage-of-artificial-intelligence/main/src/products_of_system_2/plumber_residential_call.md”

The events in EVENT_TYPES_URL are in what is the average ordinal value. Meaning, an event types are not always in the listed order, but that’s the usual order of events. Parse out NOTEBOOK_URL. Each notebook entry has a date for a header. For each of the notebook log entries, match the description to the closest event type. Output a table of the date of the entry, the event type, and the description of the entry.

Prompt 5 – Deriving event from a story to a set of event types.

Here are the results, which are surprisingly good.

| Date | Event Type | Description |

|---|---|---|

| 03-10-2026 — 09:15 AM | chief_complaint | Received a call this morning from the homeowner about the upstairs shower. They said the hot water has been coming out only lukewarm for the past few days, and the pressure seems weaker than usual. According to them the problem seemed to start sometime after they had several guests staying over the weekend and everyone was using the bathrooms more than normal. The customer sounded concerned that the water heater might be failing or that something was wrong with the shower valve. Scheduled a visit for tomorrow afternoon to take a closer look. |

| 03-11-2026 — 01:05 PM | diagnosis | Arrived at the residence and spoke again with the homeowner in person. They repeated that the shower water never really gets hot anymore and the stream coming from the shower head seems noticeably weaker. They mentioned that the kitchen sink and bathroom sink still get hot water eventually, but the shower takes longer and never reaches the same temperature. I told them my first thought is that it could be a partially clogged shower cartridge, mineral buildup in the head, or possibly an issue with the mixing valve restricting the hot side. Let them know I would start by measuring water temperature and pressure at several fixtures to narrow it down. |

| 03-11-2026 — 01:30 PM | setup_sensors | Set up a few basic checks before taking anything apart. Connected a small pressure gauge to the shower arm and used a thermometer to see what temperature the hot line was actually reaching. Ran the shower for several minutes while watching the gauge and checking the temperature of the hot supply line coming from the heater. Took notes on the pressure readings and also checked the kitchen faucet to compare the hot water temperature there. |

| 03-11-2026 — 02:05 PM | analyze_data | Collected the readings from the tests. The pressure at the shower is noticeably lower than at the nearby sink, which suggests something is restricting flow specifically in the shower assembly. The water heater itself appears to be delivering hotter water than what is reaching the shower. After looking at the numbers and feeling the supply pipes, it seems likely the mixing cartridge in the shower valve is partially clogged with mineral deposits, which would both reduce pressure and limit how much hot water can pass through. |

| 03-11-2026 — 02:30 PM | present_treatment_cost | Explained my findings to the homeowner. Told them the most probable fix would be removing the shower handle and replacing or cleaning the mixing cartridge, and while I’m there I’d also inspect the shower head for buildup. Mentioned that if the cartridge is heavily scaled it’s usually better to replace it entirely rather than clean it. Provided an estimate for the parts and labor to replace the cartridge and reassemble the valve. |

| 03-11-2026 — 02:50 PM | customer_accepts_treatment | Customer reviewed the estimate and asked a few questions about whether the water heater itself might still be involved. After explaining that the heater is producing adequate temperature and that the restriction appears localized to the shower valve, they agreed to proceed with replacing the cartridge. |

| 03-11-2026 — 03:10 PM | execute_treatment | Shut off the water supply and removed the shower handle and trim plate. Pulled out the existing cartridge and found significant mineral buildup around the internal ports, which would definitely restrict flow. Installed a new cartridge, flushed the lines briefly to clear debris, and reassembled the valve and handle. |

| 03-11-2026 — 03:45 PM | test_treatment_application | Turned the water back on and ran the shower again. Pressure at the head is now noticeably stronger, and the temperature rises properly within about a minute. Let the shower run for a few cycles of hot and cold to make sure the mixing valve is operating correctly and that there are no leaks behind the trim. |

| 03-12-2026 — 09:10 AM | prepare_report | Checked back with the homeowner after they used the shower this morning. They confirmed the water is now getting hot again and the pressure feels normal. Prepared a brief report summarizing the issue, the diagnostic steps taken, and the replacement of the clogged mixing cartridge. Submitted the invoice for the service call and replacement part. |

| 03-18-2026 — 02:25 PM | customer_paid | Payment received from the homeowner for the completed repair. Issue appears fully resolved with no additional complaints reported since the work was completed. |

Response 5 represents the events that happened during the plumber’s customer visit. It’s a point by point story of the visit. Because we’ve generalized the events to what is specified for troubleshooting services, this story could be added to a Markov model in Time Molecules, strengthening the probabilities of the Markov model.

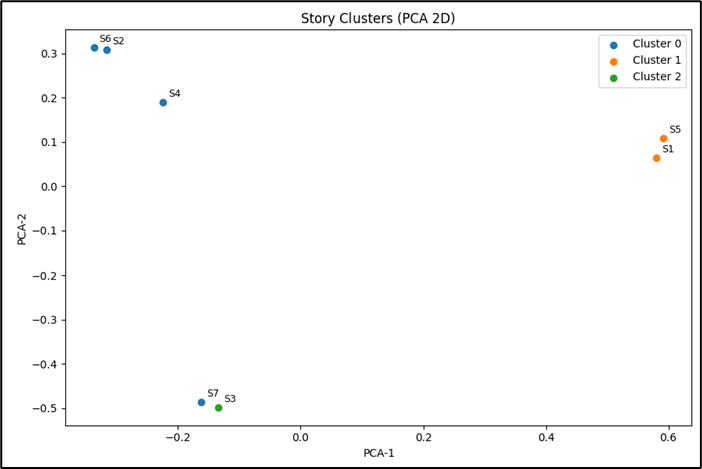

Generalizing Things

Once System 2 artifacts exist in textual form, they can be generalized, represented computationally, and compared across a large corpus of knowledge. Embedding models allow stories, plans, and procedures to be mapped into vector space where similar patterns cluster together. This enables an AGI system to detect analogies between artifacts and reuse prior reasoning structures when encountering new problems.

In many scenarios, there are sets of objects that can result in impossibly large numbers of unique sequences:

- ICD10 codes were supposed to be more detailed, but for analysis, it might help to aggregate.

- Websites may contain thousands of pages. These pages could be generalized in one or more dimensions. For example, by subject, product, purpose (story, instructions, etc.).

Procedure.

- For each object, have an LLM create a summary of about 100-150 words.

- Generate an embedding and store the embedding, object identifier, and the LLM-generated summary into a vector database.

- After the documents are added, find the top matches that have a similarity score of, say, 0.85 or higher. For each of those, find its top matches, and the ones that appear often probably belong to a group.

- For the ones belonging to the group, construct a prompt including all the summaries and have an LLM create a summary of those summaries. That’s the name of the generalization.

Please see cluster_stories.py for the code and example_stories.txt (a set of stories to cluster) for the full stories.

Figure 3 shows the clustering of story summaries generated by GPT:

Table 2 lists the cluster each story was assigned to and the distance from the center of the cluster. For example, out of the two stories assigned to Cluster 1 (Story 1, 5), Story 5 is closer to the centroid of Cluster 1, meaning Story 5 is more typical of Cluster 1 than Story 1.

| Story | Cluster | Distance from Centroid |

|---|---|---|

| Story 1 | 1 | 0.1893 |

| Story 2 | 0 | 0.5978 |

| Story 3 | 2 | 0.0000 |

| Story 4 | 0 | 0.5978 |

| Story 5 | 1 | 0.1893 |

| Story 6 | 0 | 0.6542 |

| Story 7 | 0 | 0.7117 |

These clusters we’ve created enable us to aggregate a number of stories into a smaller number of categories. In this case, seven stories are aggregated into three categories. The great value of this is that if we wanted to study sequences of events, we can aggregate what could be a very large number of sequences into fewer.

Match a New Story

With a stable set of clusters—trained on a large number of stories (which is not the case for Table 2)—we can attempt to match a new story to one of those clusters. Prompt 6 is a new story we will match to a cluster. We’ll submit it to ChatGPT:

Please create a one-sentence summary of this story: My grandfather knew much about botany. He was an expert at grafting plants, especially azaleas, fruit trees. But not just that, propagation in general, orchids, Easter lilies. I used to hang out in this huge hothouse in the yard, where he kept his experiments.

Prompt 6 – A new encountered story.

Summary: An expert’s extensive knowledge and experimentation in a specialized field created a rich learning environment for the narrator.

Step 9 of cluster_stories.py matches the story in Prompt 6 to one of our clusters. Cluster 0 is the best score (the smallest distance from the cluster centroid:

- Cluster 0 distance = 0.7790

- Cluster 1 distance = 1.0434

- Cluster 2 distance = 1.0177

Markov Model as a Case Tracker and Predictor of the Next Event

In a world where we must iteratively obtain information, which may take some time, we shouldn’t just sit idly and wait, but rather context switch to something else. When our information finally arrives, we need to bookmark where we’re at with the task we switched to and switch back picking up where we left off.

When a Markov model is deployed to System 1, it acts as a tracker (it tracks where we’re at) and timer.

Time Molecules vs. Web of Functions for System 1

Time Molecules and a Web of Functions (WoF) are candidates for the primary role of System 1. Both are made up of ML models that compute quick and invariant (same output to the same input) predictions. Specifically, time molecules are Markov Models and the WoF are made up of any type of ML model. The Markov Models are linked by common events and the functions of the WoF are linked because the output of models is the input (or at least part of the input) to other models.

Both are System 1. However, and this is just hypothetical, if I were to map them to parts of our human brain, I would map Time Molecules to the neocortex and Reps (software I wrote that implements Non Deterministic Finite Automata) to the basal ganglia and amygdala, where System 1 is mostly thought to reside. My feeling is that reptile intelligence is more about recognizing states than about predicting what comes next. After all, Reps is composed of NFAs, which as Finite State Machines. Even if it seems reptiles do time their actions, I think it’s more a matter of recognizing what the right time to act looks like.

My hypothesis is that for a reptile, what looks like intelligence is just recognizing a state (fuzzy recognition) and executing an action associated with that state. It’s like waiting for the clock to hit 5:00 PM and heading out the door. That’s very IF-THEN. The key for what appears to be intelligence is that there are a great number of states that can be recognized with an associated action.

I often think of the Boolean IF-THEN as more “reptile level”, and probability of Bayesian P(A|B) as more human level.

However, for people (and smarter mammals), I did write that Stories are the Transactional Units of Human-Level Intelligence. Markov Models are an average of the event transitions of a set of stories. Humans have a better intuition for time because thinking is about manipulating the future, not just responding to it. But recognizing and timing are not mutually exclusive to reptiles and humans, respectively. We also recognize states and an automatic action, and that works well for the vast majority of our behavior. But we can also decouple recognition and action, where our response considers more data and conjures up another response.

Instead of recognizing states (snapshots) we recognize stories that happen in 4D spacetime. Could it be that the AI analogue of the minicolumns of our neocortex are Markov models—the aggregation of stories? A structure that is both recognizable but is more than a collection of qualities—a recognition of sequence, not just a fuzzy snapshot of an instant of time, but that fuzzy snapshot as just the first event in a learned model of probable next events.

Plans and Prolog

Markov models reflect the steps of the cycles of a process—whether the process is a visit to the doctor, the onboarding of a new hire, or the creation of a watercolor. That tells us what the process does, but how was it built? In other words, how did we build a doctor, hiring process, and watercolor artist? We first had to design a plan.

The thing about plans is there are some answers we don’t have up front. We like to “get our ducks in a row”, but some things need some time, for example, required permits that will take a long time but we don’t need it yet to continue. We may need to plan contingencies for many factors not blocking progress yet. The things we know we don’t know.

That brings me to Prolog. Not because it’s shiny or trendy (it’s literally from the 1970s), but because it turns out to be a strangely good fit for this slow, remixing loop we actually run.

With Prolog, we can encode a high number of complicated definitions, computing procedural decision points, variable factors, alternatives.

Please see Prolog is On Deck to Bat for my take on Prolog’s role in this blog.

LLMs are unmatched at noticing patterns across huge swaths of data. They surface analogies at a scale and speed no human could touch. But they’re probabilistic—they can drift, confabulate, or chase red herrings when the remix gets too loose. What they really shine at is the “this kinda looks like that” step.

Stories, though—these compact bundles of situation + actions + trade-offs + outcomes—are the things worth capturing and plowing back. We already know how to lift them into RDF/Turtle for sharing, querying, provenance, all the enterprise-friendly stuff. That gives us the structured, inspectable, and linkable soil.

But to make the cycle actually turn—to take a noticed pattern, apply analogical tweaks, explore “what if” branches, and spit out new facts or rules that can be plowed back in—we need something that executes sequences cleanly, chains causally without hallucinating, and lets us branch on possibilities in a controlled way.

Prolog does exactly that. You describe the pattern once—declaratively—and the engine searches for satisfying paths. Lists and recursion handle ordered sequences (a recipe, a causal chain, a story progression) without any fuss. Unification lets things match in flexible ways; backtracking explores alternatives almost like those “happy accidents” of analogy. When it finds good paths (or a handful of plausible ones), you write the results out as plain Prolog facts to a file. A separate program loads that file later, treats the new facts as input, and the loop keeps spinning.

No shared process, no brittle coupling—just files you can open, read, tweak, version, audit. It’s plowing back, digitized.

Code 4 is an example of converting a text recipe into Prolog. Please see, manhattan_cocktail.md, a text recipe for a Manhattan cocktail. It’s a recipe someone made up decades ago that is certainly influenced by the spirit knowledge of the inventor—a remix of drinks made from older spirit + vermouth + bitters, refined over time for balance and ritual (trained towards popularity fit).

We notice the pattern, lift the story, encode it. This recipe isn’t difficult to comprehend, but it doesn’t need to be this specific. We know it works because it has endured at bars for decades. So we could experiment with variations as subtle as the kind of whiskey or vermouth, the proportions, and selection of flavorings. It can serve as an analogy, a proven starting point, for building many other drinks.

manhattan_type(classic).manhattan_type(dry).manhattan_type(perfect).vermouth_for(classic, sweet_vermouth).vermouth_for(dry, dry_vermouth).vermouth_for(perfect, half_sweet_half_dry).base_for_manhattan(rye_whiskey).bitters_for_manhattan(angostura_bitters).garnish_for_manhattan(maraschino_cherry).recipe_steps(Type, Steps) :- base_for_manhattan(Base), vermouth_for(Type, Vermouth), bitters_for_manhattan(Bitters), garnish_for_manhattan(Garnish), Steps = [ add(2, oz, Base), add(1, oz, Vermouth), add('2-3', dashes, Bitters), fill_with(ice), stir('20-30_seconds'), strain(into_glass), garnish(Garnish) ].

Code 4 – Recipe for a Manhattan converted into Prolog.

See manhattan_cocktail_instructions.md for an exercise utilizing Code 4.

Project Management and Plans

Not much shouts “planning” louder than project management. The first half of any PM cycle is pure planning—defining scope, sequencing tasks, identifying dependencies, spotting decision points, and estimating resources/timelines. The second half is execution, but real-world obstacles almost always trigger sub-plans, replanning, or branching paths.

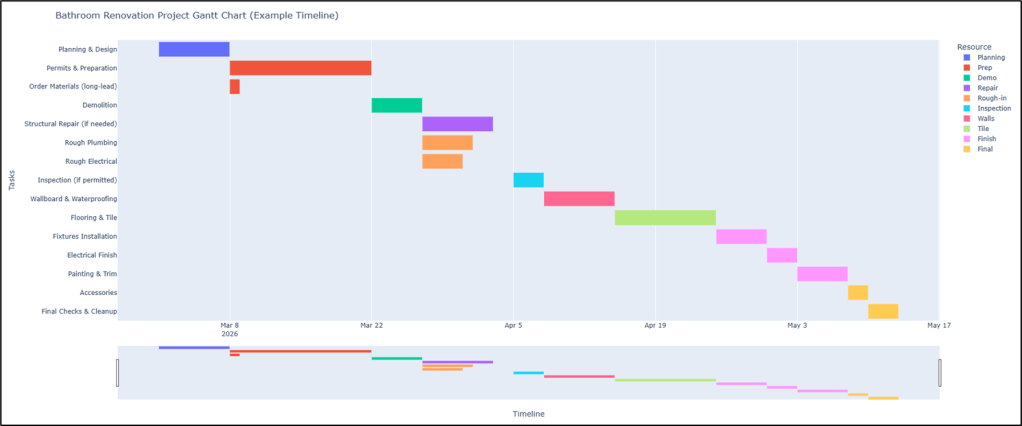

A classic visualization tool is the Gantt chart—horizontal bars show task durations against time, with dependencies often indicated by arrows or links. Each bar essentially encodes a mini “story” or transition—from State A (task start) to State B (task complete)—while arrows capture the causal flow that System 2 reasons through when hardening a plan.

The bathroom renovation example in the GitHub repository is an example of a System 2 artifact. It is a hierarchical plan with phases, explicit dependencies, conditional branches (decision and requires), and simulation rules (can_start, next_tasks).

Figure 4 is an example of a Gantt chart for this plan. Gantt charts aren’t just pretty pictures—they externalize the analogy-hardened reasoning that lets us coordinate complex, multi-step transitions from current undesired state to desired outcome.

Dependencies become arrows, highlighting how one completed state enables the next. This is exactly what System 2 produces, an encoded generator of traces (sequences of states) that can be followed operationally (System 1) or replanned when surprises arise (ex. mold discovery during demo).

The text version of the bathroom renovation contains information extractable by an LLM. However, raw text may contain ambiguities (not everyone is a perfect writer) that might be misinterpreted and will need to be worked out. To make the information in the text explicit, we can transform it into a Prolog encoding equivalent. It’s also executable—query for next possible tasks given completed ones and resolved decisions—turning analogy-derived reasoning into crisp, explorable structure.