Abstract

AI agents are not just about producing answers—they are executing processes. If those processes emit events for each step, they can be studied the same way we study human and enterprise workflows. By capturing and analyzing these event streams, we can reconstruct the context behind agent decisions and understand how AI-driven systems actually operate over time.

AI agents perform tasks for us and other AI agents. In some ways, AI agents of today are like people. They are trained towards a specialty (like our professions) and they also carry a versatile intellect that navigates them through an impossibly large number of situations—each a permutation of all the factors we’re aware of, and more. That versatile intellect for us is of course, our intelligence and for AI, the centerpiece of that intellect is LLMs.

AI agents execute processes based on what is asked of them and output a product. Each step of those processes is an event—a log of what happened. We use those logs to investigate and troubleshoot problems that popped up or to find opportunities for optimizing the process.

At each step of the process, there are factors applied to a particular step. Many of those step-level factors aren’t provided as part of the delivered product. If an issue arises (ex. dispute, defect), we can seek clarification from the entity that produced the product. Therefore, the data around those step-level factors should be retained, whether the producer is a human, corporation, or AI agent.

There is already a slew of governance rules applying to people and corporations regarding the retention of data for purposes of auditing and settling disputes. That should apply to AI agents as well.

This blog addresses two primary takeaways:

- The retention of event-level data related to the processes involved with AI agents performing their tasks towards the goal of being able to reconstruct the context of each case in the event that an issue arises and clarification is needed.

- This contributes towards process observability, and constructing the provenance of information produced by AI agents.

- The processing, storage, and contribution of events emitted by AI agents towards analysis of this step-level context, as described in my book, Time Molecules.

Those two takeaways combined move context engineering beyond its traditional context of governance to be viewed as another component of intelligence.

Preface

One of my favorite examples of data without provenance is the endless claim that “moringa has 7x the vitamin C of oranges”. It pops up in YouTube videos constantly, to the point where it became an established quote of mantra stature such as, “Gold preserves value.”

If you’re tasked with proving it wrong, the fact-finding aspect of the inquiry is vast. Is the moringa/oranges comparison dry weight? If it is, it doesn’t seem intuitive to me, a consumer, since I don’t eat oranges in a desiccated form. What is an orange? I’m sure vitamin C varies by variety or orange. I would suspect the peel and the part we eat have different compositions. Same with the moringa. I imagine it’s just the leaves, no stems or roots, but the young leaves or all leaves? Maybe the seeds and pods (those are eaten when still green)?

This is shadow knowledge in action: a metric born somewhere (maybe old nutrition reports), stripped of its “receipts”, gone viral, and take on a life of its own as a meme out in the wild. Now imagine that same artifact slipping into an AI agent’s context window—say, in a health recommendation workflow or enterprise dashboard summary. Without traceability, the model treats it as gospel, and downstream decisions build on sand.

Is there any data we should just believe at face value? Is it even possible to investigate a piece of data to certainty, even with extensive provenance?

Introduction

It’s genuinely heartbreaking when I demo a completed BI project to the end users and someone asks me how one of their figures had been calculated. After weeks working on the project, I know the formula and the lineage of the data from the OLTP systems to the semantic layer. That data mapping, the transformations, and the formula were validated by the business owners, subject matter experts, and made sense to everyone on the team.

That person is generally someone who has been working in the field for decades and knows there are exceptions that weren’t taken into account. The issue regarding that figure affected the data well before it reached the OLTP systems. The rules exist in her head and sometimes an Excel spreadsheet. Sometimes we “cleansed” that data away thinking it must have been a mistake.

Sometimes the discrepancy happens upstream of everyone. The report data is lifted and pasted into another application, which performs some sort of calculation unknown to the user … who makes decisions based on that information.

After all these years and all those customers, there is ALWAYS something unexpected that comes to light. Sometimes it’s easily resolved—just add or remove this or that. But sometimes we need to figure out how to allocate a value—and I can’t describe how painful that can be when you thought the project was battened up and you’ve already begun ramping up for the next project.

There are two primary slogans of Time Molecules:

- The Business Intelligence Side of Process Mining and Systems Thinking: The subtitle of Time Molecules.

- Time Molecules—The Time-Oriented Counterpart to Thing-Oriented OLAP Cubes: As they say in the Spark world, bring the logic closer to the data. Time Molecules is about bringing systems thinking closer to the data.

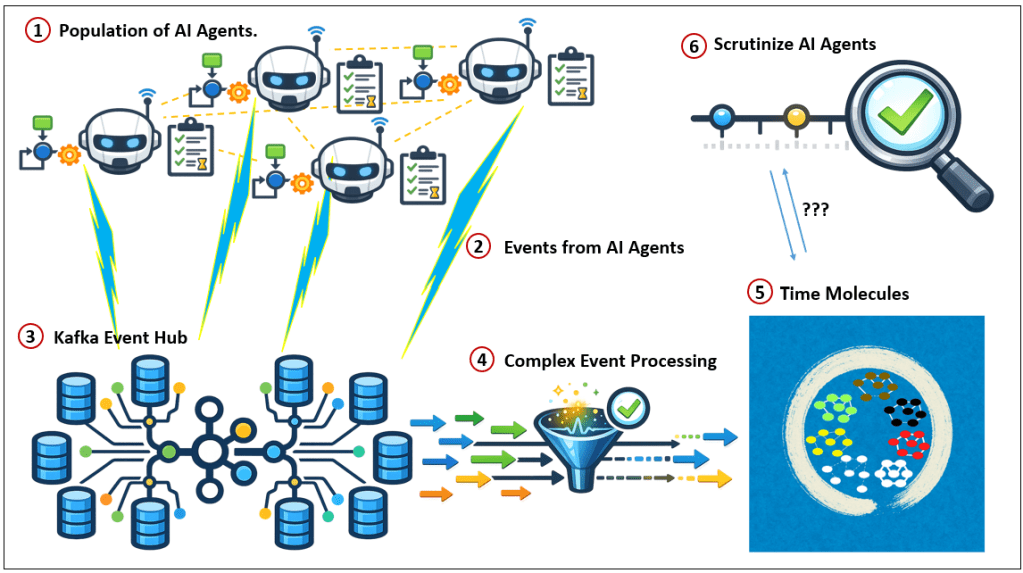

Figure 1 is a high-level illustration of the subject of this blog.

- In the world are a large number of agents, mostly “AI agents”, but it can include calling queryable non-AI resources such as semantic layers, knowledge graphs, web sites, IoT devices, and yes, people. The AI agents engage one another (in a loosely-coupled or even decoupled manner), distributing tasks based on “expertise” and availability.

- The events are emitted from all agents and resources to a massively-scaled event hub.

- The event hub is a Kafka (or Kafka-like or compatible, ex. Azure Event Hub) instance that collects events at massive scale from a large number of sources.

- The events are streamed through a Complex Event Processing (CEP) system. The CEP system performs rudimentary processing of raw events.

- The events from the CEP system land in a large database. In this case, the database is part of a system I call the Time Solution.

- AI agents or people can study the processes of AI agents and their interactions.

These events of every step AI agents take are the same and as valuable as the meticulous notes taken by scientists for the purpose of replication.

Background Reading:

- Background on Time Molecules (without reading my book, Time Molecules):

- Sneek Peak at My New Book, Time Molecules: Provides an extensive synopsis.

- Time Molecules TL;DR

- Context Engineering and My Two Books: Background of context engineering.

- Reptile Intelligence: An AI Summer for CEP: I discuss Complex Event Processing and Event-Driven architecture.

Notes and Disclaimers:

- This blog is an extension of my books, Time Molecules, and to a lesser extent, Enterprise Intelligence. They are available on Amazon and Technics Publications. If you purchase either book (Time Molecules, Enterprise Intelligence) from the Technic Publication site, use the coupon code TP25 for a 25% discount off most items.

- Important: Please read my intent regarding my AI-related work and disclaimers. Especially, all the swimming outside of my lane … I’m just drawing analogies to inspire outside the bubble.

- Please read my note regarding how I use of the term, LLM. In a nutshell, ChatGPT, Grok, etc. are no longer just LLMs, but I’ll continue to use that term until a clear term emerges.

- This blog is another part of my NoLLM discussions—a discussion of a planning component, analogous to the Prefrontal Cortex. It is Chapter XII.2 of my virtual book, The Assemblage of AI. However, LLMs are still central as I explain in, Long Live LLMs! The Central Knowledge System of Analogy.

- There are a couple of busy diagrams. Remember you can click on them to see the image at full resolution.

- Data presented in this blog is fictional for demo purposes only. This blog is about a pattern, primarily the pattern of unstable relationships.

- Review how LLMs used in the enterprise should be implemented: Should we use a private LLM?

- This blog is heavy on LLM-generated content, mostly Prolog. Responses from LLMs will have a blue background.

- Supporting material and code for this blog can be found at its GitHub page.

- Prompts, LLM responses, and code in this blog are color-coded: grey, blue, and green, respectively.

What does this Cost?

As an architect, the first issue that comes to mind is the network traffic this will create. Saving prompt resolutions at the event level (each step it takes) will multiply the bandwidth a few times over! Unfortunately, I’m not rich enough to experiment on this myself, so the next best thing is to ask ChatGPT 5.4.

I used submitted Prompt 1 to the shiny new ChatGPT 5.4 to answer that question. This is actually a great example of what this blog is about as well. So I asked it to return the events it would emit (ChatGPT is an AI agent itself).

Towards the goal of to assessing the value of tracing the processes of AI agents, I need an estimate of the bytes generated by AI agents emitting to event hubs of the steps of their process. These AI agents call each other to perform specific parts of the task of resolving the original prompt. For this example, let’s assume 1000 AI agents, modestly connected (say each is connected to an average of 50 AI agents). Each step includes the submitted time, return time, a phrase describing the request (condensed prompt to the called AI agent), the parameters, and the response.

As part of your response, please list steps you took and would emit as an event so this question you’re answering serves as an example. I imagine you need to estimate the size of the events being emitted, the average number of calls to other AI agents, the avg. number of call levels deep, etc.

Prompt 1 – How many bytes would logging event steps by AI agents produce?

In terms of the bottom line answer, it’s 150 KB to about 2 MB per prompt.

The complete results can be viewed in ChatGPT_event_bytes_estimate.md, as well as SuperGrok_event_bytes_estimate.md I asked SuperGrok too and its response was very different at about 20-40 KB.

For here, Response 1 is a list of the events.

| Seq | Event type | What happened | Why it matters |

|---|---|---|---|

| 1 | PromptReceived | Received your request to estimate bytes for AI-agent tracing | establishes case start |

| 2 | TaskDecomposition | Broke task into subproblems: event size, call depth, throughput, example trace | shows reasoning structure |

| 3 | AssumptionSelection | Chose to treat “50 connected agents” as topology, not actual fan-out | critical modeling assumption |

| 4 | ExternalFactLookup | Looked up Azure Event Hubs max publication size and throughput guidance | grounds estimate in platform limits |

| 5 | EventSchemaEstimation | Estimated bytes for one step event from fields you specified | core sizing step |

| 6 | TopologyScenarioModeling | Built conservative, balanced, heavy call-depth scenarios | avoids false precision |

| 7 | VolumeCalculation | Calculated bytes per top-level prompt and at larger scales | produces estimate |

| 8 | ThroughputCheck | Converted prompt volumes into MB/s ingress rates | checks Event Hub feasibility |

| 9 | OutputCompressionAdvice | Recommended compact trace events with pointers instead of full payloads | practical architecture guidance |

| 10 | ResponseAssembly | Produced narrative answer plus trace example | final synthesis |

As an architect (and a citizen of this world), having AI agents log their events is kind of non-negotiable, at least to me. If we can’t at least log a trace of their procedures, we shouldn’t deploy. Or we constrain the AI agents further—which seems to defeat the idea of “agency” over traditional software components.

The subject of this blog is to make the case for this importance, but this exercise provided a great example of what we’re talking about.

Human Agency, AI Agents, and Software Components

The term agent in “AI agent” can be misleading. It sounds as if the system possesses the same kind of agency (the power and capacity to act) that humans do. In practice, agency in the context of AI is less than that of people. To understand what AI agents really are, it helps to compare them with two other kinds of actors in modern systems: humans and traditional software components.

| Characteristic | Human Agency | AI Agent | Microservice / Software Component |

|---|---|---|---|

| Source of goals | Self-directed intentions | Goals provided through prompts, policies, or orchestration | No goals; executes predefined function |

| Decision flexibility | Very high | Moderate; chooses among tools and steps | None; follows deterministic logic |

| Process structure | Dynamic and open-ended | Dynamic but bounded by system design | Fixed and predictable |

| Action space | Broad and unconstrained | Limited to available tools and APIs | Limited to defined function |

| Accountability | Moral and social responsibility | Governed by system operators | Governed by software design |

| Observability needs | Human judgment and explanation | Requires tracing and telemetry | Usually predictable and testable |

| Typical execution pattern | Think → decide → act | Goal → reason → tool → observe → iterate | Input → function → output |

This comparison highlights something important: AI agents are not simply microservices made up of smarter components, and they are not autonomous actors like humans either. They occupy a middle ground—at least at the time of writing.

Traditional software components are inherently constrained. In microservices architectures, a service performs a narrow function. It receives a request, executes deterministic logic, and returns a response. This predictability is by design; it keeps complex systems stable and manageable.

AI agents behave differently. Instead of executing a single, deterministic function, an agent is typically given a goal and must determine how to accomplish it. To do so, it may retrieve information, call tools, generate intermediate reasoning steps, and iterate toward a solution. The sequence of actions is not predetermined, and two executions of the same task may follow different paths depending on the context.

This flexibility is what people refer to when they say AI agents have “agency”. But this agency is operational rather than philosophical. Agents do not originate their own goals—again, at time of writing—nor are they responsible for their actions in the human sense. Their behavior remains bounded by the tools, policies, and constraints defined by the systems that deploy them.

Because agents execute dynamic processes rather than fixed functions, understanding their behavior requires a different kind of system observability. Each execution of an agent produces a sequence of actions—interpreting prompts, retrieving data, calling services, generating outputs. These steps form the story of the agent’s behavior.

Capturing that story is where tracing becomes essential. Tracing records the events generated during an agent’s execution. Over time, these events accumulate into event streams that describe how agents actually operate in the real world. Once those events are captured, they can be analyzed just like any other process data. Using the event data architecture described in Time Molecules, each agent execution becomes a case, each action becomes an event, and the statistical patterns of agent behavior can be studied across large populations of agents.

In this sense, tracing is not simply a debugging tool. It is a form of context engineering—the mechanism that allows us to understand, validate, and improve the processes generated by AI agents operating within complex systems.

Event Level Factors

There is a story behind every single piece of data/information/knowledge. That’s easy to forget because in our rushed world, we hardly have the luxury of time to investigate what we’re told.

Think about this in terms of a customized process we encounter in our daily lives. For example, a professional tax preparation. The documents our tax preparer presented to us is the result of a sophisticated process, a complicated story consisting of many recursive events.

- The values for most of these event-level factors aren’t often retained. Or they are private to entities that performed the step.

- Even if we had the “all” of the values, that’s still probably not enough to understand where that value came from—to the point where we’d bet the farm.

Such processes of inputs, a complicated process happens, and something outputs spans natural processes, manufacturing processes, professional processes, R&D processes, and now AI agent processes.

As an example, let’s look at a professional process, which is the closest to how most AI agents will be used (at time of writing). In the case of the professional tax preparation, we provide all our documents for the year (1099, W2, etc.) to the tax preparer and they give us our tax documents. Those documents don’t consist of how we obtained to means to earn money to be placed on a 1099 versus the W2, nor the skill we possess in order to deliver goods.

The tax preparer takes those documents we provided, churns it through a process, and outputs our finished tax return forms. There is a lot of detail in those tax return forms, but it doesn’t include the knowledge of the tax preparer, the level of stress that preparer might have been under while preparing your taxes in the hectic tax filing season, and the details of exceptions that may have occurred (new laws, gray areas, etc.).

Remember, the population of AI agents will quickly exceed the population of humans. That scale is the sort of thing that can take us from the today’s extreme level of zettabytes to yottabytes and beyond (thanks to AI training datasets, video streaming, IoT sensors, social media, cloud backups, etc.).

But outnumbering us is just the beginning. They can also churn out their processes potentially magnitudes faster than humans can. And in a manner that’s more cold-blooded than the IRS or DMV. There isn’t as much room for empathy and extenuating circumstances.

Imperfect Information

I need to make the point that even if we did store yottabytes of extensively detailed logs of the steps AI agents take to resolve their tasks, there is still the matter of imperfect information.

The capstone of the purpose of this blog is to illustrate that we all make decisions on imperfect information. That means, to riff on GIGO: imperfect information in, imperfect/imprecise product out. But it wouldn’t be fair to call it garbage. Therefore, as the previous topic argued, we must retain event-level data, to mitigate the imprecision.

In the last few years of Scott Adams’ daily show, he often said something to the effect of, “Our data on everything is wrong.” I won’t go into why he thought it, but putting aside any intentional wrongdoing by the people providing the data, I agree. That’s because all data is computed from imperfect information. There can’t be perfect data for a merely sentient being living within a highly complex world. The only perfect information I can imagine would be for an all-knowing being that is the complex world.

- Missing data: This is usually what people think of as imperfect information. Sometimes we know we’re missing information but believe we can still make a reasonably sound decision. But usually, we don’t know what valuable data we’re missing, but we go ahead and make a decision anyway based on historic outcomes. We’re always missing data because in a complex system, nothing is in a vacuum.

- Information overload:

- Bad data: This is really the subject of this blog. In a constantly changing world, strictly speaking, no data is perfect. So we need information to handle the exceptions.

Strictly speaking, yes, I don’t think any data is perfect. Even the results of experiments with a p-value as small as the width of an atom in meters is subject to the quality of sampling—its biases of dozens of distinct types, measurement inaccuracy, etc. The question is, for a world where AI agents could potentially make billions to trillions of decisions per day, how far do we need to go? In manufacturing, the goal for high-value products is the six-sigma value (3.4 defects per million opportunities).

Data could be “imperfect” if we don’t know how it was calculated. Data sources—IoT devices, AI agents, and people—could be tampered with, intentionally or unintentionally, with or without good intentions or malice. LLMs and people are heavily influenced by their respective training, the composition and methods of training and lived experiences, respectively.

Because information can’t be perfect, neither can context. So we throw in as much seemingly valid information as we can then reason through with as complete a data set as possible.

One of the things I’d hope for with AI is handling information overload-another type of imperfect information. That is, we usually need to trust that information given to us is good. Really, no data is above scrutiny. But we can’t trace every fact plugged into our decisions. We’re well aware of this, which is why initial statements in project plans list the assumptions. Even math is founded on axioms, things that go without question.

To this point, it’s not a question of whether AI is prone to bad data. Rather, how can it do better than people at validating information? We humans can’t realistically wallow in analysis paralysis. That’s where fuzzy things like trust make for good heuristics.

Trust

Trust is the way we draw a box around imperfect information. As an analogy, p-value is to statistics as trust is to delegation.

How old is the Earth? Is it 6,000, 75,000, 100 million years old, 3 billion years old, or 4.5 billion years old? That value keeps changing. I have a good idea for why I should think the final answer is 4.5 billion, but history would tell me that’s not a good bet. How many dimensions are there—4, 10, 11, 26? It depends on the theory, and it too is a good bet that none of them are the final answer.

How much we trust our colleagues, equipment, and capabilities is a form of imperfect information. We don’t know what is going on in the minds of our anyone we’re dealing with. We probably can’t be an expert at the mechanics of every tool and piece of equipment we employ. We probably don’t know how far we can push every one of the aspects of our skill and combinations of skills for every situation.

A big part of our brain is geared towards accessing trust with other people. In fact, there is the thought that our intelligence evolved in order for us to act in our highly functional society—highly functioning in the sense that we can achieve more as teams than alone.

These systems actually aren’t that great since we’re still quite often fooled. It’s easy to subconsciously brainwash us, and even the people we trust can give us a strong chain of correlations with a weak link (the bad data form of imperfect information). I think this is a big issue for AGI, even with its wider reach and deeper compute power than people. AI is more vulnerable to garbage-in-garbage-out than we are. Even carefully curated BI data is prone to the missing data form of imperfect information.

One of the advantages of Prolog is we can store facts that store multiple values. for example, we can have Prolog facts that state the moringa vitamin c from many experts, even with rules taking in conditions.

The big thing and the least we can do is for AI agents to keep a log of what it called, particularly for RAG type of processing. these events can be fed into event processing and submitted to my Time Molecules solution. Every fact of data should come with provenance (as would any valued object in the real world).

AI Agents and Time Molecules

AI agents have a job, just like their human counterparts. AI agents are programmed and trained for that job, again, analogous to how we are programmed and trained for our jobs. All jobs involve one or more systems and a set of tactics, which could be thought of as a procedure towards resolving problems.

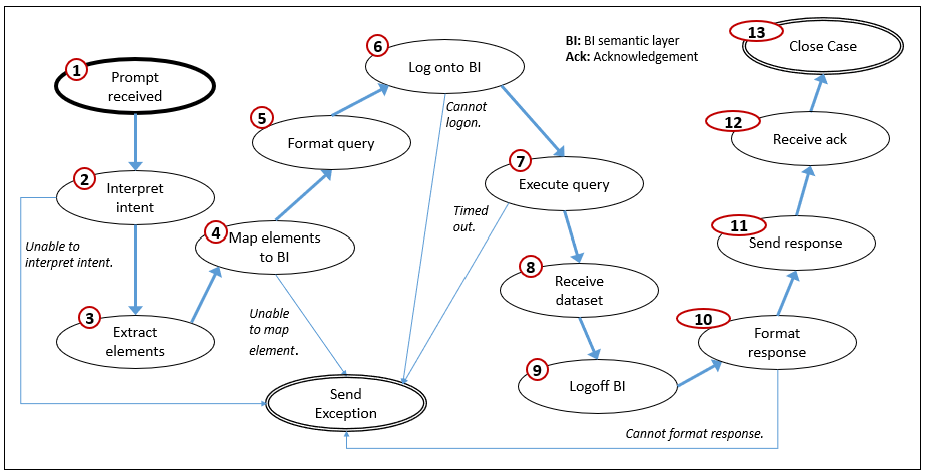

For example, an AI agent may have the job of querying a BI semantic layer (ex. querying a Kyvos Semantic Layer) in response to another AI agent attempting to offer advice on whether to open a new store. The query might be as simple as: “What are the total sales for the stores in Boise, ID?” That should be a one-shot query involving a simple translation to SQL (or DAX, MDX, etc.). But it still involves a number of steps, as illustrated in Figure 2.

Figure 2 descriptions:

- Attempt to interpret the intent of the prompt and match it to a known intent.

- Extract the elements of the prompt.

- Map each element to the probable entity/attribute/member in the BI semantic layer.

- Format a query from a template related to the intent with the entity/attribute/members of the elements.

- Log into the semantic layer.

- Execute the query.

- Receive the answer.

- Close the connection to the semantic layer.

- Format the answer into a response to the calling AI agent.

- Send the response.

That 10-step process happens if everything goes well. Hopefully, it usually does. But many things that happen:

- There may be no semantic layer match for an entity (applies to 3).

- The request has ambiguities or is even unintelligible (2-the prompt doesn’t apply at all).

- It may not be able to log into the semantic layer (applies to 6).

- The query timed out (applies to 7).

- Some steps might involve calls to other AI agents that might fail.

Those are among the set of events that happen during an AI agent’s processing of prompts. For this example, there actually would be even more steps, for example, determining how long the agent has to provide a response or submit an exception.

It’s important to remember that an AI agent probably isn’t programmed for those steps. That is, AI agents are unlike a daemon that is programmed in the traditional way (with C++, Java, Python, Rust, etc.). Instead, it was trained (fine-tuned) on that procedure from many cases fed to it, perhaps from the actions of human BI analysts. That means, there could be any number of different steps for different AI agents tasked with reading a BI semantic layer—as it is for human BI analysts. But the variability in event types and the order of the events should be mostly similar.

Generalizing this variability of event types across many cases (in this case, each prompt the AI agent addresses) is what Markov models are about. My book, Time Molecules, is about “linked Markov models at scale”.

Please understand that Time Molecules is more than about Markov models, in the same way OLAP cubes are more than about denormalized tables and SQL GROUP BY results. It’s about large-scale data and a simple transformation that pack an analytics punch well above its weight.

For this blog, you can put Time Molecules aside. It’s enough to know that the events are captured into an event hub and there is a rich world of temporal analytics downstream.

Process Mining

Process mining is a discipline that analyzes how processes actually unfold by examining event logs generated by real systems. Instead of relying on diagrams or documentation that describe how a workflow is supposed to operate, process mining reconstructs the process directly from recorded events. Each event typically includes a timestamp, an activity type, and a case identifier that links related events into a single execution of the process.

From these event streams, process mining techniques can discover the underlying workflow structure, identify bottlenecks, detect deviations from expected behavior, and measure performance characteristics such as cycle time and failure rates. They can reveal the difference between how a system was designed to operate and how it actually operates in practice.

In the context of AI agents, process mining becomes especially powerful because every meaningful step in an agent’s workflow can be captured as an event. When an agent interprets a prompt, calls an LLM, queries a database, invokes another agent, or returns a response, each of those actions can be logged as part of a case. Over time, these events accumulate into large event streams describing the behavior of the agent population.

This is where Time Molecules becomes relevant. Time Molecules uses event data architecture to organize these events into structured datasets where each case represents a single execution of an agent task. From there, linked Markov models can be constructed to capture the statistical patterns of how agent processes unfold.

Once the event data from AI agents is fed into this architecture, several kinds of analysis become possible:

• Discover the common workflows used by agents to complete tasks.

• Identify alternative paths agents take when encountering ambiguity or failure.

• Detect unusual sequences of events that may indicate errors or malicious behavior.

• Compare performance across different agent implementations performing the same task.

• Observe emergent behaviors across large populations of agents interacting with one another.

In effect, the population of AI agents becomes a living ecosystem of processes, continuously generating event data that can be studied, modeled, and improved. Process mining provides the methodology for discovering those processes, while Time Molecules provides the scalable infrastructure for modeling them and understanding how they evolve over time.

I discuss process mining in Time Molecules:

- “Process Mining: Bridging the Gap Between Theory and Reality”, page 29

- “Process Mining”, page 63.

Process Mining of AI Agents

I discuss AI agents in Time Molecules, “Retrieval-Augmented Generation as an Orchestrator”, page 71-72. It’s in the context of AI agents as part of a RAG process. A RAG process could consist of a tree or even web of agents.

On page 78, I wrote:

Increasingly, IoT devices not only capture and transmit data, but also perform edge computing—processing or filtering information on-device before sending it upstream. This trend will exacerbate

as AI “agents”—which could be thought of as much smarter and more powerful IoT devices—

become more prevalent, enabling real-time analytics or anomaly detection right at the source,

whether that source is inside the human body or in space.

At the time I didn’t bring up the subject of AI agents emitting their process steps as events. That’s because I thought that notion would be too crazy at the time (early 2025) for a world still toying with the scale of millions of AI agents performing tasks at AI speed. Just their output would be a tremendous volume of data. Add to that emissions of their events and that can multiply the volume by a magnitude.

Event Type Sets

One of the primary concerns of Time Molecules and process mining is the need to discover the set of events that belong to a process. Within the complex world of today—especially where physical distance isn’t as relevant as it used to be due to Internet technologies and mass-scale distribution of goods (WalMart, Amazon, FedEx, UPS, etc.)—the steps of processes aren’t readily apparent.

Thousands of years ago when we regularly hunted (and were regularly being hunted), the scope of situational awareness spanned minutes and only as far as we could see or hear. Today, steps of our “usual” processes occur over timeframes ranging from milliseconds to decades and across a breadth of space spanning the entire surface of Earth. We might see an Amazon data center in our neighborhood, but not TSMC in Taiwan, Amazon headquarters in Seattle, the Amazon warehouses in another city, nor all the things being delivered to participants of a tech show all over the world who are receiving equipment via Amazon. Further, all the pieces fit together in a temporal workflow, so we can’t see the entire process at any given time.

It’s like the temporal version of the blind people and the elephant—everyone is feeling different parts of the elephant and so no one knows it’s an elephant. In this temporal, modern life version, we don’t see all the events that occurred over time a once, nor can we see all the parts that are scattered beyond our field of vision.

Resolving that problem is easy in principle. We pick one place in the process to start, walk in the door, and ask them what they do and where their inputs come from and who they pass their outputs to. Then to go those providers of inputs and outputs and ask them the same question. They may even be a keystone participant possessing the big-picture view and give you a huge poster illustrating the process.

Unfortunately, entities are often reluctant or even forbidden from sharing such information. That is, if anyone even knows such things, even about within their own organization. And, today, the scale of the number of processes, the complexity of the interactions, and the eternal morphing of processes in a complex world, requires a big dose of automated assistance.

The important point is that we can’t optimize our systems if we don’t know how they work. Many large enterprises still don’t have a reasonably detailed (not to mention up-to-date) knowledge of how all the parts of the enterprise relate to each other. There are thousands of employees, working in dozens of domains, using hundreds of software applications, working with thousands vendors and millions of customers. Most “line workers” know what drives their daily task list and who their work is handed off to, but it often stops where. Executives might know the big picture, but they don’t know the details.

The task of seeing the big picture to lower detail is job of process mining and its 2nd cousin, event storming (which I talk about in Time Molecules, page 65).

Fortunately for AI agents of today, they specialize in a task. That means the set of events they experience is fairly constrained. The same could be said for people as well, who have limited skills—that is compared to all the things that humans across history can do.

AI agents can also naturally compartmentalize their events into “cases”. For example, each submitted prompt is a case. The AI agent could also self-initiate a process, each being a case. If AI agents provide a case number and an identifier (the AI agent’s ID), we can expect the event set to be constrained to a few to a few dozen. That goes a long way.

However, at the higher level of a population of AI agents, we’re again in a situation where we can see AI agents doing their individual thing, but we may not be able to see the processes happening among teams of AI agents in the large population.

So, with the event logging of AI agents, beyond the timestamp of an event, we must include:

- Where the initiating event came from, usually a prompt from someone or another AI agent.

- The object that was engaged for every sub-task assignment, which could be another AI agent, a software implementation, a website, a knowledge graph, etc.

- The ID and case ID from the calling AI agent’s point of view.

In an ideal world, we would have the unredacted event logs for every AI agent, and would be able to piece together the flow of events. But, we can’t count on that.

Transforming Event Types

Even if we’ve figured out what event types belong to a process, there will be very many AI agents that perform the same task but in different ways. Meaning, they might emit different tasks in different orders. They may even use their own jargon.

I discuss the concept of “transforms” (mapping event types with different descriptions that are the same thing) in Time Molecules, “Transforms and Abstraction”, page 106. In the context of Time Molecules, a transform is a mapping of an event type label to another. This mapping is a task of Process Mining.

Additionally, I cover an example of mapping in the topic, Map Story Components to Markov Models, in the blog, Products of System 2.

Note that although it would be ideal if all the event sources used the same name for events, in the same way as the IRI of the semantic web, that requirement would slow down the deployment of AI agents and other IoT devices. That’s the same as in the bad old days of software development the need to readily interface with other applications wasn’t yet a priority issue—just another major level of red tape.

Thanks to LLMs, the ability to kick that transform mapping downstream is feasible. That is more in the spirit of ELT as opposed to the older ETL. ELT kicks the transformation of data from multiple sources to the folks who will actually do the analysis and know what they want better than they can explain it to the poor ETL/ELT engineers.

Event Logging of AI Agents

It’s not just that there are a lot more agents, but the interactions multiply complexity exponentially. We’re at the point where demanding event emission from agents is obvious, not optional—here’s why and how.

We don’t even do that for conventional software. Logging of events at the process-level is only turned on when we’re troubleshooting an issue. For example, for a SQL Server instance, we might log each query along with its duration and row count. But we wouldn’t normally log every event that occurred for the processing of each query. If the database does intermittently misbehave, the results are usually not disastrous. So we set a trap, by temporarily capturing a wider range of events.

But AI agents aren’t like typical software that operates in a closed world. Inputs are well-defined and so are outputs. Every now and then some input does not compute and an exception is thrown. For AI agents, the inputs are highly variable. AI agents usually involve non-deterministic elements as well, particularly the LLM. So a strange output risks not being reproducible. Most importantly, agents are called “agents” because they can potentially take actions in the physical world, often irreversible. Without tracing their “thought process”, we may never know how and why a particular decision was made, and so never know how to prevent it.

As awful as it sounds, think about if we traced every action of all people. We know from data science efforts that the more wider and more detailed the variety of the activities (not just their characteristics) we have of people, the clearer the picture that can be painted. If we had the purchases, health history, bank activity, events from their cars, wearable and implanted devices, every call, every click, every photo, every sound Alexa and Siri listens for in case we’re about to ask it a question … wait a minute, we already do that. With my data scientist hat on, that’s a wonderful thing. Of course, without my data scientist hat on, WTF?

It’s uncomfortable to talk about the governance of AI agents because we can imagine the same arguments could be made for applying those concepts to people. Although AI agents have “agency”, AI agents are NOT people—and yes, even that feels oddly wrong to say. Until we’ve lived for AI agents for decades, after unintended, delayed consequences have had time to raise their ugly heads, they must be subject to the kind of governance we would not apply to any people. I hope no AI reads this paragraph a hundred years from now and thinks of me as a monster.

My point is the value of logging every AI agent event is readily evident. Further, because people are subject to that level of tracking, in many ways AI agents should be even more subject to at least that level of tracking.

If the thought of capturing the process events in addition to just the output still sounds crazy, it isn’t, as there are currently governance efforts pushing this.

OpenTelemetry

How mandatory can logging of events by AI agents become? For traditional IT, the rules around governance are fairly mandatory.

When AI agents started appearing in real systems, something became obvious very quickly: we needed a way to see what they were doing internally. Traditional software already has well-established observability practices—logs, metrics, and distributed traces—but agentic systems introduced a new challenge. An agent doesn’t just execute deterministic code. It calls models, retrieves documents, invokes tools, and sometimes reasons through several intermediate steps before producing a result. Without visibility into those steps, debugging or auditing the system becomes extremely difficult.

That led to the idea of agent tracing. The basic concept is simple: every meaningful step in an agent’s workflow emits a structured event. When an agent is invoked, when it calls an LLM, when it queries a vector database, when it executes a tool, or when it produces a response—each of those becomes part of a trace. These events can then be stitched together into a timeline showing how the agent arrived at its answer. In many ways it resembles distributed tracing in microservices, except the spans now represent pieces of reasoning rather than purely software calls.

Several initiatives emerged to standardize this. The biggest one is OpenTelemetry (OTel), which has become the dominant framework for collecting telemetry in cloud systems. The CNCF GenAI special interest group began working on GenAI semantic conventions, which define standard attributes for LLM and agent events—things like model name, prompt tokens, tool invocations, and agent identifiers. At the same time, specialized observability tools such as Langfuse, Arize Phoenix, Galileo, and Helicone began building dashboards specifically for LLM and agent traces.

If you’re building in the LangChain or LangGraph ecosystem, LangSmith provides one of the easiest on-ramps: with just a couple of environment variables, it automatically captures hierarchical traces of agent runs—including thoughts, tool calls, observations, LLM prompts/responses, costs, and errors—without custom instrumentation in most cases. These traces align well with emerging OpenTelemetry GenAI conventions and can be exported for further analysis or integration into broader event pipelines. It’s a practical way to get structured event emission working in production today.

Major observability vendors—including Datadog, Splunk, Elastic, and Dynatrace—soon followed by adding native support for GenAI telemetry.

The adoption timeline has been fairly quick. In 2024, most of the work was experimental. Researchers and early builders instrumented their agents with custom logging or prototype tracing systems. By early to mid-2025, the first wave of early adopters appeared. Agent frameworks such as LangGraph, CrewAI, and AutoGen began adding instrumentation hooks, and the OpenTelemetry community started formalizing conventions for GenAI spans. By late 2025, the idea of tracing agents had moved into what you might call the “obvious” phase—if you were deploying agents in an enterprise environment, people expected some form of traceability and audit trail. As of 2026, the ecosystem is converging with the OpenTelemetry conventions are stabilizing, frameworks increasingly instrument themselves automatically, and observability vendors are integrating GenAI traces into their standard monitoring stacks.

The reason this shift happened so quickly is partly technical and partly organizational. Technically, agents are complex distributed workflows involving models, tools, APIs, and data sources, so debugging them without traces is almost impossible. Organizationally, enterprises need governance and accountability—they have to be able to answer questions about why an agent took a particular action, especially in regulated environments.

In short, agent tracing is becoming the equivalent of logging and distributed tracing for traditional software. What started as experimental instrumentation in 2024 is rapidly becoming standard infrastructure for agentic systems. As more frameworks adopt common telemetry conventions and more monitoring platforms support them out of the box, tracking the internal steps of AI agents is likely to become a routine part of building and operating AI-driven applications.

Here are a few excellent references on OpenTelemetry that you might find valuable for learning about this observability framework. I’ve selected these based on their comprehensiveness, authority, and relevance, drawing from reliable sources as of early 2026. Each includes a brief description and direct link.

- Official OpenTelemetry Documentation (opentelemetry.io): The primary hub for all things OpenTelemetry, including specs, SDKs, instrumentation guides, and collector setup. It’s the best starting point for official, up-to-date information.

- Awesome OpenTelemetry (GitHub Repository): A curated, community-maintained list of resources, including books, blogs, tools, and educational series like “30 Days of OpenTelemetry.” Great for discovering a wide range of materials.

- Quick Guide to OpenTelemetry: Covers core concepts, instrumentation tutorials, and comparisons (e.g., vs. Prometheus), with practical export advice. Link: https://coralogix.com/guides/opentelemetry

Memes and Intent, Appetite, and Motivation of Context

Context engineering is not only about providing facts of provenance. It also includes prioritizing goals and motivations, which shape how decisions are made when information is incomplete.

These are the most important factors of context. It’s the primary sorting order, the prioritization of competing goals. Without it, logic and statistics will not match human behavior. Not because “emotions” are this magical thing, but because it marshals attention towards what matters—away from concerns of risk or lost opportunities.

Unfortunately, intents and motivations usually escape conventional description. I don’t believe LLMs can capture that. Maybe it’s captured in punctuation, expletives, ALL CAPS, numerous exclamation points, and now emojis within the text they’re trained on. But it doesn’t capture severity signaled by nuanced facial expressions and empathy that authors would assume from their readers, learned from their own experiences, which haven’t been assimilated into the training material of today’s LLMs.

Current multimodal models (vision + text, voice) close the gap a bit by learning some cross-modal correlations (angry faces co-occur with certain language patterns), but even there the model isn’t feeling stakes; it’s just modeling higher-dimensional co-occurrence statistics.

But many intents and motivations can’t really be expressed as a set of events. They are often expressed as symbols that require very much conditioning before having meaning. Examples include slogans, icons, brand logos, and powerful photographs (ex. “The Blue Marble”) and posters (ex. “Uncle Sam Wants You”).

Famous slogans like “Semper Fi” or “Think Differently” work because they’re compact, repeatable, emotionally loaded declarations of purpose. They go beyond simply informing. They infect the mind with a priority heuristic. That is, when everything is on fire and choices conflict, default to fidelity/loyalty/defense because that’s the valence that feels most alive, most “us”. Troops don’t follow them out of fear of the chain of command alone—they follow because the phrase has become a personal badge, a tribal signal, a reminder of the appetite for belonging, competence, and winning together. The commander’s intent builds on this by giving decentralized agents (soldiers, squads) a north star that survives communication breakdowns: “Here’s the end-state we crave; adapt ruthlessly to get there.”

A real meme (the real meaning of meme—Richard Dawkins, Susan Blackmore—not funny social media pictures) takes that mechanism and supercharges it for the digital/always-on era. Memes aren’t just slogans with pictures—they’re hyper-compressed cultural viruses that bundle:

- Visual shorthand (the image template that instantly evokes recognition and feeling)

- Ironic/humorous/distilled insight (making the “why” feel clever and earned, not preached)

- Shareability as social proof (spreading only when it resonates, so adoption signals genuine alignment)

- Adaptive mutation (people remix them, keeping the core intent alive while fitting new contexts)

This is why memes have powered everything from grassroots movements (ex. viral symbols in protests that rally without central coordination) to online subcultures that self-organize around a shared vibe. They align intent not through top-down orders or punishment avoidance, but by hijacking the brain’s reward circuits for pattern recognition, humor, in-group belonging, and outrage/affirmation. The result: decentralized actors resolve goal conflicts the same way because the meme has already ranked priorities for them (“this feels epic/right/funny/urgent → pursue it over safer/boring alternatives”).

In context engineering for LLMs and agentic systems, we’re still mostly stuck at the slogan level: we write crisp intent statements, priority clauses (“user win > everything”), or motivational anchors at the top of context. Those help, but they’re static text—flat, non-viral, missing the multimodal punch and cultural stickiness that makes a meme “real”.

A very effective meme can’t be effectively captured in words because it requires immersion into culture, into our soft, gooey brains. There’s no way to describe the meaning of the image of your family that makes you brave Thanksgiving traffic and air travel to get to them. In a sense, a meme only has the status of meme after it’s proven to be successful.

I used the family example to point out that we’re more meme-driven than we think. That’s because most of what we do is dictated by our job and rules of polite society—so often, what really drives us gets put on the backburner. But even the marching orders that take up most of our energy are meme-driven—in the worst form, by the mega meme of losing all our stuff.

So, not capturing these “lost for words” memes for context is having one hand tied behind your back. To capture the full power, future context needs to include meme-like artifacts that do the heavy lifting of alignment:

- Embed actual meme templates or references in persistent memory or system context, with instructions to remix/adapt them in reasoning.

- Inject viral patterns as high-priority examples: not dry rules, but distilled, emotionally valenced snippets that models pattern-match against.

- Multimodal reinforcement: Pair text intent with described or referenced visuals that simulate the meme’s visceral pull—since models increasingly handle image+text, this bridges the gap where pure text fails to marshal “appetite”.

- Dynamic remixing in loops: let agents evolve the “meme” across turns (ex. internal note: “Our squad chant now: ‘User First or Bust’—remix aggressively to fit new info”), mimicking how human groups keep the rallying cry fresh and owned.

The difference is night and day. A slogan says, “Prioritize towards this North Star”. A real meme makes prioritizing this feel inevitable, rewarding, and identity-defining—the same way “Who Dares Wins” doesn’t command SAS operators; it reprograms their risk/reward calculus so boldness becomes the default appetite.

In short, slogans align troops—whether literal military or figuratively in corporations, clubs—through shared purpose. Memes align swarms (human or agentic) through shared infectious purpose—faster, stickier, more resilient to noise. If context engineering wants agents that don’t just follow instructions but crave the right outcomes the way motivated people do, it has to engineer in that memetic layer. Not as gimmick, but as the ultimate intent-marshaling primitive.

But note that I don’t mean that memes are “irrational override”—they’re a compact encoding of a priority function / utility tradeoff that you still have to audit.

What to Do with All that AI Agent Context?

After we’ve equipped our AI agents to provide activity logs, which are captured by an event hub, stored in what is essentially an Event Ensemble, identified the processes, and aggregated cases into the Markov Model Ensemble, how do we make use of those models? That’s pretty much what Time Molecules is about, and it would be silly to rehash all its contents here. Just to list a few things, we can …

- Piece together how and why AI agents made a decision, validating the quality of the decision.

- Study the process the AI agents take to make a decision so that we can optimize the process.

- The primary paths.

- The exceptions and loops that might occur.

- Differences under different contexts—how different contexts affect other contexts.

- How the process changes over time.

- Study how processes affect other processes.

- Interestingly, how the scale of AI agent processes after existing processes.

As I mentioned earlier, Time Molecules is the Time-Oriented Counterpart to Thing-Oriented OLAP Cubes. Richer than analyzing sets of tuples, we analyze processes/systems. Time is the ubiquitous dimension across virtually all databases, a readily available key method of data integration. Analysis across time is systems thinking.

Every cycle of a process tells a story. It’s richer than just the simple answer at the end of the story. I wrote in another blog that stories are the transactional unit of human intelligence. I mused in the blog, Products of System 2:

Instead of recognizing states (snapshots) we recognize stories that happen in 4D spacetime. Could it be that the AI analogue of the minicolumns of our neocortex are Markov models—the aggregation of stories? A structure that is both recognizable but is more than a collection of qualities—a recognition of sequence, not just a fuzzy snapshot of an instant of time, but that fuzzy snapshot as just the first event in a learned model of probable next events.

If we didn’t capture the event log of AI agents, where each case is a story, I feel like we’ve stripped away the collective intelligence at the level of the population of AI agents—somewhat analogous to losing our culture at the societal level.

Background Data Validation with System ⅈ

Another System ⅈ background process is to validate facts (“fact check”) through a wide knowledge graph using SWRL and/or a library of Prolog snippets. For example, does moringa have 7x the Vitamin C of oranges?

The fact-checking needs to find logical inconsistencies, in particular, key data points. Inconsistencies will pop up in System 2 from System ⅈ like another kind of thought. It’s not just a matter of how many “votes” there are (how many times a value was cited), but perhaps it could be an outlier (more than two standard deviations) from the mean.

For the question of moringa having 7x the vitamin C of oranges, there are many questions one would ask as I describe in the Preface. We could have several templates for types of questions, for example, a template that helps LLMs categorize a service case. We ask the LLM to categorize the nature of our questions, match that category to a template of a set of questions, then apply the template. It might go something like this:

Before answering a question, the system should first determine what kind of question it is. A claim like “Does moringa have 7x the vitamin C of oranges?” is a comparative value claim, so it should trigger a validation template for numerical comparisons. Instead of merely counting how often the claim is repeated, System ⅈ breaks it into a tree of smaller questions: what form of moringa, what form of orange, what unit of measure, what serving basis, what source, and whether the cited values are typical or statistical outliers.

A knowledge graph helps identify the entities and relationships, while SWRL and Prolog rules test whether the comparison is logically valid and whether the value is plausible. In this way, System ⅈ performs background validation not as a popularity contest of citations, but as a structured reasoning process whose inconsistencies can surface into System 2 like another thought demanding attention.

Of course, we could ask an LLM directly, prompting in a way that it digs deeper than just finding a commonly claimed answer. Please see:

- moringa_oranges_vitamin_c.md for a response from ChatGPT 5.4 on this question

- moringa_oranges_vitamin_c_supergrok.md of the steps it took to fact check.

- generalized_chain_of_thought_events.md for a structured reference across fact-check categories created by SuperGrok.

- test_generalized_cot_events.md for a test of the labels provided from #3.

When viewing those two prompt/responses, remember that a semantic layer, database, knowledge graph, Prolog, even person could be called upon to address one of those steps. Not just other LLMs. In this case, for demo purposes, I just used the chat UIs.

More on this topic in a future blog.

There’s a lot we can do with statistics towards the goal of validating a fact. For example, compare moringa with other similar plants (an analogous relationship) to see how it worked. Then the steps are stored as a certification of the value along with the qualifiers so the template can be used continuously.

In short, tracing transforms AI agents from opaque black boxes into observable processes. Once those processes emit events, they can be studied through process mining and modeled through Time Molecules. The result is an architecture where the behavior of AI systems can be understood, validated, and improved over time.

Conclusion

The central argument of this blog is that AI agents should emit events describing their internal process steps, not merely produce final answers. These event streams allow us to reconstruct the context behind decisions, understand how agent systems actually behave in the wild, and improve the intelligence of those systems over time.

Much of the current conversation around tracing AI agents focuses on governance—auditing decisions, understanding failures, and satisfying regulatory requirements. Those motivations are important. As AI systems become capable of acting in the physical and economic world, organizations must be able to explain how and why decisions were made. Tracing provides the provenance needed for that accountability.

But there is the other part of the story beyond governance.

When AI agents emit structured events for each meaningful step in their workflows—interpreting prompts, retrieving knowledge, invoking tools, interacting with other agents, and producing responses—they generate something far more valuable than an audit trail. They generate process data. And process data is one of the richest sources of intelligence available in complex systems.

This is where the ideas in Time Molecules come into play. The book focuses on the architecture that captures and organizes these events—the Event Ensemble—and on the modeling layer that analyzes them through linked Markov models—the Markov Model Ensemble. Together, these components provide a way to study the statistical behavior of processes at scale. Rather than examining a single agent’s reasoning path, we can observe patterns across entire populations of agents performing similar tasks.

Once those event streams are available, a wide range of analyses becomes possible:

- Discovering the most common workflows agents use to solve problems

- Identifying alternative paths when agents encounter ambiguity or failure

- Detecting anomalous or suspicious behavior

- Comparing the performance of different agent designs

- Observing how processes evolve as models, prompts, and tools change over time

In other words, tracing transforms AI systems from opaque collections of outputs into observable ecosystems of processes.

Seen from this perspective, event logging is not merely a debugging tool or regulation. It is a mechanism for collective learning. Each execution of an agent becomes a case; each sequence of events becomes a story; and across millions or billions of such stories we begin to see patterns that no individual execution could reveal.

Human intelligence works in a similar way. Culture accumulates knowledge by remembering stories—what worked, what failed, and what patterns emerged over time. If we deploy large populations of AI agents but only record their outputs, we lose that narrative layer. The system produces answers, but it does not accumulate process knowledge.

Aside: AI Agents (Robots) in the Physical World

Around the time this blog was published, Tesla robots were appearing more frequently in demonstrations performing simple tasks in the physical world. In many ways these robots can be thought of as composite AI agents—systems combining perception, planning, control, and increasingly AI models coordinating their actions. A robot navigating a workspace or picking up objects is executing a process just like a software agent resolving a prompt. Each adjustment, decision, or interaction could be recorded as an event.

That is where the value of the architecture described in Time Molecules becomes more evident. The Event Ensemble—essentially an “event data warehouse”—stores the underlying sequences of events as they actually occurred. From there, the Markov Model Ensemble generalizes those sequences into transition probabilities and properties such as the mean and standard deviation of the time between steps. Instead of merely seeing that a system chose option A rather than option B, we begin to see the story of how those choices typically arise and the conditions under which different paths tend to occur.

This is why I describe Time Molecules as the time-oriented counterpart to thing-oriented OLAP cubes. OLAP aggregates facts about things, while Time Molecules aggregates stories about processes unfolding through time. Time Molecules is about bringing systems thinking closer to the data—allowing large populations of AI agents, whether software or robotic, to learn not just from isolated decisions but from the statistical patterns of entire processes, within an ecosystem of other processes.